id int64 599M 3.48B | number int64 1 7.8k | title stringlengths 1 290 | state stringclasses 2 values | comments listlengths 0 30 | created_at timestamp[s]date 2020-04-14 10:18:02 2025-10-05 06:37:50 | updated_at timestamp[s]date 2020-04-27 16:04:17 2025-10-05 10:32:43 | closed_at timestamp[s]date 2020-04-14 12:01:40 2025-10-01 13:56:03 ⌀ | body stringlengths 0 228k ⌀ | user stringlengths 3 26 | html_url stringlengths 46 51 | pull_request dict | is_pull_request bool 2 classes |
|---|---|---|---|---|---|---|---|---|---|---|---|---|

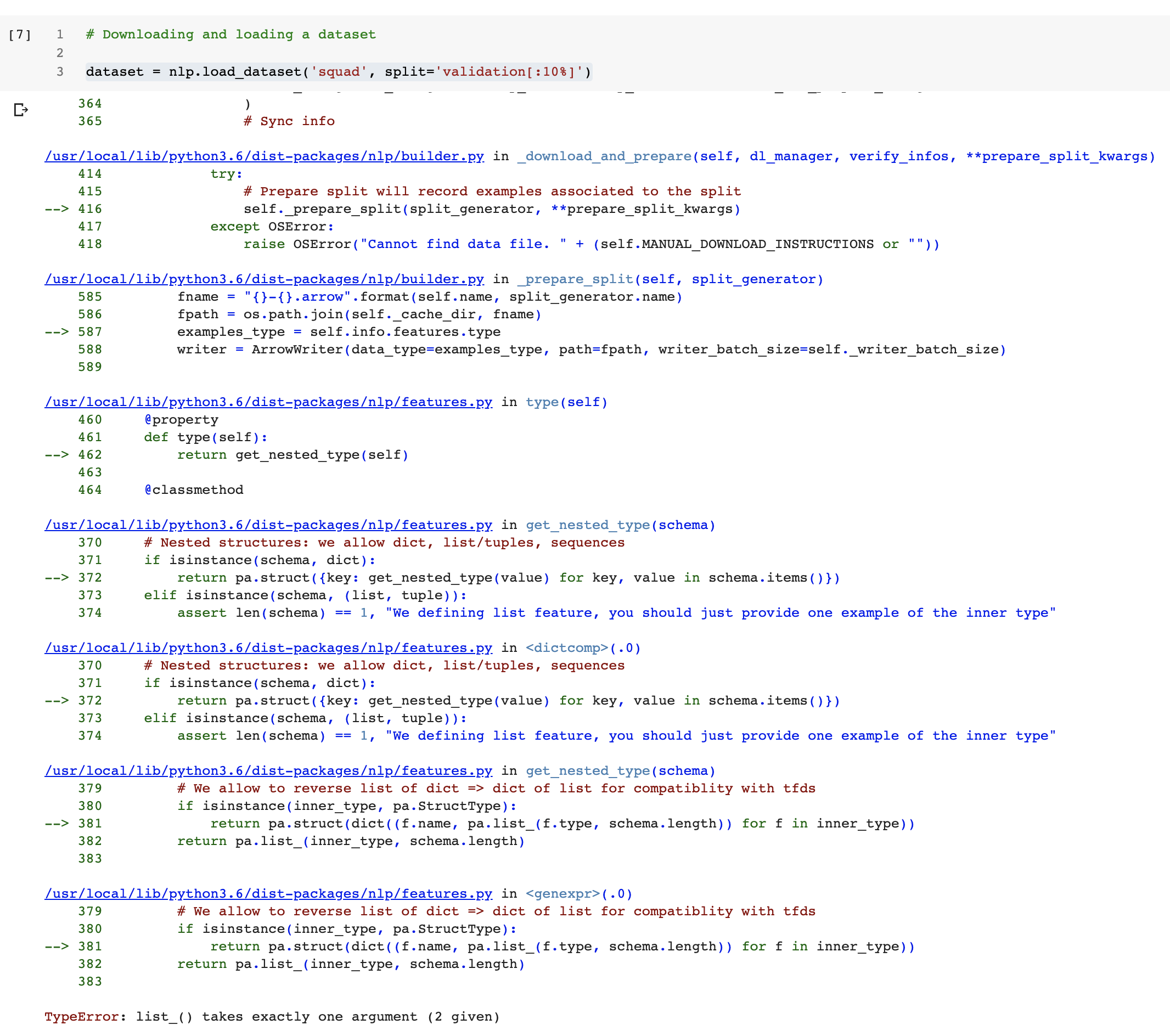

646,792,487 | 319 | Nested sequences with dicts | closed | [] | 2020-06-27T23:45:17 | 2020-07-03T10:22:00 | 2020-07-03T10:22:00 | Am pretty much finished [adding a dataset](https://github.com/ghomasHudson/nlp/blob/DocRED/datasets/docred/docred.py) for [DocRED](https://github.com/thunlp/DocRED), but am getting an error when trying to add a nested `nlp.features.sequence(nlp.features.sequence({key:value,...}))`.

The original data is in this form... | ghomasHudson | https://github.com/huggingface/datasets/issues/319 | null | false |

646,682,840 | 318 | Multitask | closed | [] | 2020-06-27T13:27:29 | 2022-07-06T15:19:57 | 2022-07-06T15:19:57 | Following our discussion in #217, I've implemented a first working version of `MultiDataset`.

There's a function `build_multitask()` which takes either individual `nlp.Dataset`s or `dicts` of splits and constructs `MultiDataset`(s). I've added a notebook with example usage.

I've implemented many of the `nlp.Datas... | ghomasHudson | https://github.com/huggingface/datasets/pull/318 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/318",

"html_url": "https://github.com/huggingface/datasets/pull/318",

"diff_url": "https://github.com/huggingface/datasets/pull/318.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/318.patch",

"merged_at": null

} | true |

646,555,384 | 317 | Adding a dataset with multiple subtasks | closed | [] | 2020-06-26T23:14:19 | 2020-10-27T15:36:52 | 2020-10-27T15:36:52 | I intend to add the datasets of the MT Quality Estimation shared tasks to `nlp`. However, they have different subtasks -- such as word-level, sentence-level and document-level quality estimation, each of which has different language pairs, and some of the data is reused in different subtasks.

For example, in [QE 201... | erickrf | https://github.com/huggingface/datasets/issues/317 | null | false |

646,366,450 | 316 | add AG News dataset | closed | [] | 2020-06-26T16:11:58 | 2020-06-30T09:58:08 | 2020-06-30T08:31:55 | adds support for the AG-News topic classification dataset | jxmorris12 | https://github.com/huggingface/datasets/pull/316 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/316",

"html_url": "https://github.com/huggingface/datasets/pull/316",

"diff_url": "https://github.com/huggingface/datasets/pull/316.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/316.patch",

"merged_at": "2020-06-30T08:31:55"... | true |

645,888,943 | 315 | [Question] Best way to batch a large dataset? | open | [] | 2020-06-25T22:30:20 | 2020-10-27T15:38:17 | null | I'm training on large datasets such as Wikipedia and BookCorpus. Following the instructions in [the tutorial notebook](https://colab.research.google.com/github/huggingface/nlp/blob/master/notebooks/Overview.ipynb), I see the following recommended for TensorFlow:

```python

train_tf_dataset = train_tf_dataset.filter(... | jarednielsen | https://github.com/huggingface/datasets/issues/315 | null | false |

645,461,174 | 314 | Fixed singlular very minor spelling error | closed | [] | 2020-06-25T10:45:59 | 2020-06-26T08:46:41 | 2020-06-25T12:43:59 | An instance of "independantly" was changed to "independently". That's all. | SchizoidBat | https://github.com/huggingface/datasets/pull/314 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/314",

"html_url": "https://github.com/huggingface/datasets/pull/314",

"diff_url": "https://github.com/huggingface/datasets/pull/314.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/314.patch",

"merged_at": "2020-06-25T12:43:59"... | true |

645,390,088 | 313 | Add MWSC | closed | [] | 2020-06-25T09:22:02 | 2020-06-30T08:28:11 | 2020-06-30T08:28:11 | Adding the [Modified Winograd Schema Challenge](https://github.com/salesforce/decaNLP/blob/master/local_data/schema.txt) dataset which formed part of the [decaNLP](http://decanlp.com/) benchmark. Not sure how much use people would find for it outside of the benchmark, but it is general purpose.

Code is heavily bo... | ghomasHudson | https://github.com/huggingface/datasets/pull/313 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/313",

"html_url": "https://github.com/huggingface/datasets/pull/313",

"diff_url": "https://github.com/huggingface/datasets/pull/313.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/313.patch",

"merged_at": "2020-06-30T08:28:10"... | true |

645,025,561 | 312 | [Feature request] Add `shard()` method to dataset | closed | [] | 2020-06-24T22:48:33 | 2020-07-06T12:35:36 | 2020-07-06T12:35:36 | Currently, to shard a dataset into 10 pieces on different ranks, you can run

```python

rank = 3 # for example

size = 10

dataset = nlp.load_dataset('wikitext', 'wikitext-2-raw-v1', split=f"train[{rank*10}%:{(rank+1)*10}%]")

```

However, this breaks down if you have a number of ranks that doesn't divide cleanly... | jarednielsen | https://github.com/huggingface/datasets/issues/312 | null | false |
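
A possible shape for the requested behaviour, as a rough sketch only: the helper below (`shard_bounds`, a hypothetical name that is not part of `nlp`) computes contiguous row ranges for any number of ranks and spreads the remainder over the first shards. It assumes the total row count is known up front.

```python
from typing import Tuple


def shard_bounds(num_rows: int, num_shards: int, index: int) -> Tuple[int, int]:
    """Return the [start, stop) row range for shard `index` out of `num_shards`."""
    if num_shards <= 0:
        raise ValueError("num_shards must be positive")
    if not 0 <= index < num_shards:
        raise ValueError("index must satisfy 0 <= index < num_shards")
    # Every shard gets `base` rows; the first `extra` shards get one more.
    base, extra = divmod(num_rows, num_shards)
    start = index * base + min(index, extra)
    stop = start + base + (1 if index < extra else 0)
    return start, stop


if __name__ == "__main__":
    for rank in range(3):
        print(rank, shard_bounds(10, 3, rank))
```

With 10 rows and 3 ranks the ranges are 0 to 4, 4 to 7 and 7 to 10, so no row is dropped or duplicated even though 3 does not divide 10.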

645,013,131 | 311 | Add qa_zre | closed | [] | 2020-06-24T22:17:22 | 2020-06-29T16:37:38 | 2020-06-29T16:37:38 | Adding the QA-ZRE dataset from ["Zero-Shot Relation Extraction via Reading Comprehension"](http://nlp.cs.washington.edu/zeroshot/).

A common processing step seems to be replacing the `XXX` placeholder with the `subject`. I've left this out as it's something you could easily do with `map`. | ghomasHudson | https://github.com/huggingface/datasets/pull/311 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/311",

"html_url": "https://github.com/huggingface/datasets/pull/311",

"diff_url": "https://github.com/huggingface/datasets/pull/311.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/311.patch",

"merged_at": "2020-06-29T16:37:38"... | true |

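The placeholder substitution mentioned in #311 could look roughly like the function below. The field names `question` and `subject` are assumptions about the example layout, and `fill_placeholder` is a hypothetical helper, not something shipped with the dataset script.

```python
def fill_placeholder(example: dict, placeholder: str = "XXX") -> dict:
    """Return a copy of `example` with the placeholder in the question
    replaced by the example's subject.

    Assumes (hypothetically) that each example has `question` and
    `subject` string fields.
    """
    filled = dict(example)  # shallow copy so the input is left untouched
    filled["question"] = example["question"].replace(placeholder, example["subject"])
    return filled


if __name__ == "__main__":
    row = {"question": "Where was XXX born?", "subject": "Ada Lovelace"}
    print(fill_placeholder(row)["question"])
```

A per-example function of this shape is the kind of thing one would pass to `map`.
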
644,806,720 | 310 | add wikisql | closed | [] | 2020-06-24T18:00:35 | 2020-06-25T12:32:25 | 2020-06-25T12:32:25 | Adding the [WikiSQL](https://github.com/salesforce/WikiSQL) dataset.

Interesting things to note:

- Have copied the function (`_convert_to_human_readable`) which converts the SQL query to a human-readable (string) format as this is what most people will want when actually using this dataset for NLP applications.

- ... | ghomasHudson | https://github.com/huggingface/datasets/pull/310 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/310",

"html_url": "https://github.com/huggingface/datasets/pull/310",

"diff_url": "https://github.com/huggingface/datasets/pull/310.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/310.patch",

"merged_at": "2020-06-25T12:32:25"... | true |

644,783,822 | 309 | Add narrative qa | closed | [] | 2020-06-24T17:26:18 | 2020-09-03T09:02:10 | 2020-09-03T09:02:09 | Test cases for dummy data don't pass

Only contains data for summaries (not whole story) | Varal7 | https://github.com/huggingface/datasets/pull/309 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/309",

"html_url": "https://github.com/huggingface/datasets/pull/309",

"diff_url": "https://github.com/huggingface/datasets/pull/309.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/309.patch",

"merged_at": null

} | true |

644,195,251 | 308 | Specify utf-8 encoding for MRPC files | closed | [] | 2020-06-23T22:44:36 | 2020-06-25T12:52:21 | 2020-06-25T12:16:10 | Fixes #307, again probably a Windows-related issue. | patpizio | https://github.com/huggingface/datasets/pull/308 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/308",

"html_url": "https://github.com/huggingface/datasets/pull/308",

"diff_url": "https://github.com/huggingface/datasets/pull/308.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/308.patch",

"merged_at": "2020-06-25T12:16:09"... | true |

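For context on #307 and #308: on Windows, `open()` without an `encoding` argument typically uses the locale's preferred encoding rather than UTF-8, which is a common source of `UnicodeDecodeError`. A minimal sketch of the usual remedy, using a hypothetical `read_lines` helper (this is not the actual MRPC loading code):

```python
import os
import tempfile
from typing import List


def read_lines(path: str) -> List[str]:
    """Read a text file as UTF-8 regardless of the platform default."""
    # Passing encoding explicitly avoids depending on the locale.
    with open(path, encoding="utf-8") as f:
        return [line.rstrip("\n") for line in f]


if __name__ == "__main__":
    tmp_dir = tempfile.mkdtemp()
    sample = os.path.join(tmp_dir, "sample.tsv")
    # Write bytes directly so the file content is UTF-8 on every platform.
    with open(sample, "wb") as f:
        f.write("café\tnaïve\n".encode("utf-8"))
    print(read_lines(sample))
```
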
644,187,262 | 307 | Specify encoding for MRPC | closed | [] | 2020-06-23T22:24:49 | 2020-06-25T12:16:09 | 2020-06-25T12:16:09 | Same as #242, but with MRPC: on Windows, I get a `UnicodeDecodeError` when I try to download the dataset:

```python

dataset = nlp.load_dataset('glue', 'mrpc')

```

```python

Downloading and preparing dataset glue/mrpc (download: Unknown size, generated: Unknown size, total: Unknown size) to C:\Users\Python\.cache... | patpizio | https://github.com/huggingface/datasets/issues/307 | null | false |

644,176,078 | 306 | add pg19 dataset | closed | [] | 2020-06-23T22:03:52 | 2020-07-06T07:55:59 | 2020-07-06T07:55:59 | https://github.com/huggingface/nlp/issues/274

Add functioning PG19 dataset with dummy data

`cos_e.py` was just auto-linted by `make style` | lucidrains | https://github.com/huggingface/datasets/pull/306 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/306",

"html_url": "https://github.com/huggingface/datasets/pull/306",

"diff_url": "https://github.com/huggingface/datasets/pull/306.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/306.patch",

"merged_at": "2020-07-06T07:55:59"... | true |

644,148,149 | 305 | Importing downloaded package repository fails | closed | [] | 2020-06-23T21:09:05 | 2020-07-30T16:44:23 | 2020-07-30T16:44:23 | The `get_imports` function in `src/nlp/load.py` has a feature to download a package as a zip archive of the github repository and import functions from the unpacked directory. This is used for example in the `metrics/coval.py` file, and would be useful to add BLEURT (@ankparikh).

Currently however, the code seems to... | yjernite | https://github.com/huggingface/datasets/issues/305 | null | false |

644,091,970 | 304 | Problem while printing doc string when instantiating multiple metrics. | closed | [] | 2020-06-23T19:32:05 | 2020-07-22T09:50:58 | 2020-07-22T09:50:58 | When I load more than one metric and try to print the doc string of a particular metric, it shows the doc strings of all imported metrics one after the other, which looks quite confusing and clumsy.

Attached [Colab](https://colab.research.google.com/drive/13H0ZgyQ2se0mqJ2yyew0bNEgJuHaJ8H3?usp=sharing) Notebook for problem ... | codehunk628 | https://github.com/huggingface/datasets/issues/304 | null | false |

643,912,464 | 303 | allow to move files across file systems | closed | [] | 2020-06-23T14:56:08 | 2020-06-23T15:08:44 | 2020-06-23T15:08:43 | Users are allowed to use the `cache_dir` that they want.

Therefore it can happen that we try to move files across filesystems.

We were using `os.rename`, which doesn't allow that, so I changed some of the calls to `shutil.move`.

This should fix #301 | lhoestq | https://github.com/huggingface/datasets/pull/303 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/303",

"html_url": "https://github.com/huggingface/datasets/pull/303",

"diff_url": "https://github.com/huggingface/datasets/pull/303.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/303.patch",

"merged_at": "2020-06-23T15:08:43"... | true |

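The cross-filesystem case described in #303 can be sketched as follows. `shutil.move` uses a plain rename when the destination is on the same filesystem and otherwise copies the file and removes the source; `move_into_cache` is a hypothetical wrapper used only for illustration, not the library's code.

```python
import os
import shutil


def move_into_cache(src: str, cache_dir: str) -> str:
    """Move `src` into `cache_dir` and return the new path."""
    os.makedirs(cache_dir, exist_ok=True)
    dst = os.path.join(cache_dir, os.path.basename(src))
    # Unlike os.rename, shutil.move also works when src and dst
    # live on different filesystems.
    shutil.move(src, dst)
    return dst
```
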
643,910,418 | 302 | Question - Sign Language Datasets | closed | [] | 2020-06-23T14:53:40 | 2020-11-25T11:25:33 | 2020-11-25T11:25:33 | An emerging field in NLP is SLP - sign language processing.

I was wondering about adding datasets here, specifically because it's shaping up to be large and easily usable.

The metrics for sign language to text translation are the same.

So, what do you think about (me, or others) adding datasets here?

An exa... | AmitMY | https://github.com/huggingface/datasets/issues/302 | null | false |

643,763,525 | 301 | Setting cache_dir gives error on wikipedia download | closed | [] | 2020-06-23T11:31:44 | 2020-06-24T07:05:07 | 2020-06-24T07:05:07 | First of all thank you for a super handy library! I'd like to download large files to a specific drive so I set `cache_dir=my_path`. This works fine with e.g. imdb and squad. But on wikipedia I get an error:

```

nlp.load_dataset('wikipedia', '20200501.de', split = 'train', cache_dir=my_path)

```

```

OSError ... | hallvagi | https://github.com/huggingface/datasets/issues/301 | null | false |

643,688,304 | 300 | Fix bertscore references | closed | [] | 2020-06-23T09:38:59 | 2020-06-23T14:47:38 | 2020-06-23T14:47:37 | I added some type checking for metrics. There was an issue where a metric could interpret a string as a list. A `ValueError` is raised if a string is given instead of a list.

Moreover I added support for both strings and lists of strings for `references` in `bertscore`, as is the case in the original code.

Both... | lhoestq | https://github.com/huggingface/datasets/pull/300 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/300",

"html_url": "https://github.com/huggingface/datasets/pull/300",

"diff_url": "https://github.com/huggingface/datasets/pull/300.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/300.patch",

"merged_at": "2020-06-23T14:47:36"... | true |

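The check described in #300 guards against a bare string being iterated character by character as if it were a list of examples. A hedged sketch of such a guard follows; `ensure_list` is a hypothetical name and the real implementation may well differ.

```python
def ensure_list(value, name: str) -> list:
    """Return `value` as a list, rejecting a bare string.

    A str is iterable, so without this check "abc" would silently be
    treated as the three examples "a", "b" and "c".
    """
    if isinstance(value, str):
        raise ValueError(
            f"{name} must be a list of examples, got a single string: {value!r}"
        )
    return list(value)
```
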
643,611,557 | 299 | remove some print in snli file | closed | [] | 2020-06-23T07:46:06 | 2020-06-23T08:10:46 | 2020-06-23T08:10:44 | This PR removes unwanted `print` statements in some files such as `snli.py` | mariamabarham | https://github.com/huggingface/datasets/pull/299 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/299",

"html_url": "https://github.com/huggingface/datasets/pull/299",

"diff_url": "https://github.com/huggingface/datasets/pull/299.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/299.patch",

"merged_at": "2020-06-23T08:10:44"... | true |

643,603,804 | 298 | Add searchable datasets | closed | [] | 2020-06-23T07:33:03 | 2020-06-26T07:50:44 | 2020-06-26T07:50:43 | # Better support for Numpy format + Add Indexed Datasets

I was working on adding Indexed Datasets but in the meantime I had to also add more support for Numpy arrays in the lib.

## Better support for Numpy format

New features:

- New fast method to convert Numpy arrays from Arrow structure (up to x100 speed up... | lhoestq | https://github.com/huggingface/datasets/pull/298 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/298",

"html_url": "https://github.com/huggingface/datasets/pull/298",

"diff_url": "https://github.com/huggingface/datasets/pull/298.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/298.patch",

"merged_at": "2020-06-26T07:50:43"... | true |

643,444,625 | 297 | Error in Demo for Specific Datasets | closed | [] | 2020-06-23T00:38:42 | 2020-07-17T17:43:06 | 2020-07-17T17:43:06 | Selecting `natural_questions` or `newsroom` dataset in the online demo results in an error similar to the following.

| s-jse | https://github.com/huggingface/datasets/issues/297 | null | false |

643,423,717 | 296 | snli -1 labels | closed | [] | 2020-06-22T23:33:30 | 2020-06-23T14:41:59 | 2020-06-23T14:41:58 | I'm trying to train a model on the SNLI dataset. Why does it have so many -1 labels?

```

import nlp

from collections import Counter

data = nlp.load_dataset('snli')['train']

print(Counter(data['label']))

Counter({0: 183416, 2: 183187, 1: 182764, -1: 785})

```

| jxmorris12 | https://github.com/huggingface/datasets/issues/296 | null | false |
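
For readers hitting the same question: to my understanding, `-1` marks SNLI pairs that have no gold label (the annotators did not reach a majority), and such rows are commonly dropped before training. A small sketch on plain Python dicts, with made-up rows and a hypothetical `drop_unlabeled` helper:

```python
from collections import Counter


def drop_unlabeled(rows, missing=-1):
    """Keep only the rows whose label is not the `missing` marker."""
    return [row for row in rows if row["label"] != missing]


# Made-up rows, for illustration only.
rows = [
    {"premise": "p1", "hypothesis": "h1", "label": 0},
    {"premise": "p2", "hypothesis": "h2", "label": -1},
    {"premise": "p3", "hypothesis": "h3", "label": 2},
]
kept = drop_unlabeled(rows)

if __name__ == "__main__":
    print(Counter(row["label"] for row in kept))
```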

643,245,412 | 295 | Improve input warning for evaluation metrics | closed | [] | 2020-06-22T17:28:57 | 2020-06-23T14:47:37 | 2020-06-23T14:47:37 | Hi,

I am the author of `bert_score`. Recently, we received [ an issue ](https://github.com/Tiiiger/bert_score/issues/62) reporting a problem in using `bert_score` from the `nlp` package (also see #238 in this repo). After looking into this, I realized that the problem arises from the format `nlp.Metric` takes inpu... | Tiiiger | https://github.com/huggingface/datasets/issues/295 | null | false |

643,181,179 | 294 | Cannot load arxiv dataset on MacOS? | closed | [] | 2020-06-22T15:46:55 | 2020-06-30T15:25:10 | 2020-06-30T15:25:10 | I am having trouble loading the `"arxiv"` config from the `"scientific_papers"` dataset on MacOS. When I try loading the dataset with:

```python

arxiv = nlp.load_dataset("scientific_papers", "arxiv")

```

I get the following stack trace:

```bash

JSONDecodeError Traceback (most recen... | JohnGiorgi | https://github.com/huggingface/datasets/issues/294 | null | false |

642,942,182 | 293 | Don't test community datasets | closed | [] | 2020-06-22T10:15:33 | 2020-06-22T11:07:00 | 2020-06-22T11:06:59 | This PR disables testing for community datasets on aws.

It should fix the CI that is currently failing. | lhoestq | https://github.com/huggingface/datasets/pull/293 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/293",

"html_url": "https://github.com/huggingface/datasets/pull/293",

"diff_url": "https://github.com/huggingface/datasets/pull/293.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/293.patch",

"merged_at": "2020-06-22T11:06:59"... | true |

642,897,797 | 292 | Update metadata for x_stance dataset | closed | [] | 2020-06-22T09:13:26 | 2020-06-23T08:07:24 | 2020-06-23T08:07:24 | Thank you for featuring the x_stance dataset in your library. This PR updates some metadata:

- Citation: Replace preprint with proceedings

- URL: Use a URL with long-term availability

| jvamvas | https://github.com/huggingface/datasets/pull/292 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/292",

"html_url": "https://github.com/huggingface/datasets/pull/292",

"diff_url": "https://github.com/huggingface/datasets/pull/292.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/292.patch",

"merged_at": "2020-06-23T08:07:24"... | true |

642,688,450 | 291 | break statement not required | closed | [] | 2020-06-22T01:40:55 | 2020-06-23T17:57:58 | 2020-06-23T09:37:02 | mayurnewase | https://github.com/huggingface/datasets/pull/291 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/291",

"html_url": "https://github.com/huggingface/datasets/pull/291",

"diff_url": "https://github.com/huggingface/datasets/pull/291.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/291.patch",

"merged_at": null

} | true | |

641,978,286 | 290 | ConnectionError - Eli5 dataset download | closed | [] | 2020-06-19T13:40:33 | 2020-06-20T13:22:24 | 2020-06-20T13:22:24 | Hi, I have a problem with downloading Eli5 dataset. When typing `nlp.load_dataset('eli5')`, I get ConnectionError: Couldn't reach https://storage.googleapis.com/huggingface-nlp/cache/datasets/eli5/LFQA_reddit/1.0.0/explain_like_im_five-train_eli5.arrow

I would appreciate if you could help me with this issue. | JovanNj | https://github.com/huggingface/datasets/issues/290 | null | false |

641,934,194 | 289 | update xsum | closed | [] | 2020-06-19T12:28:32 | 2020-06-22T13:27:26 | 2020-06-22T07:20:07 | This PR makes the following update to the xsum dataset:

- Manual download is not required anymore

- dataset can be loaded as follows: `nlp.load_dataset('xsum')`

**Important**

Instead of using an outdated url to download the data: "https://raw.githubusercontent.com/EdinburghNLP/XSum/master/XSum-Dataset/XSum... | mariamabarham | https://github.com/huggingface/datasets/pull/289 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/289",

"html_url": "https://github.com/huggingface/datasets/pull/289",

"diff_url": "https://github.com/huggingface/datasets/pull/289.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/289.patch",

"merged_at": "2020-06-22T07:20:07"... | true |

641,888,610 | 288 | Error at the first example in README: AttributeError: module 'dill' has no attribute '_dill' | closed | [] | 2020-06-19T11:01:22 | 2020-06-21T09:05:11 | 2020-06-21T09:05:11 | /Users/parasol_tree/anaconda3/lib/python3.6/site-packages/tensorflow/python/framework/dtypes.py:469: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint8 = np.dtype([("qint8", np.int8, 1)])

/Users/... | wutong8023 | https://github.com/huggingface/datasets/issues/288 | null | false |

641,800,227 | 287 | fix squad_v2 metric | closed | [] | 2020-06-19T08:24:46 | 2020-06-19T08:33:43 | 2020-06-19T08:33:41 | Fix #280

The imports were wrong | lhoestq | https://github.com/huggingface/datasets/pull/287 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/287",

"html_url": "https://github.com/huggingface/datasets/pull/287",

"diff_url": "https://github.com/huggingface/datasets/pull/287.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/287.patch",

"merged_at": "2020-06-19T08:33:41"... | true |

641,585,758 | 286 | Add ANLI dataset. | closed | [] | 2020-06-18T22:27:30 | 2020-06-22T12:23:27 | 2020-06-22T12:23:27 | I completed all the steps in https://github.com/huggingface/nlp/blob/master/CONTRIBUTING.md#how-to-add-a-dataset and pushed the code for ANLI. Please let me know if there are any errors. | easonnie | https://github.com/huggingface/datasets/pull/286 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/286",

"html_url": "https://github.com/huggingface/datasets/pull/286",

"diff_url": "https://github.com/huggingface/datasets/pull/286.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/286.patch",

"merged_at": "2020-06-22T12:23:26"... | true |

641,360,702 | 285 | Consistent formatting of citations | closed | [] | 2020-06-18T16:25:23 | 2020-06-22T08:09:25 | 2020-06-22T08:09:24 | #283 | mariamabarham | https://github.com/huggingface/datasets/pull/285 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/285",

"html_url": "https://github.com/huggingface/datasets/pull/285",

"diff_url": "https://github.com/huggingface/datasets/pull/285.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/285.patch",

"merged_at": "2020-06-22T08:09:23"... | true |

641,337,217 | 284 | Fix manual download instructions | closed | [] | 2020-06-18T15:59:57 | 2020-06-19T08:24:21 | 2020-06-19T08:24:19 | This PR replaces the static `DatasetBuilder` variable `MANUAL_DOWNLOAD_INSTRUCTIONS` by a property function `manual_download_instructions()`.

Some datasets like XTREME and all WMT need the manual data dir only for a small fraction of the possible configs.

After some brainstorming with @mariamabarham and @lhoestq... | patrickvonplaten | https://github.com/huggingface/datasets/pull/284 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/284",

"html_url": "https://github.com/huggingface/datasets/pull/284",

"diff_url": "https://github.com/huggingface/datasets/pull/284.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/284.patch",

"merged_at": "2020-06-19T08:24:19"... | true |

641,270,439 | 283 | Consistent formatting of citations | closed | [] | 2020-06-18T14:48:45 | 2020-06-22T17:30:46 | 2020-06-22T17:30:46 | The citations are all of a different format, some have "```" and have text inside, others are proper bibtex.

Can we make it so that they are all proper citations, i.e. parseable according to the bibtex spec:

https://bibtexparser.readthedocs.io/en/master/ | srush | https://github.com/huggingface/datasets/issues/283 | null | false |

641,217,759 | 282 | Update dataset_info from gcs | closed | [] | 2020-06-18T13:41:15 | 2020-06-18T16:24:52 | 2020-06-18T16:24:51 | Some datasets are hosted on gcs (wikipedia for example). In this PR I make sure that, when a user loads such datasets, the file_instructions are built using the dataset_info.json from gcs and not from the info extracted from the local `dataset_infos.json` (the one that contains the info for each config). Indeed local fi... | lhoestq | https://github.com/huggingface/datasets/pull/282 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/282",

"html_url": "https://github.com/huggingface/datasets/pull/282",

"diff_url": "https://github.com/huggingface/datasets/pull/282.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/282.patch",

"merged_at": "2020-06-18T16:24:51"... | true |

641,067,856 | 281 | Private/sensitive data | closed | [] | 2020-06-18T09:47:27 | 2020-06-20T13:15:12 | 2020-06-20T13:15:12 | Hi all,

Thanks for this fantastic library, it makes it very easy to do prototyping for NLP projects interchangeably between TF/Pytorch.

Unfortunately, there is data that cannot easily be shared publicly as it may contain sensitive information.

Is there support/a plan to support such data with NLP, e.g. by readin... | MFreidank | https://github.com/huggingface/datasets/issues/281 | null | false |

640,677,615 | 280 | Error with SquadV2 Metrics | closed | [] | 2020-06-17T19:10:54 | 2020-06-19T08:33:41 | 2020-06-19T08:33:41 | I can't seem to import squad v2 metrics.

**squad_metric = nlp.load_metric('squad_v2')**

**This throws me an error.:**

```

ImportError Traceback (most recent call last)

<ipython-input-8-170b6a170555> in <module>

----> 1 squad_metric = nlp.load_metric('squad_v2')

~/env/lib6... | avinregmi | https://github.com/huggingface/datasets/issues/280 | null | false |

640,611,692 | 279 | Dataset Preprocessing Cache with .map() function not working as expected | closed | [] | 2020-06-17T17:17:21 | 2021-07-06T21:43:28 | 2021-04-18T23:43:49 | I've been having issues with reproducibility when loading and processing datasets with the `.map` function. I was only able to resolve them by clearing all of the cache files on my system.

Is there a way to disable using the cache when processing a dataset? As I make minor processing changes on the same dataset, I ... | sarahwie | https://github.com/huggingface/datasets/issues/279 | null | false |

640,518,917 | 278 | MemoryError when loading German Wikipedia | closed | [] | 2020-06-17T15:06:21 | 2020-06-19T12:53:02 | 2020-06-19T12:53:02 | Hi, first off let me say thank you for all the awesome work you're doing at Hugging Face across all your projects (NLP, Transformers, Tokenizers) - they're all amazing contributions to us working with NLP models :)

I'm trying to download the German Wikipedia dataset as follows:

```

wiki = nlp.load_dataset("wikip... | gregburman | https://github.com/huggingface/datasets/issues/278 | null | false |

640,163,053 | 277 | Empty samples in glue/qqp | closed | [] | 2020-06-17T05:54:52 | 2020-06-21T00:21:45 | 2020-06-21T00:21:45 | ```

qqp = nlp.load_dataset('glue', 'qqp')

print(qqp['train'][310121])

print(qqp['train'][362225])

```

```

{'question1': 'How can I create an Android app?', 'question2': '', 'label': 0, 'idx': 310137}

{'question1': 'How can I develop android app?', 'question2': '', 'label': 0, 'idx': 362246}

```

Notice that que... | richarddwang | https://github.com/huggingface/datasets/issues/277 | null | false |

639,490,858 | 276 | Fix metric compute (original_instructions missing) | closed | [] | 2020-06-16T08:52:01 | 2020-06-18T07:41:45 | 2020-06-18T07:41:44 | When loading arrow data we added in cc8d250 a way to specify the instructions that were used to store them with the loaded dataset.

However metrics load data the same way but don't need instructions (we use one single file).

In this PR I just make `original_instructions` optional when reading files to load a `Datas... | lhoestq | https://github.com/huggingface/datasets/pull/276 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/276",

"html_url": "https://github.com/huggingface/datasets/pull/276",

"diff_url": "https://github.com/huggingface/datasets/pull/276.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/276.patch",

"merged_at": "2020-06-18T07:41:43"... | true |

639,439,052 | 275 | NonMatchingChecksumError when loading pubmed dataset | closed | [] | 2020-06-16T07:31:51 | 2020-06-19T07:37:07 | 2020-06-19T07:37:07 | I get this error when I run `nlp.load_dataset('scientific_papers', 'pubmed', split = 'train[:50%]')`.

The error is:

```

---------------------------------------------------------------------------

NonMatchingChecksumError Traceback (most recent call last)

<ipython-input-2-7742dea167d0> in <module... | DavideStenner | https://github.com/huggingface/datasets/issues/275 | null | false |

639,156,625 | 274 | PG-19 | closed | [] | 2020-06-15T21:02:26 | 2020-07-06T15:35:02 | 2020-07-06T15:35:02 | Hi, and thanks for all your open-sourced work, as always!

I was wondering if you would be open to adding PG-19 to your collection of datasets. https://github.com/deepmind/pg19 It is often used for benchmarking long-range language modeling. | lucidrains | https://github.com/huggingface/datasets/issues/274 | null | false |

638,968,054 | 273 | update cos_e to add cos_e v1.0 | closed | [] | 2020-06-15T16:03:22 | 2020-06-16T08:25:54 | 2020-06-16T08:25:52 | This PR updates the cos_e dataset to add v1.0 as requested here #163

@nazneenrajani | mariamabarham | https://github.com/huggingface/datasets/pull/273 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/273",

"html_url": "https://github.com/huggingface/datasets/pull/273",

"diff_url": "https://github.com/huggingface/datasets/pull/273.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/273.patch",

"merged_at": "2020-06-16T08:25:52"... | true |

638,307,313 | 272 | asd | closed | [] | 2020-06-14T08:20:38 | 2020-06-14T09:16:41 | 2020-06-14T09:16:41 | sn696 | https://github.com/huggingface/datasets/pull/272 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/272",

"html_url": "https://github.com/huggingface/datasets/pull/272",

"diff_url": "https://github.com/huggingface/datasets/pull/272.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/272.patch",

"merged_at": null

} | true | |

638,135,754 | 271 | Fix allociné dataset configuration | closed | [] | 2020-06-13T10:12:10 | 2020-06-18T07:41:21 | 2020-06-18T07:41:20 | This is a patch for #244. According to the [live nlp viewer](url), the Allociné dataset must be loaded with :

```python

dataset = load_dataset('allocine', 'allocine')

```

This is redundant, as there is only one "dataset configuration", and should only be:

```python

dataset = load_dataset('allocine')

```

This ... | TheophileBlard | https://github.com/huggingface/datasets/pull/271 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/271",

"html_url": "https://github.com/huggingface/datasets/pull/271",

"diff_url": "https://github.com/huggingface/datasets/pull/271.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/271.patch",

"merged_at": null

} | true |

638,121,617 | 270 | c4 dataset is not viewable in nlpviewer demo | closed | [] | 2020-06-13T08:26:16 | 2020-10-27T15:35:29 | 2020-10-27T15:35:13 | I get the following error when I try to view the c4 dataset in [nlpviewer](https://huggingface.co/nlp/viewer/)

```python

ModuleNotFoundError: No module named 'langdetect'

Traceback:

File "/home/sasha/.local/lib/python3.7/site-packages/streamlit/ScriptRunner.py", line 322, in _run_script

exec(code, module.__d... | rajarsheem | https://github.com/huggingface/datasets/issues/270 | null | false |

638,106,774 | 269 | Error in metric.compute: missing `original_instructions` argument | closed | [] | 2020-06-13T06:26:54 | 2020-06-18T07:41:44 | 2020-06-18T07:41:44 | I'm running into an error using metrics for computation in the latest master as well as version 0.2.1. Here is a minimal example:

```python

import nlp

rte_metric = nlp.load_metric('glue', name="rte")

rte_metric.compute(

[0, 0, 1, 1],

[0, 1, 0, 1],

)

```

```

181 # Read the predictio... | zphang | https://github.com/huggingface/datasets/issues/269 | null | false |

637,848,056 | 268 | add Rotten Tomatoes Movie Review sentences sentiment dataset | closed | [] | 2020-06-12T15:53:59 | 2020-06-18T07:46:24 | 2020-06-18T07:46:23 | Sentence-level movie reviews v1.0 from here: http://www.cs.cornell.edu/people/pabo/movie-review-data/ | jxmorris12 | https://github.com/huggingface/datasets/pull/268 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/268",

"html_url": "https://github.com/huggingface/datasets/pull/268",

"diff_url": "https://github.com/huggingface/datasets/pull/268.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/268.patch",

"merged_at": "2020-06-18T07:46:23"... | true |

637,415,545 | 267 | How can I load/find WMT en-romanian? | closed | [] | 2020-06-12T01:09:37 | 2020-06-19T08:24:19 | 2020-06-19T08:24:19 | I believe it is from `wmt16`

When I run

```python

wmt = nlp.load_dataset('wmt16')

```

I get:

```python

AssertionError: The dataset wmt16 with config cs-en requires manual data.

Please follow the manual download instructions: Some of the wmt configs here, require a manual download.

Please look into wm... | sshleifer | https://github.com/huggingface/datasets/issues/267 | null | false |

637,156,392 | 266 | Add sort, shuffle, test_train_split and select methods | closed | [] | 2020-06-11T16:22:20 | 2020-06-18T16:23:25 | 2020-06-18T16:23:24 | Add a bunch of methods to reorder/split/select rows in a dataset:

- `dataset.select(indices)`: Create a new dataset with rows selected following the list/array of indices (which can have a different size than the dataset and contain duplicated indices, the only constraint is that all the integers in the list must be sm... | thomwolf | https://github.com/huggingface/datasets/pull/266 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/266",

"html_url": "https://github.com/huggingface/datasets/pull/266",

"diff_url": "https://github.com/huggingface/datasets/pull/266.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/266.patch",

"merged_at": "2020-06-18T16:23:23"... | true |

637,139,220 | 265 | Add pyarrow warning colab | closed | [] | 2020-06-11T15:57:51 | 2020-08-02T18:14:36 | 2020-06-12T08:14:16 | When a user installs `nlp` on google colab, then google colab doesn't update pyarrow, and the runtime needs to be restarted to use the updated version of pyarrow.

This is an issue because `nlp` requires the updated version to work correctly.
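The kind of check described here can be sketched as follows. This is only an illustration, not the code from the PR: the helper names `version_tuple` and `check_loaded_version`, the wording of the message, and the version numbers used in the example are all assumptions.

```python
import re


def version_tuple(version):
    """Turn a string such as '0.16.0' into (0, 16, 0), ignoring suffixes like '.dev1'."""
    return tuple(int(n) for n in re.findall(r"\d+", version)[:3])


def check_loaded_version(loaded, required):
    """Raise if the version already loaded in the runtime is older than the required one.

    Both arguments are plain version strings; in a real setting `loaded`
    would come from the imported package (hypothetical usage).
    """
    if version_tuple(loaded) < version_tuple(required):
        raise ImportError(
            f"Found version {loaded}, but at least {required} is required. "
            "If you just installed the package, restart the runtime so the "
            "updated version is picked up."
        )


# Illustrative values only: an up-to-date runtime passes silently.
check_loaded_version("0.17.0", "0.16.0")
```

Raising early, at import time, makes the cause visible to the user instead of surfacing later as an unrelated failure.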

In this PR I added an error that is shown to the user in Google Colab if... | lhoestq | https://github.com/huggingface/datasets/pull/265 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/265",

"html_url": "https://github.com/huggingface/datasets/pull/265",

"diff_url": "https://github.com/huggingface/datasets/pull/265.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/265.patch",

"merged_at": "2020-06-12T08:14:16"... | true |

637,106,170 | 264 | Fix small issues creating dataset | closed | [] | 2020-06-11T15:20:16 | 2020-06-12T08:15:57 | 2020-06-12T08:15:56 | Fix many small issues mentioned in #249:

- don't force to install apache beam for commands

- fix None cache dir when using `dl_manager.download_custom`

- added new extras in `setup.py` named `dev` that contains tests and quality dependencies

- mock dataset sizes when running tests with dummy data

- add a note abou... | lhoestq | https://github.com/huggingface/datasets/pull/264 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/264",

"html_url": "https://github.com/huggingface/datasets/pull/264",

"diff_url": "https://github.com/huggingface/datasets/pull/264.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/264.patch",

"merged_at": "2020-06-12T08:15:56"... | true |

637,028,015 | 263 | [Feature request] Support for external modality for language datasets | closed | [] | 2020-06-11T13:42:18 | 2022-02-10T13:26:35 | 2022-02-10T13:26:35 | # Background

In recent years many researchers have argued that learning meaning from text-only datasets is just like asking a human to "learn to speak by listening to the radio" [[E. Bender and A. Koller, 2020](https://openreview.net/forum?id=GKTvAcb12b), [Y. Bisk et al., 2020](https://arxiv.org/abs/2004.10... | aleSuglia | https://github.com/huggingface/datasets/issues/263 | null | false |

636,702,849 | 262 | Add new dataset ANLI Round 1 | closed | [] | 2020-06-11T04:14:57 | 2020-06-12T22:03:03 | 2020-06-12T22:03:03 | Adding new dataset [ANLI](https://github.com/facebookresearch/anli/).

I'm not familiar with how to add a new dataset. Let me know if there is any issue. I only include round 1 data here. There will be round 2, round 3 and more in the future, with potentially different formats. I think it will be better to separate them. | easonnie | https://github.com/huggingface/datasets/pull/262 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/262",

"html_url": "https://github.com/huggingface/datasets/pull/262",

"diff_url": "https://github.com/huggingface/datasets/pull/262.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/262.patch",

"merged_at": null

} | true |

636,372,380 | 261 | Downloading dataset error with pyarrow.lib.RecordBatch | closed | [] | 2020-06-10T16:04:19 | 2020-06-11T14:35:12 | 2020-06-11T14:35:12 | I am trying to download `sentiment140` and I have the following error

```

/usr/local/lib/python3.6/dist-packages/nlp/load.py in load_dataset(path, name, version, data_dir, data_files, split, cache_dir, download_config, download_mode, ignore_verifications, save_infos, **config_kwargs)

518 download_mode=... | cuent | https://github.com/huggingface/datasets/issues/261 | null | false |

636,261,118 | 260 | Consistency fixes | closed | [] | 2020-06-10T13:44:42 | 2020-06-11T10:34:37 | 2020-06-11T10:34:36 | A few bugs I've found while hacking | julien-c | https://github.com/huggingface/datasets/pull/260 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/260",

"html_url": "https://github.com/huggingface/datasets/pull/260",

"diff_url": "https://github.com/huggingface/datasets/pull/260.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/260.patch",

"merged_at": "2020-06-11T10:34:36"... | true |

636,239,529 | 259 | documentation missing how to split a dataset | closed | [] | 2020-06-10T13:18:13 | 2023-03-14T13:56:07 | 2020-06-18T22:20:24 | I am trying to understand how to split a dataset ( as arrow_dataset).

I know I can do something like this to access a split which is already in the original dataset :

`ds_test = nlp.load_dataset('imdb', split='test')`

But how can I split ds_test into a test and a validation set (without reading the data into m... | fotisj | https://github.com/huggingface/datasets/issues/259 | null | false |

635,859,525 | 258 | Why is the dataset after tokenization far larger than the original one? | closed | [] | 2020-06-10T01:27:07 | 2020-06-10T12:46:34 | 2020-06-10T12:46:34 | I tokenize the wiki dataset with `map` and cache the results.

```

def tokenize_tfm(example):

    example['input_ids'] = hf_fast_tokenizer.convert_tokens_to_ids(hf_fast_tokenizer.tokenize(example['text']))

    return example

wiki = nlp.load_dataset('wikipedia', '20200501.en', cache_dir=cache_dir)['train']

wiki.map(token... | richarddwang | https://github.com/huggingface/datasets/issues/258 | null | false |

635,620,979 | 257 | Tokenizer pickling issue fix not landed in `nlp` yet? | closed | [] | 2020-06-09T17:12:34 | 2020-06-10T21:45:32 | 2020-06-09T17:26:53 | Unless I recreate an arrow_dataset from my loaded nlp dataset myself (which I think does not use the cache by default), I get the following error when applying the map function:

```

dataset = nlp.load_dataset('cos_e')

tokenizer = GPT2TokenizerFast.from_pretrained('gpt2', cache_dir=cache_dir)

for split in datase... | sarahwie | https://github.com/huggingface/datasets/issues/257 | null | false |

635,596,295 | 256 | [Feature request] Add a feature to dataset | closed | [] | 2020-06-09T16:38:12 | 2020-06-09T16:51:42 | 2020-06-09T16:51:42 | Is there a straightforward way to add a field to the arrow_dataset, prior to performing map? | sarahwie | https://github.com/huggingface/datasets/issues/256 | null | false |

635,300,822 | 255 | Add dataset/piaf | closed | [] | 2020-06-09T10:16:01 | 2020-06-12T08:31:27 | 2020-06-12T08:31:27 | Small SQuAD-like French QA dataset [PIAF](https://www.aclweb.org/anthology/2020.lrec-1.673.pdf) | RachelKer | https://github.com/huggingface/datasets/pull/255 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/255",

"html_url": "https://github.com/huggingface/datasets/pull/255",

"diff_url": "https://github.com/huggingface/datasets/pull/255.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/255.patch",

"merged_at": "2020-06-12T08:31:27"... | true |

635,057,568 | 254 | [Feature request] Be able to remove a specific sample of the dataset | closed | [] | 2020-06-09T02:22:13 | 2020-06-09T08:41:38 | 2020-06-09T08:41:38 | As mentioned in #117, it's currently not possible to remove a sample of the dataset.

But it is an important use case: after applying some preprocessing, some samples might be empty, for example. We should be able to remove these samples from the dataset, or at least mark them as `removed` so when iterating the datase... | astariul | https://github.com/huggingface/datasets/issues/254 | null | false |

634,791,939 | 253 | add flue dataset | closed | [] | 2020-06-08T17:11:09 | 2023-09-24T09:46:03 | 2020-07-16T07:50:59 | This PR adds the FLUE dataset as requested in issue #223. @lbourdois made a detailed description in that issue.

| mariamabarham | https://github.com/huggingface/datasets/pull/253 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/253",

"html_url": "https://github.com/huggingface/datasets/pull/253",

"diff_url": "https://github.com/huggingface/datasets/pull/253.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/253.patch",

"merged_at": null

} | true |

634,563,239 | 252 | NonMatchingSplitsSizesError error when reading the IMDB dataset | closed | [] | 2020-06-08T12:26:24 | 2021-08-27T15:20:58 | 2020-06-08T14:01:26 | Hi!

I am trying to load the `imdb` dataset with this line:

`dataset = nlp.load_dataset('imdb', data_dir='/A/PATH', cache_dir='/A/PATH')`

but I am getting the following error:

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/mounts/Users/cisintern/antmarakis/anaconda3/... | antmarakis | https://github.com/huggingface/datasets/issues/252 | null | false |

634,544,977 | 251 | Better access to all dataset information | closed | [] | 2020-06-08T11:56:50 | 2020-06-12T08:13:00 | 2020-06-12T08:12:58 | Moves all the dataset info down one level from `dataset.info.XXX` to `dataset.XXX`

This way it's easier to access `dataset.features['label']` for instance

Also, add the original split instructions used to create the dataset in `dataset.split`

Ex:

```

from nlp import load_dataset

stsb = load_dataset('glue', name=... | thomwolf | https://github.com/huggingface/datasets/pull/251 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/251",

"html_url": "https://github.com/huggingface/datasets/pull/251",

"diff_url": "https://github.com/huggingface/datasets/pull/251.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/251.patch",

"merged_at": "2020-06-12T08:12:58"... | true |

634,416,751 | 250 | Remove checksum download in c4 | closed | [] | 2020-06-08T09:13:00 | 2020-08-25T07:04:56 | 2020-06-08T09:16:59 | There was a line from the original tfds script that was still there and causing issues when loading the c4 script. This one should fix #233 and allow anyone to load the c4 script to generate the dataset | lhoestq | https://github.com/huggingface/datasets/pull/250 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/250",

"html_url": "https://github.com/huggingface/datasets/pull/250",

"diff_url": "https://github.com/huggingface/datasets/pull/250.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/250.patch",

"merged_at": "2020-06-08T09:16:59"... | true |

633,393,443 | 249 | [Dataset created] some critical small issues when I was creating a dataset | closed | [] | 2020-06-07T12:58:54 | 2020-06-12T08:28:51 | 2020-06-12T08:28:51 | Hi, I successfully created a dataset and have opened PR #248.

But I have encountered several problems when I was creating it, and those should be easy to fix.

1. Not found dataset_info.json

This should be fixed by #241; looking forward to it being merged.

2. Forced to install `apache_beam`

If we should install it, then it m... | richarddwang | https://github.com/huggingface/datasets/issues/249 | null | false |

633,390,427 | 248 | add Toronto BooksCorpus | closed | [] | 2020-06-07T12:54:56 | 2020-06-12T08:45:03 | 2020-06-12T08:45:02 | 1. I know there is a branch `toronto_books_corpus`

- After I downloaded it, I found it is all non-English and only has one row.

- It seems that it cites the wrong paper

- According to the paper using it, it is called `BooksCorpus`, not `TorontoBooksCorpus`

2. It uses a text mirror on Google Drive

- `bookscorpu... | richarddwang | https://github.com/huggingface/datasets/pull/248 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/248",

"html_url": "https://github.com/huggingface/datasets/pull/248",

"diff_url": "https://github.com/huggingface/datasets/pull/248.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/248.patch",

"merged_at": "2020-06-12T08:45:02"... | true |

632,380,078 | 247 | Make all dataset downloads deterministic by applying `sorted` to glob and os.listdir | closed | [] | 2020-06-06T11:02:10 | 2020-06-08T09:18:16 | 2020-06-08T09:18:14 | This PR makes all dataset loading deterministic by applying `sorted()` to all `glob.glob` and `os.listdir` statements.
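A minimal sketch of the idea, using only the standard library. The helper names `list_data_files` and `list_dir_entries` are made up for illustration and are not taken from the PR; the point is only that both `glob.glob` and `os.listdir` return entries in an order that depends on the file system, and wrapping them in `sorted()` removes that dependence.

```python
import glob
import os
import tempfile


def list_data_files(directory, pattern="*.txt"):
    """Return the paths matching `pattern` in a stable, sorted order."""
    return sorted(glob.glob(os.path.join(directory, pattern)))


def list_dir_entries(directory):
    """Return the directory entries in a stable, sorted order."""
    return sorted(os.listdir(directory))


# Create a few files in arbitrary order and list them deterministically.
with tempfile.TemporaryDirectory() as tmp:
    for name in ["b.txt", "a.txt", "c.txt"]:
        open(os.path.join(tmp, name), "w").close()
    files = [os.path.basename(p) for p in list_data_files(tmp)]
    entries = list_dir_entries(tmp)

print(files)
```

With a fixed file order, a slice such as the first 1% of a split refers to the same examples on every machine.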

Are there other "non-deterministic" functions apart from `glob.glob()` and `os.listdir()` that you can think of @thomwolf @lhoestq @mariamabarham @jplu ?

**Important**

It does break backward c... | patrickvonplaten | https://github.com/huggingface/datasets/pull/247 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/247",

"html_url": "https://github.com/huggingface/datasets/pull/247",

"diff_url": "https://github.com/huggingface/datasets/pull/247.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/247.patch",

"merged_at": "2020-06-08T09:18:14"... | true |

632,380,054 | 246 | What is the best way to cache a dataset? | closed | [] | 2020-06-06T11:02:07 | 2020-07-09T09:15:07 | 2020-07-09T09:15:07 | For example, if I want to use streamlit with an nlp dataset:

```

@st.cache

def load_data():

    return nlp.load_dataset('squad')

```

This code raises the error "uncachable object"

Right now I just worked around it with a constant for my specific case:

```

@st.cache(hash_funcs={pyarrow.lib.Buffer: lambda b: 0})

```... | Mistobaan | https://github.com/huggingface/datasets/issues/246 | null | false |

631,985,108 | 245 | SST-2 test labels are all -1 | closed | [] | 2020-06-05T21:41:42 | 2021-12-08T00:47:32 | 2020-06-06T16:56:41 | I'm trying to test a model on the SST-2 task, but all the labels I see in the test set are -1.

```

>>> import nlp

>>> glue = nlp.load_dataset('glue', 'sst2')

>>> glue

{'train': Dataset(schema: {'sentence': 'string', 'label': 'int64', 'idx': 'int32'}, num_rows: 67349), 'validation': Dataset(schema: {'sentence': 'st... | jxmorris12 | https://github.com/huggingface/datasets/issues/245 | null | false |

631,869,155 | 244 | Add Allociné Dataset | closed | [] | 2020-06-05T19:19:26 | 2020-06-11T07:47:26 | 2020-06-11T07:47:26 | This is a French binary sentiment classification dataset, which was used to train this model: https://huggingface.co/tblard/tf-allocine.

Basically, it's a French "IMDB" dataset, with more reviews.

More info on [this repo](https://github.com/TheophileBlard/french-sentiment-analysis-with-bert). | TheophileBlard | https://github.com/huggingface/datasets/pull/244 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/244",

"html_url": "https://github.com/huggingface/datasets/pull/244",

"diff_url": "https://github.com/huggingface/datasets/pull/244.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/244.patch",

"merged_at": "2020-06-11T07:47:26"... | true |

631,735,848 | 243 | Specify utf-8 encoding for GLUE | closed | [] | 2020-06-05T16:33:00 | 2020-06-17T21:16:06 | 2020-06-08T08:42:01 | #242

This makes the GLUE-MNLI dataset readable on my machine, not sure if it's a Windows-only bug. | patpizio | https://github.com/huggingface/datasets/pull/243 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/243",

"html_url": "https://github.com/huggingface/datasets/pull/243",

"diff_url": "https://github.com/huggingface/datasets/pull/243.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/243.patch",

"merged_at": "2020-06-08T08:42:01"... | true |

631,733,683 | 242 | UnicodeDecodeError when downloading GLUE-MNLI | closed | [] | 2020-06-05T16:30:01 | 2020-06-09T16:06:47 | 2020-06-08T08:45:03 | When I run

```python

dataset = nlp.load_dataset('glue', 'mnli')

```

I get an encoding error (could it be because I'm using Windows?) :

```python

# Lots of error log lines later...

~\Miniconda3\envs\nlp\lib\site-packages\tqdm\std.py in __iter__(self)

1128 try:

-> 1129 for obj in iterable:... | patpizio | https://github.com/huggingface/datasets/issues/242 | null | false |

631,703,079 | 241 | Fix empty cache dir | closed | [] | 2020-06-05T15:45:22 | 2020-06-08T08:35:33 | 2020-06-08T08:35:31 | If the cache dir of a dataset is empty, the dataset fails to load and throws a FileNotFoundError. We could end up with an empty cache dir because there was a line in the code that created the cache dir without using a temp dir. Using a temp dir is useful as it gets renamed to the real cache dir only if the full process is... | lhoestq | https://github.com/huggingface/datasets/pull/241 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/241",

"html_url": "https://github.com/huggingface/datasets/pull/241",

"diff_url": "https://github.com/huggingface/datasets/pull/241.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/241.patch",

"merged_at": "2020-06-08T08:35:31"... | true |

631,434,677 | 240 | Deterministic dataset loading | closed | [] | 2020-06-05T09:03:26 | 2020-06-08T09:18:14 | 2020-06-08T09:18:14 | When calling:

```python

import nlp

dataset = nlp.load_dataset("trivia_qa", split="validation[:1%]")

```

the resulting dataset is not deterministic over different google colabs.

After talking to @thomwolf, I suspect the reason to be the use of `glob.glob` in line:

https://github.com/huggingface/nlp/blob/2e0... | patrickvonplaten | https://github.com/huggingface/datasets/issues/240 | null | false |

631,340,440 | 239 | [Creating new dataset] Not found dataset_info.json | closed | [] | 2020-06-05T06:15:04 | 2020-06-07T13:01:04 | 2020-06-07T13:01:04 | Hi, I am trying to create Toronto Book Corpus. #131

I ran

`~/nlp % python nlp-cli test datasets/bookcorpus --save_infos --all_configs`

but this doesn't create `dataset_info.json` and still tries to use it

```

INFO:nlp.load:Checking datasets/bookcorpus/bookcorpus.py for additional imports.

INFO:filelock:Lock 1397953257... | richarddwang | https://github.com/huggingface/datasets/issues/239 | null | false |

631,260,143 | 238 | [Metric] Bertscore : Warning : Empty candidate sentence; Setting recall to be 0. | closed | [] | 2020-06-05T02:14:47 | 2020-06-29T17:10:19 | 2020-06-29T17:10:19 | When running BERT-Score, I get this warning:

> Warning: Empty candidate sentence; Setting recall to be 0.

Code :

```

import nlp

metric = nlp.load_metric("bertscore")

scores = metric.compute(["swag", "swags"], ["swags", "totally something different"], lang="en", device=0)

```

---

**What am I do... | astariul | https://github.com/huggingface/datasets/issues/238 | null | false |

631,199,940 | 237 | Can't download MultiNLI | closed | [] | 2020-06-04T23:05:21 | 2020-06-06T10:51:34 | 2020-06-06T10:51:34 | When I try to download MultiNLI with

```python

dataset = load_dataset('multi_nli')

```

I get this long error:

```python

---------------------------------------------------------------------------

OSError Traceback (most recent call last)

<ipython-input-13-3b11f6be4cb9> in <m... | patpizio | https://github.com/huggingface/datasets/issues/237 | null | false |

631,099,875 | 236 | CompGuessWhat?! dataset | closed | [] | 2020-06-04T19:45:50 | 2020-06-11T09:43:42 | 2020-06-11T07:45:21 | Hello,

Thanks for the amazing library that you put together. I'm Alessandro Suglia, the first author of CompGuessWhat?!, a recently released dataset for grounded language learning accepted to ACL 2020 ([https://compguesswhat.github.io](https://compguesswhat.github.io)).

This pull-request adds the CompGuessWhat?! ... | aleSuglia | https://github.com/huggingface/datasets/pull/236 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/236",

"html_url": "https://github.com/huggingface/datasets/pull/236",

"diff_url": "https://github.com/huggingface/datasets/pull/236.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/236.patch",

"merged_at": "2020-06-11T07:45:21"... | true |

630,952,297 | 235 | Add experimental datasets | closed | [] | 2020-06-04T15:54:56 | 2020-06-12T15:38:55 | 2020-06-12T15:38:55 | ## Adding an *experimental datasets* folder

After using the 🤗nlp library for some time, I find that while it makes it super easy to create new memory-mapped datasets with lots of cool utilities, a lot of what I want to do doesn't work well with the current `MockDownloader` based testing paradigm, making it hard to ... | yjernite | https://github.com/huggingface/datasets/pull/235 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/235",

"html_url": "https://github.com/huggingface/datasets/pull/235",

"diff_url": "https://github.com/huggingface/datasets/pull/235.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/235.patch",

"merged_at": "2020-06-12T15:38:55"... | true |

630,534,427 | 234 | Huggingface NLP, Uploading custom dataset | closed | [] | 2020-06-04T05:59:06 | 2020-07-06T09:33:26 | 2020-07-06T09:33:26 | Hello,

Does anyone know how we can call our custom dataset using the nlp.load command? Let's say that I have a dataset based on the same format as that of squad-v1.1, how am I supposed to load it using huggingface nlp.

Thank you! | Nouman97 | https://github.com/huggingface/datasets/issues/234 | null | false |

630,432,132 | 233 | Fail to download c4 english corpus | closed | [] | 2020-06-04T01:06:38 | 2021-01-08T07:17:32 | 2020-06-08T09:16:59 | I ran the following code to download the C4 English corpus.

```

dataset = nlp.load_dataset('c4', 'en', beam_runner='DirectRunner', data_dir='/mypath')

```

and it failed as follows:

```

Downloading and preparing dataset c4/en (download: Unknown size, generated: Unknown size, total: Unknown size) to /home/adam/.... | donggyukimc | https://github.com/huggingface/datasets/issues/233 | null | false |

630,029,568 | 232 | Nlp cli fix endpoints | closed | [] | 2020-06-03T14:10:39 | 2020-06-08T09:02:58 | 2020-06-08T09:02:57 | With this PR users will be able to upload their own datasets and metrics.

As mentioned in #181, I had to use the new endpoints and revert the use of dataclasses (just in case we have changes in the API in the future).

We now distinguish commands for datasets and commands for metrics:

```bash

nlp-cli upload_data... | lhoestq | https://github.com/huggingface/datasets/pull/232 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/232",

"html_url": "https://github.com/huggingface/datasets/pull/232",

"diff_url": "https://github.com/huggingface/datasets/pull/232.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/232.patch",

"merged_at": "2020-06-08T09:02:57"... | true |

629,988,694 | 231 | Add .download to MockDownloadManager | closed | [] | 2020-06-03T13:20:00 | 2020-06-03T14:25:56 | 2020-06-03T14:25:55 | One method from the DownloadManager was missing and some users couldn't run the tests because of that.

@yjernite | lhoestq | https://github.com/huggingface/datasets/pull/231 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/231",

"html_url": "https://github.com/huggingface/datasets/pull/231",

"diff_url": "https://github.com/huggingface/datasets/pull/231.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/231.patch",

"merged_at": "2020-06-03T14:25:54"... | true |

629,983,684 | 230 | Don't force to install apache beam for wikipedia dataset | closed | [] | 2020-06-03T13:13:07 | 2020-06-03T14:34:09 | 2020-06-03T14:34:07 | As pointed out in #227, we shouldn't force users to install apache beam if the processed dataset can be downloaded. I moved the imports of some datasets to avoid this problem | lhoestq | https://github.com/huggingface/datasets/pull/230 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/230",

"html_url": "https://github.com/huggingface/datasets/pull/230",

"diff_url": "https://github.com/huggingface/datasets/pull/230.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/230.patch",

"merged_at": "2020-06-03T14:34:07"... | true |

629,956,490 | 229 | Rename dataset_infos.json to dataset_info.json | closed | [] | 2020-06-03T12:31:44 | 2020-06-03T12:52:54 | 2020-06-03T12:48:33 | The file required for viewing in the live nlp viewer is named dataset_info.json | aswin-giridhar | https://github.com/huggingface/datasets/pull/229 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/229",

"html_url": "https://github.com/huggingface/datasets/pull/229",

"diff_url": "https://github.com/huggingface/datasets/pull/229.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/229.patch",

"merged_at": null

} | true |

629,952,402 | 228 | Not able to access the XNLI dataset | closed | [] | 2020-06-03T12:25:14 | 2020-07-17T17:44:22 | 2020-07-17T17:44:22 | When I try to access the XNLI dataset, the plain_text option gets selected automatically and then I get the following error.

```

FileNotFoundError: [Errno 2] No such file or directory: '/home/sasha/.cache/huggingface/datasets/xnli/plain_text/1.0.0/dataset_info.json'

Traceback:

File "/... | aswin-giridhar | https://github.com/huggingface/datasets/issues/228 | null | false |

629,845,704 | 227 | Should we still have to force to install apache_beam to download wikipedia ? | closed | [] | 2020-06-03T09:33:20 | 2020-06-03T15:25:41 | 2020-06-03T15:25:41 | Hi, first, thanks to @lhoestq's revolutionary work, I successfully downloaded the processed wikipedia dataset according to the doc. 😍😍😍

But on the first try, it told me to install `apache_beam` and `mwparserfromhell`, which I thought wouldn't be needed according to #204; this confused me at the time.

Maybe we s... | richarddwang | https://github.com/huggingface/datasets/issues/227 | null | false |

628,344,520 | 226 | add BlendedSkillTalk dataset | closed | [] | 2020-06-01T10:54:45 | 2020-06-03T14:37:23 | 2020-06-03T14:37:22 | This PR adds the BlendedSkillTalk dataset, which is used to fine-tune the blenderbot. | mariamabarham | https://github.com/huggingface/datasets/pull/226 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/226",

"html_url": "https://github.com/huggingface/datasets/pull/226",

"diff_url": "https://github.com/huggingface/datasets/pull/226.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/226.patch",

"merged_at": "2020-06-03T14:37:22"... | true |

628,083,366 | 225 | [ROUGE] Different scores with `files2rouge` | closed | [] | 2020-06-01T00:50:36 | 2020-06-03T15:27:18 | 2020-06-03T15:27:18 | It seems that the ROUGE score of `nlp` is lower than the one of `files2rouge`.

Here is a self-contained notebook to reproduce both scores : https://colab.research.google.com/drive/14EyAXValB6UzKY9x4rs_T3pyL7alpw_F?usp=sharing

---

`nlp` : (Only mid F-scores)

>rouge1 0.33508031962733364

rouge2 0.145743337761... | astariul | https://github.com/huggingface/datasets/issues/225 | null | false |

627,791,693 | 224 | [Feature Request/Help] BLEURT model -> PyTorch | closed | [] | 2020-05-30T18:30:40 | 2023-08-26T17:38:48 | 2021-01-04T09:53:32 | Hi, I am interested in porting google research's new BLEURT learned metric to PyTorch (because I wish to do something experimental with language generation and backpropping through BLEURT). I noticed that you guys don't have it yet so I am partly just asking if you plan to add it (@thomwolf said you want to do so on Tw... | adamwlev | https://github.com/huggingface/datasets/issues/224 | null | false |

627,683,386 | 223 | [Feature request] Add FLUE dataset | closed | [] | 2020-05-30T08:52:15 | 2020-12-03T13:39:33 | 2020-12-03T13:39:33 | Hi,

I think it would be interesting to add the FLUE dataset for francophones or anyone wishing to work on French.

In other requests, I read that you are already working on some datasets, and I was wondering if FLUE was planned.

If it is not the case, I can provide each of the cleaned FLUE datasets (in the form... | lbourdois | https://github.com/huggingface/datasets/issues/223 | null | false |

627,586,690 | 222 | Colab Notebook breaks when downloading the squad dataset | closed | [] | 2020-05-29T22:55:59 | 2020-06-04T00:21:05 | 2020-06-04T00:21:05 | When I run the notebook in Colab

https://colab.research.google.com/github/huggingface/nlp/blob/master/notebooks/Overview.ipynb

it breaks when running this cell:

| carlos-aguayo | https://github.com/huggingface/datasets/issues/222 | null | false |

627,300,648 | 221 | Fix tests/test_dataset_common.py | closed | [] | 2020-05-29T14:12:15 | 2020-06-01T12:20:42 | 2020-05-29T15:02:23 | When I run the command `RUN_SLOW=1 pytest tests/test_dataset_common.py::LocalDatasetTest::test_load_real_dataset_arcd` while working on #220, I get the error `unexpected keyword argument 'download_and_prepare_kwargs'` at the level of `load_dataset`. Indeed, this [function](https://github.com/huggingface/nlp/blob/ma... | tayciryahmed | https://github.com/huggingface/datasets/pull/221 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/221",

"html_url": "https://github.com/huggingface/datasets/pull/221",

"diff_url": "https://github.com/huggingface/datasets/pull/221.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/221.patch",

"merged_at": "2020-05-29T15:02:23"... | true |

627,280,683 | 220 | dataset_arcd | closed | [] | 2020-05-29T13:46:50 | 2020-05-29T14:58:40 | 2020-05-29T14:57:21 | Added Arabic Reading Comprehension Dataset (ARCD): https://arxiv.org/abs/1906.05394 | tayciryahmed | https://github.com/huggingface/datasets/pull/220 | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/220",

"html_url": "https://github.com/huggingface/datasets/pull/220",

"diff_url": "https://github.com/huggingface/datasets/pull/220.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/220.patch",

"merged_at": "2020-05-29T14:57:21"... | true |
