repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

recommenders-team/recommenders | data-science | 1,833 | [FEATURE] AzureML SDK v2 support | ### Description

<!--- Describe your expected feature in detail -->

AzureML SDK v2 became generally available (GA) at Ignite 2022.

The code in [the examples](https://github.com/microsoft/recommenders/tree/main/examples) is based on SDK v1.

Are there any plans to update the examples to SDK v2?

### Expected behavior with the suggested feature

<!--- For example: -->

<!--- *Adding algorithm xxx will help people understand more about xxx use case scenarios. -->

### Other Comments

| closed | 2022-10-25T02:20:07Z | 2024-05-06T14:49:21Z | https://github.com/recommenders-team/recommenders/issues/1833 | [

"enhancement"

] | shohei1029 | 2 |

unionai-oss/pandera | pandas | 871 | Dask DataFrame filter fails | **Describe the bug**

Dask Dataframes validated with `strict='filter'` do not drop extraneous columns.

- [x] I have checked that this issue has not already been reported.

- [x] I have confirmed this bug exists on the latest version of pandera.

#### Code Sample

```python

import pandas as pd

import pandera as pa

import dask.dataframe as dd

df1 = pd.DataFrame([[1, 1], [3, 2], [5, 3]], columns=["col1", "col2"])

df2 = dd.from_pandas(df1, npartitions=1)

my_schema = pa.DataFrameSchema(

{

"col2": pa.Column(int),

},

strict="filter",

)

new_df1 = my_schema(df1)

new_df2 = my_schema(df2)

```

#### Expected behavior

DataFrames should be filtered such that `col2` remains and `col1` is dropped. The validated pandas DataFrame `new_df1` behaves as expected. However, the resulting Dask DataFrame `new_df2` retains both columns.
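Until the filter works for Dask, one possible workaround is to select the schema's columns by hand after validation. The helper below is a minimal sketch, not part of pandera: `keep_schema_columns` is a hypothetical name, and it only assumes that the frame exposes `.columns` and supports selection with a list of column names, which both pandas and Dask DataFrames do.

```python
def keep_schema_columns(df, schema_columns):
    """Return df restricted to the columns named in schema_columns.

    Works on any frame-like object with a `.columns` attribute and
    list-based column selection (pandas and Dask DataFrames both qualify).
    The frame's own column order is preserved.
    """
    wanted = set(schema_columns)
    kept = [name for name in df.columns if name in wanted]
    return df[kept]


# Hypothetical usage with the objects from the code sample above
# (my_schema.columns is the dict of column names passed to DataFrameSchema):
# new_df2 = keep_schema_columns(my_schema(df2), my_schema.columns)
```

This only drops the extra columns; it does not change how pandera validates the Dask DataFrame.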

#### Additional context

Apologies if this falls under the wider net of #119. I am interpreting that issue as pertaining to more complex memory management problems. Thanks for your help. | open | 2022-06-01T14:35:04Z | 2022-06-01T14:35:43Z | https://github.com/unionai-oss/pandera/issues/871 | [

"bug"

] | gg314 | 0 |

Kav-K/GPTDiscord | asyncio | 368 | Unknown interaction errors | Unknown interaction errors sometimes occur when a response in /gpt converse takes too long to return, or when the user deletes the original message before the bot responds to it. | closed | 2023-10-31T01:18:43Z | 2023-11-12T19:40:57Z | https://github.com/Kav-K/GPTDiscord/issues/368 | [

"bug",

"help wanted",

"good first issue",

"help-wanted-important"

] | Kav-K | 11 |

Hironsan/BossSensor | computer-vision | 6 | how many pictures are used to train | how many pictures are used to train? I use your code. Boss picture number: 300 other picture number: 300*10 ; (10 persons , everyone have 300 ). the model give accuracy 0.93. I think it is too low. how to improve the accuracy? | open | 2017-01-09T08:50:22Z | 2017-01-10T13:15:31Z | https://github.com/Hironsan/BossSensor/issues/6 | [] | seeyourcell | 2 |

ultrafunkamsterdam/undetected-chromedriver | automation | 1,274 | Cannot connect to chrome at 127.0.0.1:33773 when deployed on Render | Hello,

I am trying to run a python script for headless browsing deployed on Render but I have this error message:

```

May 19 03:15:54 PM Incoming POST request for /api/strategies/collaborative/

May 19 03:15:56 PM stderr: 2023/05/19 13:15:56 INFO ====== WebDriver manager ======

May 19 03:15:56 PM

May 19 03:15:57 PM stderr: 2023/05/19 13:15:57 INFO There is no [linux64] chromedriver "latest" for browser google-chrome "113.0.5672" in cache

May 19 03:15:57 PM

May 19 03:15:57 PM stderr: 2023/05/19 13:15:57 INFO Get LATEST chromedriver version for google-chrome

May 19 03:15:57 PM

May 19 03:15:57 PM stderr: 2023/05/19 13:15:57 INFO About to download new driver from https://chromedriver.storage.googleapis.com/113.0.5672.63/chromedriver_linux64.zip

May 19 03:15:57 PM

May 19 03:15:57 PM stderr:

[WDM] - Downloading: 0%| | 0.00/6.98M [00:00<?, ?B/s]

May 19 03:15:58 PM stderr:

[WDM] - Downloading: 19%|█▉ | 1.35M/6.98M [00:00<00:00, 7.96MB/s]

May 19 03:15:58 PM stderr:

[WDM] - Downloading: 39%|███▉ | 2.73M/6.98M [00:00<00:00, 10.9MB/s]

May 19 03:15:58 PM stderr:

[WDM] - Downloading: 58%|█████▊ | 4.02M/6.98M [00:00<00:00, 11.9MB/s]

May 19 03:15:58 PM stderr:

[WDM] - Downloading: 75%|███████▍ | 5.23M/6.98M [00:00<00:00, 12.1MB/s]

May 19 03:15:58 PM stderr:

[WDM] - Downloading: 92%|█████████▏| 6.42M/6.98M [00:00<00:00, 12.2MB/s]

May 19 03:15:58 PM stderr:

[WDM] - Downloading: 100%|██████████| 6.98M/6.98M [00:00<00:00, 12.5MB/s]

May 19 03:15:59 PM stderr:

May 19 03:15:59 PM 2023/05/19 13:15:59 INFO Driver has been saved in cache [/opt/render/.wdm/drivers/chromedriver/linux64/113.0.5672.63]

May 19 03:15:59 PM

May 19 03:15:59 PM stderr: 2023/05/19 13:15:59 INFO Loading undetected Chrome

May 19 03:15:59 PM

May 19 03:17:02 PM stderr: Traceback (most recent call last):

May 19 03:17:02 PM File "scripts/python.py", line 26, in <module>

May 19 03:17:02 PM main()

May 19 03:17:02 PM File "scripts/python.py", line 17, in main

May 19 03:17:02 PM python = Python_Client(login, password)

May 19 03:17:02 PM File "/opt/render/project/src/server/scripts/python_utils.py", line 93, in __init__

May 19 03:17:02 PM version_main=112

May 19 03:17:02 PM File "/opt/render/project/src/.venv/lib/python3.7/site-packages/undetected_chromedriver/__init__.py", line 461, in __init__

May 19 03:17:02 PM service=service, # needed or the service will be re-created

May 19 03:17:02 PM File "/opt/render/project/src/.venv/lib/python3.7/site-packages/selenium/webdriver/chrome/webdriver.py", line 93, in __init__

May 19 03:17:02 PM keep_alive,

May 19 03:17:02 PM File "/opt/render/project/src/.venv/lib/python3.7/site-packages/selenium/webdriver/chromium/webdriver.py", line 112, in __init__

May 19 03:17:02 PM options=options,

May 19 03:17:02 PM File "/opt/render/project/src/.venv/lib/python3.7/site-packages/selenium/webdriver/remote/webdriver.py", line 286, in __init__

May 19 03:17:02 PM self.start_session(capabilities, browser_profile)

May 19 03:17:02 PM File "/opt/render/project/src/.venv/lib/python3.7/site-packages/undetected_chromedriver/__init__.py", line 717, in start_session

May 19 03:17:02 PM capabilities, browser_profile

May 19 03:17:02 PM File "/opt/render/project/src/.venv/lib/python3.7/site-packages/selenium/webdriver/remote/webdriver.py", line 378, in start_session

May 19 03:17:02 PM response = self.execute(Command.NEW_SESSION, parameters)

May 19 03:17:02 PM File "/opt/render/project/src/.venv/lib/python3.7/site-packages/selenium/webdriver/remote/webdriver.py", line 440, in execute

May 19 03:17:02 PM self.error_handler.check_response(response)

May 19 03:17:02 PM File "/opt/render/project/src/.venv/lib/python3.7/site-packages/selenium/webdriver/remote/errorhandler.py", line 245, in check_response

May 19 03:17:02 PM raise exception_class(message, screen, stacktrace)

May 19 03:17:02 PM selenium.common.exceptions.WebDriverException: Message: unknown error: cannot connect to chrome at 127.0.0.1:33773

May 19 03:17:02 PM from chrome not reachable

May 19 03:17:02 PM Stacktrace:

May 19 03:17:02 PM #0 0x55d383f67fe3 <unknown>

May 19 03:17:02 PM #1 0x55d383ca6bc1 <unknown>

May 19 03:17:02 PM #2 0x55d383c94ff6 <unknown>

May 19 03:17:02 PM #3 0x55d383cd3e00 <unknown>

May 19 03:17:02 PM #4 0x55d383ccb352 <unknown>

May 19 03:17:02 PM #5 0x55d383d0daf7 <unknown>

May 19 03:17:02 PM #6 0x55d383d0d11f <unknown>

May 19 03:17:02 PM #7 0x55d383d04693 <unknown>

May 19 03:17:02 PM #8 0x55d383cd703a <unknown>

May 19 03:17:02 PM #9 0x55d383cd817e <unknown>

May 19 03:17:02 PM #10 0x55d383f29dbd <unknown>

May 19 03:17:02 PM #11 0x55d383f2dc6c <unknown>

May 19 03:17:02 PM #12 0x55d383f374b0 <unknown>

May 19 03:17:02 PM #13 0x55d383f2ed63 <unknown>

May 19 03:17:02 PM #14 0x55d383f01c35 <unknown>

May 19 03:17:02 PM #15 0x55d383f52138 <unknown>

May 19 03:17:02 PM #16 0x55d383f522c7 <unknown>

May 19 03:17:02 PM #17 0x55d383f60093 <unknown>

May 19 03:17:02 PM #18 0x7f0685c35fa3 start_thread

May 19 03:17:02 PM

May 19 03:17:02 PM

May 19 03:17:03 PM child process exited with code 1

May 19 03:17:03 PM Script output:

May 19 03:17:03 PM JSON string is empty

May 19 03:17:03 PM Error: TypeError: Cannot read properties of undefined (reading 'map')

```

Here is my code :

```python
def __init__(
    self,
    username: str,
    password: str,
    headless: bool = True,
    cold_start: bool = False,
    verbose: bool = False
):
    if verbose:
        logging.getLogger().setLevel(logging.INFO)
        logging.info('Verbose mode active')
    options = uc.ChromeOptions()
    # options.add_argument('--incognito')
    options.binary_location = '/opt/render/.local/share/undetected_chromedriver/undetected_chromedriver'
    service = Service(ChromeDriverManager().install())
    if headless:
        options.add_argument('--headless')
    logging.info('Loading undetected Chrome')
    self.browser = uc.Chrome(
        # use_subprocess=True,
        service=service,
        options=options,
        headless=headless,
        version_main=112
    )
    self.browser.set_page_load_timeout(30)
    logging.info('Opening service')
    # Retry mechanism for opening the ChatGPT page
    for i in range(3):  # Try 3 times
        try:
            self.browser.get('https://xxxxxxxx')
            logging.info('Successfully opened service')
```

It works locally

What is wrong? | closed | 2023-05-19T13:45:05Z | 2023-05-19T17:53:57Z | https://github.com/ultrafunkamsterdam/undetected-chromedriver/issues/1274 | [] | Louvivien | 0 |

tensorflow/tensor2tensor | deep-learning | 1,809 | Character level language model does not work | ### Description

I try to train a character level language model but only get strange tokens as output. The model trains and loss decreases.

Am I just trying to infer from the model incorrectly, or is there something else going on?

...

### Environment information

```

OS: Debian GNU/Linux 9.11 (stretch) (GNU/Linux 4.9.0-11-amd64 x86_64\n)

$ pip freeze | grep tensor

mesh-tensorflow==0.1.13

tensor2tensor==1.15.5

tensorboard==1.15.0

tensorflow-datasets==1.2.0

tensorflow-estimator==1.15.1

tensorflow-gan==2.0.0

tensorflow-gpu==1.15.2

tensorflow-hub==0.6.0

tensorflow-io==0.8.1

tensorflow-metadata==0.21.1

tensorflow-probability==0.7.0

tensorflow-serving-api-gpu==1.14.0

$ python3 -V

Python 3.5.3

```

### For bugs: reproduction and error logs

# Steps to reproduce:

Train a character level model by running the following:

```

sudo pip3 install tensor2tensor

PROBLEM=languagemodel_ptb10k

MODEL=transformer

HPARAMS=transformer_small

DATA_DIR=$HOME/t2t_data

TMP_DIR=/tmp/t2t_datagen

TRAIN_DIR=$HOME/t2t_train/$PROBLEM/$MODEL-$HPARAMS

mkdir -p $DATA_DIR $TMP_DIR $TRAIN_DIR

t2t-datagen --data_dir=$DATA_DIR --tmp_dir=$TMP_DIR --problem=$PROBLEM

t2t-trainer --data_dir=$DATA_DIR \

--problem=$PROBLEM \

--model=$MODEL \

--hparams_set=$HPARAMS \

--output_dir=$TRAIN_DIR

```

It trains and the loss decreases.

After a while, try to make an inference by running the following:

```

DECODE_FILE=$DATA_DIR/decode_this.txt

echo "My name is Joh" >> $DECODE_FILE

echo "The last character of this senten" >> $DECODE_FILE

echo "th" >> $DECODE_FILE

BEAM_SIZE=4

ALPHA=0.6

t2t-decoder \

--data_dir=$DATA_DIR \

--problem=$PROBLEM \

--model=$MODEL \

--hparams_set=$HPARAMS \

--output_dir=$TRAIN_DIR \

--decode_hparams="beam_size=$BEAM_SIZE,alpha=$ALPHA" \

--decode_from_file=$DECODE_FILE \

--decode_to_file=output.txt

cat output.txt

```

I expect to at least see "e" after "th", but instead I get:

```

����������������������������������������������������������������������������������������������������

����������������������������������������������������������������������������������������������������

����������������������������������������������������������������������������������������������������

```

Please let me know what other information I can provide.

I'm just trying to get reasonable output from a character-level language model. I might be doing the inference step wrong. I appreciate any help, thanks. | open | 2020-05-02T03:05:00Z | 2020-05-02T03:09:37Z | https://github.com/tensorflow/tensor2tensor/issues/1809 | [] | KosayJabre | 0 |

ScrapeGraphAI/Scrapegraph-ai | machine-learning | 714 | issue with chunking? Getting error Token indices sequence length is longer than specified maximum token. | I am getting an error: `Token indices sequence length is longer than the specified maximum sequence length for this model (4504 > 1024). Running this sequence through the model will result in indexing errors`

```python

from scrapegraphai.graphs import SmartScraperGraph

from scrapegraphai.utils import prettify_exec_info

graph_config = {

"llm": {

"model": "ollama/llama3.1:8b-instruct-q8_0",

"temperature": 1,

"format": "json", # Ollama needs the format to be specified explicitly

"model_tokens": 2000, # depending on the model set context length

"base_url": "http://localhost:11434", # set ollama URL of the local host (YOU CAN CHANGE IT, if you have a different endpoint

},

"embeddings": {

"model": "ollama/nomic-embed-text",

"temperature": 0,

"base_url": "http://localhost:11434", # set ollama URL

}

}

# ************************************************

# Create the SmartScraperGraph instance and run it

# ************************************************

smart_scraper_graph = SmartScraperGraph(

prompt="List all articles also provide article url, upvotes and author name",

# also accepts a string with the already downloaded HTML code

source="https://news.ycombinator.com/",

config=graph_config

)

result = smart_scraper_graph.run()

print(result)

graph_exec_info = smart_scraper_graph.get_execution_info()

print(prettify_exec_info(graph_exec_info))

```

```

C:\Users\djds4\llm-scraper\src>python -m test

Model ollama/llama3.1:8b-instruct-q8_0 not found,

using default token size (8192)

None of PyTorch, TensorFlow >= 2.0, or Flax have been found. Models won't be available and only tokenizers, configuration and file/data utilities can be used.

C:\Users\djds4\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.12_qbz5n2kfra8p0\LocalCache\local-packages\Python312\site-packages\transformers\tokenization_utils_base.py:1601: FutureWarning: `clean_up_tokenization_spaces` was not set. It will be set to `True` by default. This behavior will be depracted in transformers v4.45, and will be then set to `False` by default. For more details check this issue: https://github.com/huggingface/transformers/issues/31884

warnings.warn(

Token indices sequence length is longer than the specified maximum sequence length for this model (4504 > 1024). Running this sequence through the model will result in indexing errors

{'24': {'title': 'Ask HN: Any good essays/books/advice about software sales?', 'user': 'nikasakana', 'points': 148, 'comments': 59}, '25': {'title': 'The Case of the Missing Increment', 'url': 'https://www.computerenhance.com/p/the-case-of-the-missing-increment', 'points': 17, 'comments': 3}, '26': {'title': 'Show HN: A macOS app to prevent sound quality degradation on AirPods', 'url': 'https://apps.apple.com/us/app/crystalclear-sound/id6695723746?mt=12', 'points': 165, 'comments': 216}, '27': {'title': 'Do AI companies work?', 'url': 'https://benn.substack.com/p/do-ai-companies-work', 'points': 326, 'comments': 334}, '28': {'title': 'Keep Track: 3D Satellite Toolkit', 'url': 'https://app.keeptrack.space', 'points': 162, 'comments': 36}, '29': {'title': "Fix photogrammetry bridges so that they are not 'solid' underneath (2020)", 'url': 'https://forums.flightsimulator.com/t/fix-photogrammetry-bridges-so-that-they-are-not-solid-underneath/326917', 'points': 50, 'comments': 14}, '30': {'title': 'GnuCash 5.9', 'url': 'https://www.gnucash.org/news.phtml', 'points': 223, 'comments': 115}}

node_name total_tokens prompt_tokens completion_tokens successful_requests total_cost_USD exec_time

0 Fetch 0 0 0 0 0.0 1.782655

1 ParseNode 0 0 0 0 0.0 0.903249

2 GenerateAnswer 1386 1026 360 1 0.0 149.856989

3 TOTAL RESULT 1386 1026 360 1 0.0 152.542893

```

- I am not sure why I get the message `Model ollama/llama3.1:8b-instruct-q8_0 not found,` when the response seems to be served from that model.

- The articles returned are from the end of the page, so there is definitely some kind of chunking going on.

| closed | 2024-10-01T08:17:17Z | 2025-01-04T09:57:35Z | https://github.com/ScrapeGraphAI/Scrapegraph-ai/issues/714 | [] | djds4rce | 9 |

pandas-dev/pandas | pandas | 60,410 | DOC: incorrect formula for half-life of exponentially weighted window | ### Pandas version checks

- [X] I have checked that the issue still exists on the latest versions of the docs on `main` [here](https://pandas.pydata.org/docs/dev/)

### Location of the documentation

https://pandas.pydata.org/docs/dev/user_guide/window.html#exponentially-weighted-window

### Documentation problem

In the documentation, the formula for alpha as a function of half-life reads 1-exp^(log(0.5)/h).

The exponential term should be written as either exp(log(0.5)/h) or e^(log(0.5)/h), but not exp^(log(0.5)/h).
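As a quick numerical check of the intended formula, alpha = 1 - exp(log(0.5)/h), the sketch below computes alpha with the standard library and confirms that a weight decays to one half after h periods. The function name `alpha_from_halflife` is made up for illustration and is not a pandas API.

```python
import math


def alpha_from_halflife(halflife):
    """Smoothing factor alpha for a given half-life h: 1 - exp(log(0.5) / h)."""
    if halflife <= 0:
        raise ValueError("halflife must be positive")
    return 1.0 - math.exp(math.log(0.5) / halflife)


h = 5
alpha = alpha_from_halflife(h)
# Each step multiplies the weight by (1 - alpha), so after h steps
# the remaining weight is (1 - alpha) ** h, which should equal 0.5.
remaining = (1.0 - alpha) ** h
```

With `h = 1` the formula gives alpha = 0.5, which is the simplest case to verify by hand.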

### Suggested fix for documentation

I suggest changing it to 1-e^(log(0.5)/h) | closed | 2024-11-25T03:53:39Z | 2024-11-25T18:36:09Z | https://github.com/pandas-dev/pandas/issues/60410 | [

"Docs",

"Needs Triage"

] | partev | 0 |

mlfoundations/open_clip | computer-vision | 1,021 | RuntimeError: expected scalar type Float but found BFloat16 (ComfyUI) | !!! Exception during processing !!! expected scalar type Float but found BFloat16

Traceback (most recent call last):

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\execution.py", line 327, in execute

output_data, output_ui, has_subgraph = get_output_data(obj, input_data_all, execution_block_cb=execution_block_cb, pre_execute_cb=pre_execute_cb)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\execution.py", line 202, in get_output_data

return_values = _map_node_over_list(obj, input_data_all, obj.FUNCTION, allow_interrupt=True, execution_block_cb=execution_block_cb, pre_execute_cb=pre_execute_cb)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\execution.py", line 174, in _map_node_over_list

process_inputs(input_dict, i)

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\execution.py", line 163, in process_inputs

results.append(getattr(obj, func)(**inputs))

^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy_extras\nodes_custom_sampler.py", line 651, in sample

samples = guider.sample(noise.generate_noise(latent), latent_image, sampler, sigmas, denoise_mask=noise_mask, callback=callback, disable_pbar=disable_pbar, seed=noise.seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 985, in sample

output = executor.execute(noise, latent_image, sampler, sigmas, denoise_mask, callback, disable_pbar, seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\patcher_extension.py", line 110, in execute

return self.original(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 953, in outer_sample

output = self.inner_sample(noise, latent_image, device, sampler, sigmas, denoise_mask, callback, disable_pbar, seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 936, in inner_sample

samples = executor.execute(self, sigmas, extra_args, callback, noise, latent_image, denoise_mask, disable_pbar)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\patcher_extension.py", line 110, in execute

return self.original(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 715, in sample

samples = self.sampler_function(model_k, noise, sigmas, extra_args=extra_args, callback=k_callback, disable=disable_pbar, **self.extra_options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\utils\_contextlib.py", line 116, in decorate_context

return func(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\k_diffusion\sampling.py", line 161, in sample_euler

denoised = model(x, sigma_hat * s_in, **extra_args)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 380, in __call__

out = self.inner_model(x, sigma, model_options=model_options, seed=seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 916, in __call__

return self.predict_noise(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 919, in predict_noise

return sampling_function(self.inner_model, x, timestep, self.conds.get("negative", None), self.conds.get("positive", None), self.cfg, model_options=model_options, seed=seed)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 360, in sampling_function

out = calc_cond_batch(model, conds, x, timestep, model_options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 196, in calc_cond_batch

return executor.execute(model, conds, x_in, timestep, model_options)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\patcher_extension.py", line 110, in execute

return self.original(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\samplers.py", line 309, in _calc_cond_batch

output = model.apply_model(input_x, timestep_, **c).chunk(batch_chunks)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\model_base.py", line 131, in apply_model

return comfy.patcher_extension.WrapperExecutor.new_class_executor(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\patcher_extension.py", line 110, in execute

return self.original(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\model_base.py", line 160, in _apply_model

model_output = self.diffusion_model(xc, t, context=context, control=control, transformer_options=transformer_options, **extra_conds).float()

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1736, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1747, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\comfy\ldm\flux\model.py", line 204, in forward

out = self.forward_orig(img, img_ids, context, txt_ids, timestep, y, guidance, control, transformer_options, attn_mask=kwargs.get("attention_mask", None))

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI-PuLID-Flux-Enhanced\pulidflux.py", line 148, in forward_orig

img = img + node_data['weight'] * self.pulid_ca[ca_idx](node_data['embedding'], img)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1736, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1747, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\ComfyUI\custom_nodes\ComfyUI-PuLID-Flux-Enhanced\encoders_flux.py", line 53, in forward

latents = self.norm2(latents)

^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1736, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\nn\modules\module.py", line 1747, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\nn\modules\normalization.py", line 217, in forward

return F.layer_norm(

^^^^^^^^^^^^^

File "D:\ComfyUI_windows_portable_nvidia\ComfyUI_windows_portable\python_embeded\Lib\site-packages\torch\nn\functional.py", line 2900, in layer_norm

return torch.layer_norm(

^^^^^^^^^^^^^^^^^

RuntimeError: expected scalar type Float but found BFloat16 | closed | 2025-01-15T14:03:44Z | 2025-01-15T20:39:42Z | https://github.com/mlfoundations/open_clip/issues/1021 | [] | Ehsan7104 | 0 |

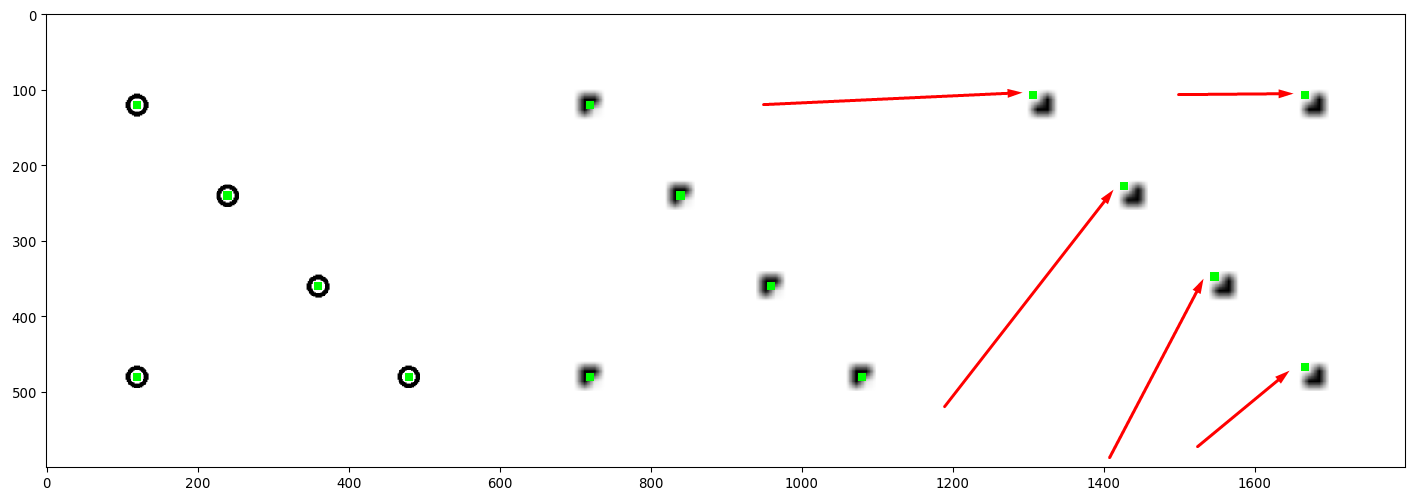

aleju/imgaug | deep-learning | 496 | Keypoints relocate wrongly when rotated | I observed that when I use `iaa.Affine(rotate="anything that is not zero")`, the keypoints are shifted incorrectly on the small image.

In the following image, the 3rd plot is rotated by 180 degrees, but somehow the keypoints are not in the center of the circle. The problem is more noticeable when you resize the image further to 30x30.

Here's the minimal code to reproduce the issue:

```python

import cv2 as cv

import numpy as np

import imgaug as ia

from imgaug import augmenters as iaa

from imgaug.augmentables.kps import KeypointsOnImage

def enlarge_and_plot(img, koi):

    plot = cv.resize(img, (600, 600))

    plot = koi.on(plot).draw_on_image(plot, size=10)

    return plot

# creating big image and big keypoints

img = np.ones((500,500,3), dtype=np.uint8) * 255

coords = [100,100,200,200,300,300,400,400,100,400] # list of XY coordinates

coords = np.float32(coords).reshape((-1, 2))

for coord in coords:

    cv.circle(img, tuple(coord), 10, (0,0,0), thickness=3)

koi = KeypointsOnImage.from_xy_array(coords, img.shape)

plot1 = enlarge_and_plot(img, koi)

# make small image and small keypoints

img = cv.resize(img, (45, 45))

koi = koi.on(img)

plot2 = enlarge_and_plot(img, koi)

# rotate small image and small keypoints

print(koi)

img, koi = iaa.Affine(rotate=180)(image=img, keypoints=koi)

print(koi)

plot3 = enlarge_and_plot(img, koi)

ia.imshow(np.hstack([plot1, plot2, plot3]))

```

I don't know if this is a bug or it's simply my mistake of how I'm using the library. Please give me insight. @aleju

But I think it's kind of an off-by-one error. I see that the first coordinate (100,100) gets mapped to (9,9) on 45x45 image, which is correct, but after rotation, the coordinate gets mapped to (35,35) which should be (36,36) if I understand correctly.

Here's the output of the program:

```python

KeypointsOnImage([Keypoint(x=9.00000000, y=9.00000000), Keypoint(x=18.00000000, y=18.00000000), Keypoint(x=27.00000191, y=27.00000191), Keypoint(x=36.00000000, y=36.00000000), Keypoint(x=9.00000000, y=36.00000000)], shape=(45, 45, 3))

KeypointsOnImage([Keypoint(x=35.00000000, y=35.00000000), Keypoint(x=26.00000000, y=26.00000000), Keypoint(x=16.99999809, y=16.99999809), Keypoint(x=8.00000000, y=8.00000000), Keypoint(x=35.00000000, y=8.00000000)], shape=(45, 45, 3))

```
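The one-pixel difference can be reproduced by hand. The sketch below rotates the x-coordinates by 180 degrees around two candidate centers on a 45-pixel axis: the middle pixel index, (45 - 1) / 2 = 22, and the continuous midpoint, 45 / 2 = 22.5. Which convention imgaug applies internally is an assumption here; the sketch only shows which choice of center matches the printed values.

```python
def rotate_180(coord, center):
    """Rotate a 1-D coordinate by 180 degrees around the given center."""
    return 2 * center - coord


size = 45
xs = [9, 18, 27, 36]

# Assumption A: the center is the middle pixel index, (size - 1) / 2 = 22.0
index_centered = [rotate_180(x, (size - 1) / 2) for x in xs]

# Assumption B: the center is the continuous midpoint, size / 2 = 22.5
continuous_centered = [rotate_180(x, size / 2) for x in xs]

print(index_centered)       # [35.0, 26.0, 17.0, 8.0]
print(continuous_centered)  # [36.0, 27.0, 18.0, 9.0]
```

The values under assumption A agree with the rotated keypoints printed above, while assumption B gives the (36, 36) expected in the report.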

If this is the case, it means that you won't notice this bug in a big image because a one-pixel error is not visible to the naked eye. | closed | 2019-11-14T13:15:33Z | 2019-11-16T08:53:19Z | https://github.com/aleju/imgaug/issues/496 | [] | offchan42 | 6 |

tableau/server-client-python | rest-api | 760 | assign groups to projects while publishing workbooks | Is there a way to assign projects to groups based on the requirement?

| open | 2020-12-10T17:05:38Z | 2021-02-22T19:17:17Z | https://github.com/tableau/server-client-python/issues/760 | [

"enhancement"

] | yashwathreddy | 3 |

ymcui/Chinese-BERT-wwm | tensorflow | 205 | Computing the similarity of two sentences |

```python
>>> import torch
>>> from transformers import BertModel, BertTokenizer
>>> model_name = "hfl/chinese-roberta-wwm-ext-large"
>>> tokenizer = BertTokenizer.from_pretrained(model_name)
>>> model = BertModel.from_pretrained(model_name)
>>> input_text1 = "今天天气不错,你觉得呢?"
>>> input_text2 = "今天天气不错,你觉得呢?我喜欢吃饺子"
>>> input_ids1 = tokenizer.encode(input_text1, add_special_tokens=True)
>>> input_ids2 = tokenizer.encode(input_text2, add_special_tokens=True)
>>> input_ids1 = torch.tensor([input_ids1])
>>> input_ids2 = torch.tensor([input_ids2])
>>> out1 = model(input_ids1)[0]
>>> out2 = model(input_ids2)[0]
>>> out1.shape
torch.Size([1, 14, 1024])
>>> out2.shape
torch.Size([1, 20, 1024])
```

Why do the output features have different dimensions? I want to compare the similarity of two sentences; which dimension's features should I use? | closed | 2021-11-24T08:12:51Z | 2022-02-13T12:04:20Z | https://github.com/ymcui/Chinese-BERT-wwm/issues/205 | [] | yfq512 | 2 |
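In the shapes above, the middle dimension (14 and 20) is the number of tokens, so it changes with sentence length, while the last dimension (1024) is the hidden size. One common way to compare two sentences, offered here as a sketch and not as the repository authors' recommendation, is to average the token vectors into a single fixed-size vector per sentence and then take the cosine similarity. The helpers `mean_pool` and `cosine_similarity` are illustrative names and use plain Python lists in place of tensors.

```python
import math


def mean_pool(token_vectors):
    """Average a list of token vectors (seq_len x hidden) into one vector."""
    length = len(token_vectors)
    hidden = len(token_vectors[0])
    return [sum(vec[i] for vec in token_vectors) / length for i in range(hidden)]


def cosine_similarity(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy data: two "sentences" with different token counts but hidden size 2.
sentence1 = [[1.0, 0.0], [3.0, 0.0]]              # 2 tokens
sentence2 = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]]  # 3 tokens
similarity = cosine_similarity(mean_pool(sentence1), mean_pool(sentence2))
```

With the tensors from the session above, the equivalent would be `out1.mean(dim=1)` and `out2.mean(dim=1)` followed by `torch.nn.functional.cosine_similarity`; taking the first token's vector (`out1[:, 0]`) is another frequently used choice.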

scanapi/scanapi | rest-api | 514 | Add hacktoberfest topic on the repository | It would be nice to have the topic for next month | closed | 2021-09-26T20:57:59Z | 2022-02-02T16:41:24Z | https://github.com/scanapi/scanapi/issues/514 | [

"Question"

] | patrickelectric | 6 |

JaidedAI/EasyOCR | machine-learning | 430 | CUDA out of memory error while trying to transcribe a lot of images. | As the title suggests, I am trying to transcribe thousands of images, but I ran into this CUDA OOM error after 40 images had been transcribed:

```

Traceback (most recent call last):

File "C:/Users/cubeservdev/Dev/OCRTest/ocr_test.py", line 15, in <module>

transcription = reader.readtext(f'images/{image_file}', detail=0)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\easyocr\easyocr.py", line 378, in readtext

add_margin, False)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\easyocr\easyocr.py", line 273, in detect

False, self.device, optimal_num_chars)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\easyocr\detection.py", line 81, in get_textbox

bboxes, polys = test_net(canvas_size, mag_ratio, detector, image, text_threshold, link_threshold, low_text, poly, device, estimate_num_chars)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\easyocr\detection.py", line 38, in test_net

y, feature = net(x)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\torch\nn\modules\module.py", line 727, in _call_impl

result = self.forward(*input, **kwargs)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\torch\nn\parallel\data_parallel.py", line 159, in forward

return self.module(*inputs[0], **kwargs[0])

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\torch\nn\modules\module.py", line 727, in _call_impl

result = self.forward(*input, **kwargs)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\easyocr\craft.py", line 60, in forward

sources = self.basenet(x)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\torch\nn\modules\module.py", line 727, in _call_impl

result = self.forward(*input, **kwargs)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\easyocr\model\modules.py", line 61, in forward

h = self.slice1(X)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\torch\nn\modules\module.py", line 727, in _call_impl

result = self.forward(*input, **kwargs)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\torch\nn\modules\container.py", line 117, in forward

input = module(input)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\torch\nn\modules\module.py", line 727, in _call_impl

result = self.forward(*input, **kwargs)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\torch\nn\modules\batchnorm.py", line 136, in forward

self.weight, self.bias, bn_training, exponential_average_factor, self.eps)

File "C:\ProgramData\Anaconda3\envs\EasyOCRTest\lib\site-packages\torch\nn\functional.py", line 2058, in batch_norm

training, momentum, eps, torch.backends.cudnn.enabled

RuntimeError: CUDA out of memory. Tried to allocate 1.41 GiB (GPU 0; 3.00 GiB total capacity; 1.75 GiB already allocated; 0 bytes free; 1.84 GiB reserved in total by PyTorch)

libpng warning: iCCP: known incorrect sRGB profile

```

```py

import os
import time

import easyocr

if __name__ == '__main__':
    images = os.listdir('images')
    image_count = len(images)
    reader = easyocr.Reader(['ch_sim', 'en'], recog_network='latin_g1', gpu=True)
    for index, image_file in enumerate(images):
        text_file_name = image_file.split('.')[0]
        print(f'Transcribing ({index}/{image_count}) {text_file_name}', end='')
        start_time = time.time()
        with open(f'transcriptions/{text_file_name}.txt', 'w+', encoding='utf-8') as text_file:
            transcription = reader.readtext(f'images/{image_file}', detail=0)
            text_file.write('\n'.join(transcription))
        print(f' finished in {round(time.time() - start_time, 2)} seconds.')

```

I don't think the memory issues/leaks are being caused by my code but I could most definitely be wrong. How can I resolve this issue? | closed | 2021-05-16T18:42:48Z | 2022-03-02T09:24:59Z | https://github.com/JaidedAI/EasyOCR/issues/430 | [] | cubeserverdev | 4 |

mage-ai/mage-ai | data-science | 5,299 | [BUG] The unique_conflict_method='UPDATE' function of MySQL data exporter did not work properly | ### Mage version

v0.9.72

### Describe the bug

When I use the MySQL data exporter as in the following code:

```Python

with MySQL.with_config(ConfigFileLoader(config_path, config_profile)) as loader:
    loader.export(
        df,
        schema_name=None,
        table_name=table_name,
        index=False,  # Specifies whether to include index in exported table
        if_exists='append',  # Specify resolution policy if table name already exists
        allow_reserved_words=True,
        unique_conflict_method='UPDATE',
        unique_constraints=constraints_columns,
    )

```

It reports an error: `You have an error in your SQL syntax; check the manual that corresponds to your MariaDB server version for the right syntax to use near 'AS new`
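
A likely cause, stated here as an assumption and not a confirmed diagnosis: the row-alias form `INSERT ... VALUES (...) AS new ON DUPLICATE KEY UPDATE col = new.col` was introduced in MySQL 8.0.19 and is not accepted by MariaDB, whereas the older `VALUES(col)` form is accepted by both. The sketch below builds both variants so the difference is visible. `build_upsert` and its parameters are hypothetical names used for illustration, not part of mage-ai.

```python
def build_upsert(table, columns, use_row_alias):
    """Return an INSERT ... ON DUPLICATE KEY UPDATE statement with %s placeholders."""
    col_list = ', '.join(columns)
    placeholders = ', '.join(['%s'] * len(columns))
    insert = f'INSERT INTO {table} ({col_list}) VALUES ({placeholders})'
    if use_row_alias:
        # Row-alias form: MySQL 8.0.19+ only (assumed to be what MariaDB rejects).
        updates = ', '.join(f'{c} = new.{c}' for c in columns)
        return f'{insert} AS new ON DUPLICATE KEY UPDATE {updates}'
    # VALUES() form: accepted by MariaDB and by older MySQL versions.
    updates = ', '.join(f'{c} = VALUES({c})' for c in columns)
    return f'{insert} ON DUPLICATE KEY UPDATE {updates}'


print(build_upsert('users', ['id', 'name'], use_row_alias=False))
print(build_upsert('users', ['id', 'name'], use_row_alias=True))
```

The table and column names are placeholders; real code would also need to quote identifiers.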

### To reproduce

1. use the code I provided

2. run the block

### Expected behavior

1. update the conflict record successfully

### Screenshots

_No response_

### Operating system

_No response_

### Additional context

The problem could be fixed with the code below in MySQL.py:

```Python

if UNIQUE_CONFLICT_METHOD_UPDATE == unique_conflict_method:
    update_command = [f'{col} = VALUES({col})' for col in cleaned_columns]
    query += [
        f"ON DUPLICATE KEY UPDATE {', '.join(update_command)}",
    ]

``` | open | 2024-07-29T14:49:13Z | 2024-07-29T14:49:13Z | https://github.com/mage-ai/mage-ai/issues/5299 | [

"bug"

] | highkay | 0 |

rougier/scientific-visualization-book | matplotlib | 53 | Wrong Code in page 96 (Size, aspect & layout)? | When I run the `F`, `H` and `I` scripts from page 96 (Chapter 8, Size, aspect & layout), I get the figures shown below, which differ from Figure 8.1. When I set the axes aspect to 0.5, 1 and 2 respectively, the output looks normal. It seems that `aspect='auto'` doesn't work.

- F, H and I

<img width="295" alt="F script" src="https://user-images.githubusercontent.com/39882510/174038682-c45a16a8-5a21-4ec5-b2c6-2358fb59e9aa.png">

<img width="291" alt="H script" src="https://user-images.githubusercontent.com/39882510/174038799-696424c4-88fa-4527-a2e6-114aba0ca5c9.png">

<img width="290" alt="I script" src="https://user-images.githubusercontent.com/39882510/174038996-7cf45c48-2920-4ed7-81c7-e43ec7361380.png">

| closed | 2022-06-16T09:32:37Z | 2022-06-23T07:08:39Z | https://github.com/rougier/scientific-visualization-book/issues/53 | [] | zhangkaihua88 | 3 |

aminalaee/sqladmin | asyncio | 316 | Feature parity with Flask-Admin | # Feature parity with Flask-Admin

## General features

| Feature | Status |

| ---------------------------------------------- | ------- |

| `ModelView` with configurations | ✓ |

| `BaseView` for creating custom views | ✓ |

| Authentication | ✓ |

| Ajax search related model | ✓ |

| Customizing the templates (basic) | ✓ |

| Batch operations | |

| Inline models | |

| Limited support for multiple PK models | |

| Managing files | |

| Allow usage of related model in list/sort/etc. | |

| Grouping views | |

| Customizing batch operations (actions) | |

## ModelView options parity

| Option | Status |

| ----------------------------- | ------- |

| `can_create` | ✓ |

| `can_edit` | ✓ |

| `can_delete` | ✓ |

| `can_view_details` | ✓ |

| `can_export` | ✓ |

| `column_list` | ✓ |

| `column_exclude_list` | ✓ |

| `column_formatters` | ✓ |

| `page_size` | ✓ |

| `page_size_options` | ✓ |

| `column_searchable_list` | ✓ |

| `column_sortable_list` | ✓ |

| `column_default_sort` | ✓ |

| `column_details_list` | ✓ |

| `column_details_exclude_list` | ✓ |

| `column_formatters_detail` | ✓ |

| `list_template` | ✓ |

| `create_template` | ✓ |

| `details_template` | ✓ |

| `edit_template` | ✓ |

| `column_export_list` | ✓ |

| `column_export_exclude_list` | ✓ |

| `export_types` | ✓ |

| `export_max_rows` | ✓ |

| `form` | ✓ |

| `form_base_class` | ✓ |

| `form_args` | ✓ |

| `form_columns` | ✓ |

| `form_excluded_columns` | ✓ |

| `form_overrides` | ✓ |

| `form_include_pk` | ✓ |

| `form_ajax_refs` | ✓ |

| `column_filters` | |

# Django features

## General features

| Feature | Status |

| ---------------- | ------- |

| `save_as` option | ✓ |

| open | 2022-09-14T09:32:47Z | 2022-12-21T11:00:26Z | https://github.com/aminalaee/sqladmin/issues/316 | [] | aminalaee | 0 |

microsoft/unilm | nlp | 1,325 | E5: what prompt is used in training and evaluation? | @intfloat

**Describe**

I tried to reproduce the E5 score on MTEB (particularly BEIR) using the released checkpoint, but I do observe a big gap on some datasets (e.g. on TREC-COVID reproduced 51.0 vs. reported 79.6). I suspect that the prompt can be the key (as in the eval [code](https://github.com/microsoft/unilm/blob/027f0eb1cedac529915721110ab9a8dbdfad4dd9/e5/mteb_eval.py#L27C24-L27C30)). It is not clearly stated in the paper (Sec 4.3, it mentions that "For tasks other than zero-shot text classification and retrieval, we use the query embeddings by default.").

So I wonder if you can elaborate what prompts are used in E5 training and MTEB evaluation?

1. What prompts are used in pretraining?

2. What prompts are used in fine-tuning?

3. What prompts are used in MTEB evaluation? Is the prompt applied to both query and doc ([line 32](https://github.com/microsoft/unilm/blob/027f0eb1cedac529915721110ab9a8dbdfad4dd9/e5/mteb_eval.py#L32) shows ['', 'query: ', 'passage: '])?

Thank you!

Rui

| closed | 2023-10-11T21:21:23Z | 2023-10-12T02:18:52Z | https://github.com/microsoft/unilm/issues/1325 | [] | memray | 2 |

apache/airflow | python | 47,274 | Clearing Task Instances Intermittently Throws HTTP 500 Error | ### Apache Airflow version

AF3 beta1

### If "Other Airflow 2 version" selected, which one?

_No response_

### What happened?

When we try to clear a task instance, the request intermittently fails with an HTTP 500 error.

**Logs:**

```

INFO: 192.168.207.1:54306 - "POST /public/dags/etl_dag/clearTaskInstances HTTP/1.1" 500 Internal Server Error

ERROR: Exception in ASGI application

+ Exception Group Traceback (most recent call last):

| File "/usr/local/lib/python3.9/site-packages/starlette/_utils.py", line 76, in collapse_excgroups

| yield

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/base.py", line 178, in __call__

| recv_stream.close()

| File "/usr/local/lib/python3.9/site-packages/anyio/_backends/_asyncio.py", line 767, in __aexit__

| raise BaseExceptionGroup(

| exceptiongroup.ExceptionGroup: unhandled errors in a TaskGroup (1 sub-exception)

+-+---------------- 1 ----------------

| Traceback (most recent call last):

| File "/usr/local/lib/python3.9/site-packages/uvicorn/protocols/http/httptools_impl.py", line 409, in run_asgi

| result = await app( # type: ignore[func-returns-value]

| File "/usr/local/lib/python3.9/site-packages/fastapi/applications.py", line 1054, in __call__

| await super().__call__(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/applications.py", line 112, in __call__

| await self.middleware_stack(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/errors.py", line 187, in __call__

| raise exc

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/errors.py", line 165, in __call__

| await self.app(scope, receive, _send)

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/gzip.py", line 29, in __call__

| await responder(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/gzip.py", line 126, in __call__

| await super().__call__(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/gzip.py", line 46, in __call__

| await self.app(scope, receive, self.send_with_compression)

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/cors.py", line 93, in __call__

| await self.simple_response(scope, receive, send, request_headers=headers)

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/cors.py", line 144, in simple_response

| await self.app(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/base.py", line 178, in __call__

| recv_stream.close()

| File "/usr/local/lib/python3.9/contextlib.py", line 137, in __exit__

| self.gen.throw(typ, value, traceback)

| File "/usr/local/lib/python3.9/site-packages/starlette/_utils.py", line 82, in collapse_excgroups

| raise exc

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/base.py", line 175, in __call__

| response = await self.dispatch_func(request, call_next)

| File "/opt/airflow/airflow/api_fastapi/core_api/middleware.py", line 28, in dispatch

| response = await call_next(request)

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/base.py", line 153, in call_next

| raise app_exc

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/base.py", line 140, in coro

| await self.app(scope, receive_or_disconnect, send_no_error)

| File "/usr/local/lib/python3.9/site-packages/starlette/middleware/exceptions.py", line 62, in __call__

| await wrap_app_handling_exceptions(self.app, conn)(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/_exception_handler.py", line 53, in wrapped_app

| raise exc

| File "/usr/local/lib/python3.9/site-packages/starlette/_exception_handler.py", line 42, in wrapped_app

| await app(scope, receive, sender)

| File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 714, in __call__

| await self.middleware_stack(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 734, in app

| await route.handle(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 288, in handle

| await self.app(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 76, in app

| await wrap_app_handling_exceptions(app, request)(scope, receive, send)

| File "/usr/local/lib/python3.9/site-packages/starlette/_exception_handler.py", line 53, in wrapped_app

| raise exc

| File "/usr/local/lib/python3.9/site-packages/starlette/_exception_handler.py", line 42, in wrapped_app

| await app(scope, receive, sender)

| File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 73, in app

| response = await f(request)

| File "/usr/local/lib/python3.9/site-packages/fastapi/routing.py", line 301, in app

| raw_response = await run_endpoint_function(

| File "/usr/local/lib/python3.9/site-packages/fastapi/routing.py", line 214, in run_endpoint_function

| return await run_in_threadpool(dependant.call, **values)

| File "/usr/local/lib/python3.9/site-packages/starlette/concurrency.py", line 37, in run_in_threadpool

| return await anyio.to_thread.run_sync(func)

| File "/usr/local/lib/python3.9/site-packages/anyio/to_thread.py", line 56, in run_sync

| return await get_async_backend().run_sync_in_worker_thread(

| File "/usr/local/lib/python3.9/site-packages/anyio/_backends/_asyncio.py", line 2461, in run_sync_in_worker_thread

| return await future

| File "/usr/local/lib/python3.9/site-packages/anyio/_backends/_asyncio.py", line 962, in run

| result = context.run(func, *args)

| File "/opt/airflow/airflow/api_fastapi/core_api/routes/public/task_instances.py", line 651, in post_clear_task_instances

| dag = dag.partial_subset(

| File "/opt/airflow/task_sdk/src/airflow/sdk/definitions/dag.py", line 811, in partial_subset

| dag.task_dict = {

| File "/opt/airflow/task_sdk/src/airflow/sdk/definitions/dag.py", line 812, in <dictcomp>

| t.task_id: _deepcopy_task(t)

| File "/opt/airflow/task_sdk/src/airflow/sdk/definitions/dag.py", line 808, in _deepcopy_task

| return copy.deepcopy(t, memo)

| File "/usr/local/lib/python3.9/copy.py", line 153, in deepcopy

| y = copier(memo)

| File "/opt/airflow/task_sdk/src/airflow/sdk/definitions/baseoperator.py", line 1188, in __deepcopy__

| object.__setattr__(result, k, v)

| AttributeError: can't set attribute

+------------------------------------

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/usr/local/lib/python3.9/site-packages/uvicorn/protocols/http/httptools_impl.py", line 409, in run_asgi

result = await app( # type: ignore[func-returns-value]

File "/usr/local/lib/python3.9/site-packages/fastapi/applications.py", line 1054, in __call__

await super().__call__(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/applications.py", line 112, in __call__

await self.middleware_stack(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/errors.py", line 187, in __call__

raise exc

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/errors.py", line 165, in __call__

await self.app(scope, receive, _send)

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/gzip.py", line 29, in __call__

await responder(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/gzip.py", line 126, in __call__

await super().__call__(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/gzip.py", line 46, in __call__

await self.app(scope, receive, self.send_with_compression)

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/cors.py", line 93, in __call__

await self.simple_response(scope, receive, send, request_headers=headers)

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/cors.py", line 144, in simple_response

await self.app(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/base.py", line 178, in __call__

recv_stream.close()

File "/usr/local/lib/python3.9/contextlib.py", line 137, in __exit__

self.gen.throw(typ, value, traceback)

File "/usr/local/lib/python3.9/site-packages/starlette/_utils.py", line 82, in collapse_excgroups

raise exc

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/base.py", line 175, in __call__

response = await self.dispatch_func(request, call_next)

File "/opt/airflow/airflow/api_fastapi/core_api/middleware.py", line 28, in dispatch

response = await call_next(request)

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/base.py", line 153, in call_next

raise app_exc

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/base.py", line 140, in coro

await self.app(scope, receive_or_disconnect, send_no_error)

File "/usr/local/lib/python3.9/site-packages/starlette/middleware/exceptions.py", line 62, in __call__

await wrap_app_handling_exceptions(self.app, conn)(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/_exception_handler.py", line 53, in wrapped_app

raise exc

File "/usr/local/lib/python3.9/site-packages/starlette/_exception_handler.py", line 42, in wrapped_app

await app(scope, receive, sender)

File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 714, in __call__

await self.middleware_stack(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 734, in app

await route.handle(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 288, in handle

await self.app(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 76, in app

await wrap_app_handling_exceptions(app, request)(scope, receive, send)

File "/usr/local/lib/python3.9/site-packages/starlette/_exception_handler.py", line 53, in wrapped_app

raise exc

File "/usr/local/lib/python3.9/site-packages/starlette/_exception_handler.py", line 42, in wrapped_app

await app(scope, receive, sender)

File "/usr/local/lib/python3.9/site-packages/starlette/routing.py", line 73, in app

response = await f(request)

File "/usr/local/lib/python3.9/site-packages/fastapi/routing.py", line 301, in app

raw_response = await run_endpoint_function(

File "/usr/local/lib/python3.9/site-packages/fastapi/routing.py", line 214, in run_endpoint_function

return await run_in_threadpool(dependant.call, **values)

File "/usr/local/lib/python3.9/site-packages/starlette/concurrency.py", line 37, in run_in_threadpool

return await anyio.to_thread.run_sync(func)

File "/usr/local/lib/python3.9/site-packages/anyio/to_thread.py", line 56, in run_sync

return await get_async_backend().run_sync_in_worker_thread(

File "/usr/local/lib/python3.9/site-packages/anyio/_backends/_asyncio.py", line 2461, in run_sync_in_worker_thread

return await future

File "/usr/local/lib/python3.9/site-packages/anyio/_backends/_asyncio.py", line 962, in run

result = context.run(func, *args)

File "/opt/airflow/airflow/api_fastapi/core_api/routes/public/task_instances.py", line 651, in post_clear_task_instances

dag = dag.partial_subset(

File "/opt/airflow/task_sdk/src/airflow/sdk/definitions/dag.py", line 811, in partial_subset

dag.task_dict = {

File "/opt/airflow/task_sdk/src/airflow/sdk/definitions/dag.py", line 812, in <dictcomp>

t.task_id: _deepcopy_task(t)

File "/opt/airflow/task_sdk/src/airflow/sdk/definitions/dag.py", line 808, in _deepcopy_task

return copy.deepcopy(t, memo)

File "/usr/local/lib/python3.9/copy.py", line 153, in deepcopy

y = copier(memo)

File "/opt/airflow/task_sdk/src/airflow/sdk/definitions/baseoperator.py", line 1188, in __deepcopy__

object.__setattr__(result, k, v)

AttributeError: can't set attribute

```
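
For readers unfamiliar with the final error: `object.__setattr__` raises `AttributeError` when the attribute name resolves to a property on the class that has no setter. The sketch below reproduces only that mechanism with a hypothetical `Task` class. It is not Airflow code, and it makes no claim about how such a key ends up in the instance dictionary or why the failure is intermittent.

```python
import copy


class Task:
    def __init__(self, task_id):
        self.task_id = task_id

    @property
    def label(self):  # read-only: no setter is defined
        return self.task_id.upper()

    def __deepcopy__(self, memo):
        result = type(self).__new__(type(self))
        memo[id(self)] = result
        for k, v in self.__dict__.items():
            # Fails if k names a read-only property on the class.
            object.__setattr__(result, k, copy.deepcopy(v, memo))
        return result


task = Task('extract')
clone = copy.deepcopy(task)  # the normal case works

# Force a key that collides with the read-only property into the instance dict.
task.__dict__['label'] = 'stale'
try:
    copy.deepcopy(task)
    failed = False
except AttributeError:
    failed = True
print(clone.task_id, failed)
```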

### What you think should happen instead?

The clear task instances endpoint should not return HTTP 500.

### How to reproduce

As mentioned, the issue is intermittent: clear a task instance from the UI a few times and you will observe it.

### Operating System

Linux

### Versions of Apache Airflow Providers

_No response_

### Deployment

Other

### Deployment details

_No response_

### Anything else?

_No response_

### Are you willing to submit PR?

- [ ] Yes I am willing to submit a PR!

### Code of Conduct

- [x] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)

| open | 2025-03-02T10:59:30Z | 2025-03-12T07:18:15Z | https://github.com/apache/airflow/issues/47274 | [

"kind:bug",

"priority:high",

"area:core",

"AIP-84",

"area:task-sdk",

"affected_version:3.0.0beta"

] | vatsrahul1001 | 11 |

ray-project/ray | pytorch | 51,493 | CI test windows://python/ray/tests:test_actor_pool is consistently_failing | CI test **windows://python/ray/tests:test_actor_pool** is consistently_failing. Recent failures:

- https://buildkite.com/ray-project/postmerge/builds/8965#0195aaf1-9737-4a02-a7f8-1d7087c16fb1

- https://buildkite.com/ray-project/postmerge/builds/8965#0195aa03-5c4f-4156-97c5-9793049512c1

DataCaseName-windows://python/ray/tests:test_actor_pool-END

Managed by OSS Test Policy | closed | 2025-03-19T00:05:15Z | 2025-03-19T21:51:38Z | https://github.com/ray-project/ray/issues/51493 | [

"bug",

"triage",

"core",

"flaky-tracker",

"ray-test-bot",

"ci-test",

"weekly-release-blocker",

"stability"

] | can-anyscale | 3 |

albumentations-team/albumentations | machine-learning | 1,630 | [Tech debt] Improve Interface for RandomFog | Right now in the transform we have separate parameters for `fog_coef_lower` and `fog_coef_upper`.

It would be better to have one parameter `fog_coef_range = [fog_coef_lower, fog_coef_upper]`

=>

We can update the transform to use the new signature and keep the old parameters working, but mark them as deprecated.
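
A minimal sketch of that migration, assuming the old keywords default to `None` so that their use can be detected. `resolve_fog_coef_range` is a hypothetical helper, and both the `(0.3, 1.0)` default and the `[0, 1]` bounds check are assumptions, not values taken from the library.

```python
import warnings


def resolve_fog_coef_range(fog_coef_lower=None, fog_coef_upper=None,
                           fog_coef_range=(0.3, 1.0)):
    """Return (lower, upper), honouring the deprecated keyword arguments."""
    if fog_coef_lower is not None or fog_coef_upper is not None:
        warnings.warn(
            'fog_coef_lower and fog_coef_upper are deprecated; '
            'use fog_coef_range instead.',
            DeprecationWarning,
            stacklevel=2,
        )
        # An old-style argument overrides the matching end of the range.
        lower = fog_coef_range[0] if fog_coef_lower is None else fog_coef_lower
        upper = fog_coef_range[1] if fog_coef_upper is None else fog_coef_upper
        fog_coef_range = (lower, upper)
    lower, upper = fog_coef_range
    if not 0 <= lower <= upper <= 1:
        raise ValueError(f'expected 0 <= lower <= upper <= 1, got {fog_coef_range}')
    return (lower, upper)


print(resolve_fog_coef_range(fog_coef_range=(0.2, 0.5)))
```

Old call sites keep working and emit a `DeprecationWarning`, while new call sites pass only `fog_coef_range`.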

----

PR could be similar to https://github.com/albumentations-team/albumentations/pull/1704 | closed | 2024-04-05T18:37:00Z | 2024-06-07T04:34:28Z | https://github.com/albumentations-team/albumentations/issues/1630 | [

"good first issue",

"Tech debt"

] | ternaus | 1 |

dot-agent/nextpy | fastapi | 157 | Why hasn't this project been updated for a while | This is a good project, but it hasn't been updated for a long time. Why is that | open | 2024-09-23T09:25:54Z | 2025-01-13T12:36:11Z | https://github.com/dot-agent/nextpy/issues/157 | [] | redpintings | 2 |

hyperspy/hyperspy | data-visualization | 2,970 | Should `hs.load` always return a list? | One common source of confusion for HyperSpy beginners is issues like https://github.com/hyperspy/hyperspy/issues/2959, which arise because `hs.load` can return either a list of signals or a single signal. This is very simple to explain, but it could be avoided if `hs.load` always returned a list, even when the file to load contains only a single dataset.

For example, we could change the API to have a syntax very similar to what matplotlib does with `plot`:

```python

import matplotlib.pyplot as plt

fig, ax = plt.subplots()

lines = ax.plot([0, 1, 2])

print(lines)

# lines is a list

# [<matplotlib.lines.Line2D at 0x252af81fa90>]

line, = ax.plot([3, 4, 5])

print(line)

# line is not a list

# <matplotlib.lines.Line2D at 0x252afa14640>

```
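
The same unpacking idiom could be tried today with a small wrapper that normalizes the return value. This is only a sketch: `load_as_list` is a hypothetical helper, the file names are made up, and `fake_loader` stands in for `hs.load` so the snippet runs without HyperSpy installed.

```python
def load_as_list(loader, *args, **kwargs):
    """Call loader and always return a list, wrapping a single result."""
    result = loader(*args, **kwargs)
    return result if isinstance(result, list) else [result]


# Stand-in loader for illustration; with HyperSpy one would pass hs.load instead.
def fake_loader(filename):
    return 'signal' if filename == 'single.hspy' else ['signal_a', 'signal_b']


signal, = load_as_list(fake_loader, 'single.hspy')  # unpack the only element
signals = load_as_list(fake_loader, 'multi.hspy')   # already a list
print(signal, signals)
```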

| open | 2022-06-22T10:38:02Z | 2023-09-08T20:16:42Z | https://github.com/hyperspy/hyperspy/issues/2970 | [

"type: API change"

] | ericpre | 1 |

Significant-Gravitas/AutoGPT | python | 9,587 | Deepseek support | Hey Devs,

let me start by saying that this repo is great. Good job on your work, and thanks for sharing it.

Could we include support of DeepSeek V3 API? If there is a solution out there then when can it be implemented?

Thanks! | open | 2025-03-06T10:34:11Z | 2025-03-12T10:43:11Z | https://github.com/Significant-Gravitas/AutoGPT/issues/9587 | [] | q377985133 | 3 |

tqdm/tqdm | jupyter | 697 | DataFrameGroupBy.progress_apply not always equal to DataFrameGroupBy.apply | - [X] I have visited the [source website], and in particular

read the [known issues]

- [X] I have searched through the [issue tracker] for duplicates

- [X] I have mentioned version numbers, operating system and

environment, where applicable:

**tqdm version**: 4.31.1

**Python version:** 3.7.1

**OS Version:** Ubuntu 16.04

**Context:**

```python

import pandas as pd

import numpy as np

from tqdm import tqdm

tqdm.pandas()

df_size = int(5e6)

df = pd.DataFrame(dict(a=np.random.randint(1, 8, df_size),

b=np.random.rand(df_size)))

```

**Observed:**

```python

df.groupby('a').apply(max)

a b

a

1 1.0 0.999999

2 2.0 0.999997

3 3.0 0.999999

4 4.0 0.999997

5 5.0 0.999999

6 6.0 1.000000

7 7.0 1.000000

# but

df.groupby('a').progress_apply(max)

a

1 b

2 b

3 b

4 b

5 b

6 b

7 b

dtype: object

```

**Expected:**

`df.groupby('a').apply(max) ` and `df.groupby('a').progress_apply(max)` return the same value.

**Additional information:**

Replacing `max` by `sum` returns normal result for `apply`, but throws this error for `progress_apply`:

> TypeError: unsupported operand type(s) for +: 'int' and 'str'
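
One plausible explanation, offered as an assumption and not verified against the pandas or tqdm source for these versions: pandas may swap the builtins `max` and `sum` for vectorised equivalents when they are passed directly to `apply`, while the wrapper used by `progress_apply` hides the builtin, so Python's own `max` and `sum` end up iterating over each group. Iterating over a DataFrame yields its column labels, which would produce both the `b` result and the `TypeError` above. A plain dict iterates over its keys in the same way, so it serves as a stand-in here:

```python
# A dict stands in for one group's DataFrame: iterating either yields column labels.
group = {'a': [1, 1, 1], 'b': [0.2, 0.9, 0.5]}

# Builtin max compares the labels 'a' and 'b', not the column values.
largest = max(group)
print(largest)

# Builtin sum starts from the integer 0 and then tries 0 + 'a'.
try:
    sum(group)
    message = ''
except TypeError as exc:
    message = str(exc)
print(message)
```

If this is indeed the cause, passing an explicit callable such as `lambda g: g.max()` to both `apply` and `progress_apply` would be expected to give matching results.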

| open | 2019-03-16T17:32:53Z | 2019-05-09T16:29:45Z | https://github.com/tqdm/tqdm/issues/697 | [

"help wanted 🙏",

"to-fix ⌛",

"submodule ⊂"

] | nalepae | 4 |

holoviz/panel | jupyter | 7,402 | Unable to run ChartJS example from custom_models.md | <details>

<summary>Software Version Info</summary>

```plaintext

panel.__version__ = '1.5.2.post1.dev8+gef313542.d20241015'

bokeh.__version__ = 3.6.0

OS Windows

Browser firefox

```

</details>

The impression I get is that [custom_models.md](https://github.com/holoviz/panel/blob/main/doc/developer_guide/custom_models.md) is potentially a little out of date or does not build on all systems. While attempting to create the example custom model using ChartJS, I encountered a few errors in the chartjs.ts file that had to be fixed, but the bulk of the issue occurs when I run the final command:

```

panel serve panel/tests/pane/test_chartjs.py --auto --show

```

A window is opened showing the python error:

```

AttributeError: unexpected attribute 'title' to ChartJS, possible attributes are align, aspect_ratio, clicks, context_menu, css_classes, css_variables, disabled, elements, flow_mode, height, height_policy, js_event_callbacks, js_property_callbacks, margin, max_height, max_width, min_height, min_width, name, object, resizable, sizing_mode, styles, stylesheets, subscribed_events, syncable, tags, visible, width or width_policy

```

### Changes Made from the custom_models example:

For the most part I have followed custom_models.md to the letter, except for these changes required to get the code to run:

panel/model/chartjs.py

```python

from bokeh.core.properties import Int, String

from .layout import HTMLBox  # changed from 'from bokeh.models import HTMLBox' as this threw error 'ImportError: cannot import name 'HTMLBox' from 'bokeh.models' (C:\Users\lyndo\OneDrive\Documents\Professional_Work\NMIS\three-panel\panel\.pixi\envs\test-312\Lib\site-packages\bokeh\models\__init__.py)'


class ChartJS(HTMLBox):
    """Custom ChartJS Model"""

    object = String()
    clicks = Int()

```

panel/model/chartjs.ts

```typescript

// See https://docs.bokeh.org/en/latest/docs/reference/models/layouts.html
import { HTMLBox, HTMLBoxView } from "./layout" // changed from 'import { HTMLBox, HTMLBoxView } from "@bokehjs/models/layouts/html_box"'

// See https://docs.bokeh.org/en/latest/docs/reference/core/properties.html
import * as p from "@bokehjs/core/properties"

// The view of the Bokeh extension/ HTML element
// Here you can define how to render the model as well as react to model changes or View events.
export class ChartJSView extends HTMLBoxView {
  declare model: ChartJS // declare added
  objectElement: any // Element

  override connect_signals(): void { // override added, etc.
    super.connect_signals()

    this.on_change(this.model.properties.object, () => {
      this.render();
    })
  }

  override render(): void {
    super.render()
    this.el.innerHTML = `<button type="button">${this.model.object}</button>`
    this.objectElement = this.el.firstElementChild

    this.objectElement.addEventListener("click", () => {this.model.clicks+=1;}, false)
  }
}

export namespace ChartJS {
  export type Attrs = p.AttrsOf<Props>
  export type Props = HTMLBox.Props & {
    object: p.Property<string>,
    clicks: p.Property<number>,
  }
}

export interface ChartJS extends ChartJS.Attrs { }

// The Bokeh .ts model corresponding to the Bokeh .py model
export class ChartJS extends HTMLBox {
  declare properties: ChartJS.Props

  constructor(attrs?: Partial<ChartJS.Attrs>) {
    super(attrs)
  }

  static override __module__ = "panel.models.chartjs"

  static {
    this.prototype.default_view = ChartJSView;

    this.define<ChartJS.Props>(({Int, String}) => ({
      object: [String, "Click Me!"],
      clicks: [Int, 0],
    }))
  }
}

```

| closed | 2024-10-15T16:48:03Z | 2024-10-21T09:14:18Z | https://github.com/holoviz/panel/issues/7402 | [] | LyndonAlcock | 4 |

jupyter/nbgrader | jupyter | 1,210 | Autograde cell with input or print statements | <!--

Thanks for helping to improve nbgrader!

If you are submitting a bug report or looking for support, please use the below

template so we can efficiently solve the problem.

If you are requesting a new feature, feel free to remove irrelevant pieces of

the issue template.

-->

1. Is it possible to autograde a cell that contains an input statement?

2. Is it possible to autograde a cell that contains a print statement?
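
For context, one approach that relies only on the Python standard library, not on any nbgrader feature, is to answer `input()` from a list and capture what gets printed, then assert on the captured text in a test cell. This is a sketch under that assumption; `greet` is a hypothetical student solution and `run_with_input` a hypothetical helper.

```python
import io
from contextlib import redirect_stdout
from unittest.mock import patch


# Hypothetical student solution: reads a name and prints a greeting.
def greet():
    name = input('Your name: ')
    print(f'Hello, {name}!')


def run_with_input(func, answers):
    """Run func with input() answered from `answers`; return what it printed."""
    buffer = io.StringIO()
    with patch('builtins.input', side_effect=answers), redirect_stdout(buffer):
        func()
    return buffer.getvalue()


# This is the kind of assertion an autograder test cell could contain.
output = run_with_input(greet, ['Ada'])
assert output == 'Hello, Ada!\n'
```

Because `input` is replaced by a mock, the prompt string itself is not written to the captured output.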

### Operating system

OS X 10.14.6

### `nbgrader --version`

Python version 3.7.3 (default, Mar 27 2019, 16:54:48)

[Clang 4.0.1 (tags/RELEASE_401/final)]

nbgrader version 0.6.0

### `jupyterhub --version` (if used with JupyterHub)

### `jupyter notebook --version`

6.0.0

### Expected behavior

### Actual behavior

### Steps to reproduce the behavior

| open | 2019-08-30T22:10:49Z | 2023-07-12T21:21:23Z | https://github.com/jupyter/nbgrader/issues/1210 | [

"enhancement"

] | hebertodelrio | 5 |

awesto/django-shop | django | 262 | django-shop is not python3 compatible | I'm trying to fix this in my branch python3. I got rid of classy-tags since they are obsolete and not ported to python3. All other libraries are already ported.

Then I made necessary changes to the source code and raised minimum django version to 1.5.1.

Now all tests (except one under python3 - the circular import is somehow not circular there) are passing under both versions. But it needs far more testing.

| closed | 2013-12-22T11:17:00Z | 2016-02-02T13:56:48Z | https://github.com/awesto/django-shop/issues/262 | [] | katomaso | 3 |

xonsh/xonsh | data-science | 5,157 | ast DeprecationWarnings with Python 3.12b1 | Since upgrading from Python 3.12a7 to 3.12b2 and using xonsh 0.14, I see a flurry of DeprecationWarnings when starting up xonsh (in my case on Windows):

```

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\ast.py:9: DeprecationWarning: ast.Bytes is deprecated and will be removed in Python 3.14; use ast.Constant instead

from ast import (

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\ast.py:40: DeprecationWarning: ast.Ellipsis is deprecated and will be removed in Python 3.14; use ast.Constant instead

from ast import Ellipsis as EllipsisNode

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\ast.py:41: DeprecationWarning: ast.NameConstant is deprecated and will be removed in Python 3.14; use ast.Constant instead

from ast import (

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\ast.py:41: DeprecationWarning: ast.Num is deprecated and will be removed in Python 3.14; use ast.Constant instead

from ast import (

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\ast.py:41: DeprecationWarning: ast.Str is deprecated and will be removed in Python 3.14; use ast.Constant instead

from ast import (

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\base.py:3550: DeprecationWarning: ast.Str is deprecated and will be removed in Python 3.14; use ast.Constant instead

p[0] = ast.Str(s=p1.value, lineno=p1.lineno, col_offset=p1.lexpos)

C:\Program Files\Python 3.12\Lib\ast.py:587: DeprecationWarning: Attribute s is deprecated and will be removed in Python 3.14; use value instead

return Constant(*args, **kwargs)

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\base.py:179: DeprecationWarning: Attribute s is deprecated and will be removed in Python 3.14; use value instead

return "*" in x.s

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\base.py:2420: DeprecationWarning: ast.NameConstant is deprecated and will be removed in Python 3.14; use ast.Constant instead

p[0] = ast.NameConstant(value=True, lineno=p1.lineno, col_offset=p1.lexpos)

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\base.py:2410: DeprecationWarning: ast.Ellipsis is deprecated and will be removed in Python 3.14; use ast.Constant instead

p[0] = ast.EllipsisNode(lineno=p1.lineno, col_offset=p1.lexpos)

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\base.py:2657: DeprecationWarning: ast.Num is deprecated and will be removed in Python 3.14; use ast.Constant instead

p[0] = ast.Num(

C:\Program Files\Python 3.12\Lib\ast.py:587: DeprecationWarning: Attribute n is deprecated and will be removed in Python 3.14; use value instead

return Constant(*args, **kwargs)

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\context_check.py:23: DeprecationWarning: ast.Num is deprecated and will be removed in Python 3.14; use ast.Constant instead

elif isinstance(x, (ast.Set, ast.Dict, ast.Num, ast.Str, ast.Bytes)):

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\context_check.py:23: DeprecationWarning: ast.Str is deprecated and will be removed in Python 3.14; use ast.Constant instead

elif isinstance(x, (ast.Set, ast.Dict, ast.Num, ast.Str, ast.Bytes)):

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\context_check.py:23: DeprecationWarning: ast.Bytes is deprecated and will be removed in Python 3.14; use ast.Constant instead

elif isinstance(x, (ast.Set, ast.Dict, ast.Num, ast.Str, ast.Bytes)):

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\context_check.py:45: DeprecationWarning: ast.NameConstant is deprecated and will be removed in Python 3.14; use ast.Constant instead

elif isinstance(x, ast.NameConstant):

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\base.py:3550: DeprecationWarning: ast.Str is deprecated and will be removed in Python 3.14; use ast.Constant instead

p[0] = ast.Str(s=p1.value, lineno=p1.lineno, col_offset=p1.lexpos)

C:\Program Files\Python 3.12\Lib\ast.py:587: DeprecationWarning: Attribute s is deprecated and will be removed in Python 3.14; use value instead

return Constant(*args, **kwargs)

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\base.py:179: DeprecationWarning: Attribute s is deprecated and will be removed in Python 3.14; use value instead

return "*" in x.s

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\base.py:3550: DeprecationWarning: ast.Str is deprecated and will be removed in Python 3.14; use ast.Constant instead

p[0] = ast.Str(s=p1.value, lineno=p1.lineno, col_offset=p1.lexpos)

C:\Program Files\Python 3.12\Lib\ast.py:587: DeprecationWarning: Attribute s is deprecated and will be removed in Python 3.14; use value instead

return Constant(*args, **kwargs)

C:\Users\jaraco\.local\pipx\venvs\xonsh\Lib\site-packages\xonsh\parsers\base.py:179: DeprecationWarning: Attribute s is deprecated and will be removed in Python 3.14; use value instead

return "*" in x.s

```
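The warnings above point at two kinds of change: replacing the removed node classes (`ast.Str`, `ast.Num`, `ast.Bytes`, `ast.NameConstant`, `ast.Ellipsis`) with `ast.Constant`, and reading `.value` instead of `.s` or `.n`. The following is a minimal sketch of both, plus a stopgap filter, assuming Python 3.8 or later; the helper names `is_string_literal` and `has_star` are hypothetical and are not xonsh functions.

```python
import ast
import warnings

# Stopgap: silence only the ast-related DeprecationWarnings.
# The pattern is matched against the start of the warning message, so this
# filter has to be installed before the module that triggers them is imported.
warnings.filterwarnings(
    "ignore",
    message=r"(ast\.\w+|Attribute \w+) is deprecated",
    category=DeprecationWarning,
)


def is_string_literal(node):
    """Replacement for `isinstance(node, ast.Str)` that avoids the deprecated class."""
    return isinstance(node, ast.Constant) and isinstance(node.value, str)


def has_star(node):
    """Same idea as the `"*" in x.s` check in the log, using `.value` instead of `.s`."""
    return is_string_literal(node) and "*" in node.value


node = ast.parse("'*.py'", mode="eval").body
print(is_string_literal(node), has_star(node))
```

The filter only hides the messages; the `ast.Constant` checks are what keep the code working once the deprecated names are removed.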

Hopefully these warnings can be suppressed or a fix added soon to streamline the experience for users on Python 3.12+. | closed | 2023-06-19T15:37:00Z | 2023-07-29T15:45:32Z | https://github.com/xonsh/xonsh/issues/5157 | [

"parser",

"py312"

] | jaraco | 4 |

strawberry-graphql/strawberry-django | graphql | 261 | relay depth limit | Hi,

I was reading a [blog post](https://blog.cloudflare.com/protecting-graphql-apis-from-malicious-queries/) from Cloudflare about malicious queries in GraphQL.

Is there a way to detect query depth so I can respond accordingly?

```gql

query {
  petition(ID: 123) {
    signers {
      nodes {
        petitions {
          nodes {
            signers {
              nodes {
                petitions {
                  nodes {
                    ...
                  }
                }
              }
            }
          }
        }
      }
    }
  }
}

```
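For illustration, the nesting depth of a query like the one above can be approximated by counting braces. This is only a rough sketch, not a GraphQL parser: `query_depth` and `MAX_DEPTH` are hypothetical names, fragments are not expanded, and input-object arguments would be counted as nesting. Strawberry's documentation also describes a `QueryDepthLimiter` extension, which may cover this case directly and is worth checking first.

```python
def query_depth(query: str) -> int:
    """Return the maximum brace-nesting depth of a GraphQL query string.

    Naive sketch: counts `{` / `}` nesting and skips braces inside string
    literals. It does not expand fragments or distinguish input objects
    from selection sets.
    """
    depth = 0
    max_depth = 0
    in_string = False
    prev = ""
    for ch in query:
        if ch == '"' and prev != "\\":
            in_string = not in_string
        elif not in_string:
            if ch == "{":
                depth += 1
                max_depth = max(max_depth, depth)
            elif ch == "}":
                depth -= 1
        prev = ch
    return max_depth


MAX_DEPTH = 5  # hypothetical limit, chosen only for this example

sample = (
    "query { petition(ID: 123) { signers { nodes "
    "{ petitions { nodes { id } } } } } }"
)
if query_depth(sample) > MAX_DEPTH:
    print("query rejected: too deep")
```

A check like this would run before execution, so an overly deep query can be answered with an error instead of being resolved.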

Thanks | closed | 2023-06-14T22:47:41Z | 2023-06-15T23:00:37Z | https://github.com/strawberry-graphql/strawberry-django/issues/261 | [] | tasiotas | 6 |

dynaconf/dynaconf | fastapi | 979 | [bug] when using a validator with a default for nested data it parses value via toml twice | **Describe the bug**

```py

from __future__ import annotations

from dynaconf import Dynaconf

from dynaconf import Validator

settings = Dynaconf()

settings.validators.register(

Validator("group.something_new", default=5),

)

settings.validators.validate()

assert settings.group.test_list == ["1", "2"], settings.group

```

Execution

```console

$ DYNACONF_GROUP__TEST_LIST="['1','2']" python app.py

```

expectation:

```py

settings.group.test_list == ["1", "2"]

```

current behavior

```console

$ DYNACONF_GROUP__TEST_LIST="['1','2']" python app.py

Traceback (most recent call last):

File "/home/rochacbruno/Projects/dynaconf/tests_functional/issues/905_item_duplication_in_list/app.py", line 21, in <module>

assert settings.group.test_list == ["1", "2"], settings.group

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

AssertionError: {'something_new': 5, 'TEST_LIST': [1, 2]}

```

## What is happening?

At the end of validators.validate.validate, the `Validator("group.something_new", default=5),` is executed and its default value is merged with the current data. During that process dynaconf calls `parse_conf_data` with `tomlfy=True`, which forces TOML to evaluate the existing data a second time, so the string items are converted to integers:

```py

In [1]: from dynaconf.utils.parse_conf import parse_conf_data

In [2]: data = {"TEST_LIST": ["1", "2"]}

In [3]: parse_conf_data(data, tomlfy=True)

Out[3]: {'TEST_LIST': [1, 2]}

In [4]: parse_conf_data(data, tomlfy=False)

Out[4]: {'TEST_LIST': ['1', '2']}

```

## Possible solutions:

Change `setdefault` method to use `tomlfy=False`

Change `parse_with_toml` to avoid doing that transformation.
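The effect of parsing twice can be reproduced without dynaconf. The sketch below is a toy model only: `ast.literal_eval` stands in for TOML evaluation, and `parse_value` and `parse_data` are hypothetical helpers, with the `reparse` flag playing the role that `tomlfy` plays above.

```python
import ast


def parse_value(value):
    """Stand-in for TOML evaluation: turn a string into a Python literal if possible."""
    if not isinstance(value, str):
        return value
    try:
        return ast.literal_eval(value)
    except (ValueError, SyntaxError):
        return value


def parse_data(data, reparse):
    """Walk nested data; re-evaluate string leaves only when `reparse` is True."""
    if isinstance(data, dict):
        return {key: parse_data(item, reparse) for key, item in data.items()}
    if isinstance(data, list):
        return [parse_data(item, reparse) for item in data]
    return parse_value(data) if reparse else data


# First pass: the environment variable string is parsed once, as intended.
loaded = {"TEST_LIST": parse_value("['1','2']")}

# Second pass with re-evaluation turns the string items into integers.
reparsed = parse_data(loaded, reparse=True)

# Second pass without re-evaluation leaves the data untouched.
untouched = parse_data(loaded, reparse=False)

print(loaded, reparsed, untouched)
```

In this model the first proposed solution corresponds to calling the merge step with `reparse=False`, so values that were already parsed are left alone.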

| closed | 2023-08-16T17:05:12Z | 2024-07-08T18:09:40Z | https://github.com/dynaconf/dynaconf/issues/979 | [

"bug",

"4.0-breaking-change"

] | rochacbruno | 1 |

FlareSolverr/FlareSolverr | api | 626 | [yggtorrent] (testing) Exception (yggtorrent): Error connecting to FlareSolverr | **Please use the search bar** at the top of the page and make sure you are not creating an already submitted issue.

Check closed issues as well, because your issue may have already been fixed.

### How to enable debug and html traces

[Follow the instructions from this wiki page](https://github.com/FlareSolverr/FlareSolverr/wiki/How-to-enable-debug-and-html-trace)

### Environment

* **FlareSolverr version**:

* **Last working FlareSolverr version**:

* **Operating system**:

* **Are you using Docker**: [yes/no]

* **FlareSolverr User-Agent (see log traces or / endpoint)**:

* **Are you using a proxy or VPN?** [yes/no]

* **Are you using Captcha Solver:** [yes/no]

* **If using captcha solver, which one:**

* **URL to test this issue:**

### Description

[List steps to reproduce the error and details on what happens and what you expected to happen]

### Logged Error Messages

[Place any relevant error messages you noticed from the logs here.]

[Make sure you attach the full logs with your personal information removed in case we need more information]

### Screenshots

[Place any screenshots of the issue here if needed]

| closed | 2022-12-20T12:15:19Z | 2022-12-21T01:00:42Z | https://github.com/FlareSolverr/FlareSolverr/issues/626 | [

"invalid"

] | o0-sicnarf-0o | 0 |

microsoft/qlib | machine-learning | 1,371 | Can provider_uri be other protocols like http | ## ❓ Questions and Help

We sincerely suggest that you carefully read the [documentation](http://qlib.readthedocs.io/) of our library as well as the official [paper](https://arxiv.org/abs/2009.11189). After that, if you are still puzzled, please describe the question clearly under this issue. | closed | 2022-11-21T06:56:35Z | 2023-02-24T12:02:34Z | https://github.com/microsoft/qlib/issues/1371 | [

"question",

"stale"

] | Vincent4zzzz | 1 |