repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

robinhood/faust | asyncio | 270 | Table Length Method Issue | When iterating through the table, we only display the active keys. But `len(table)` counts both active and inactive keys: https://github.com/robinhood/faust/blob/master/faust/stores/rocksdb.py#L306. It should count only the active keys, to be consistent with iterkey and iteritems. @ask let me know if this is a good change to make or if it could have any side effects. | closed | 2019-01-09T23:43:38Z | 2019-01-15T00:29:44Z | https://github.com/robinhood/faust/issues/270 | [] | allisonwang | 1 |

waditu/tushare | pandas | 1,028 | Free-float share capital in the daily indicators is negative | `{

"api_name":"daily_basic",

"token":"XXXXXXXXXXXX",

"params":{

"trade_date":"20190424"

},

"fields":["trade_date", "ts_code", "pe", "pe_ttm", "pb", "ps", "ps_ttm", "total_share", "float_share", "free_share"]

}`

When calling this, the free-float share capital for stock "600657" comes back negative: "-5719.7841"

Tushare ID: 261375 | closed | 2019-05-02T15:59:30Z | 2019-07-02T14:07:20Z | https://github.com/waditu/tushare/issues/1028 | [] | musicaudience | 3 |

ipython/ipython | data-science | 13,926 | capture_output does not respect trailing semicolon | By convention, IPython suppresses the return value of the last expression if it ends with a trailing semicolon. `%%capture` and `utils.capture.capture_output` don't respect this convention:

```python

In [1]: %%capture out

...: x = 1

...: 1;

In [2]: out.outputs[0]

Out[2]: 1

```

Related: #10227 #13841 (but it's about a different problem) | open | 2023-02-04T15:34:49Z | 2023-02-12T01:37:28Z | https://github.com/ipython/ipython/issues/13926 | [

"magics"

] | akhmerov | 1 |

MaartenGr/BERTopic | nlp | 1,626 | Documents and Topics are different lengths and cannot merge the topics | Hi there Maarten!

Thank you for all of the support you are offering the community!

I am running into the problem that my docs and topics have different lengths after I ran the model. I do not have the possibility to run the model again, but because of this issue, I cannot plot the topics over time or merge them.

Would it be possible to suggest how to proceed from here?

<img width="1218" alt="Screenshot 2023-11-10 at 14 35 04" src="https://github.com/MaartenGr/BERTopic/assets/28362483/6bcc9c34-4cb7-4e68-98fd-67281de2b126">

Thank you in advance!

| open | 2023-11-10T13:35:48Z | 2023-11-27T16:38:15Z | https://github.com/MaartenGr/BERTopic/issues/1626 | [] | daianacric95 | 8 |

nicodv/kmodes | scikit-learn | 104 | Kprototypes unexpected keyword argument 'n_jobs' | I notice that when trying to run:

`km = KPrototypes(n_clusters=20, init='Huang', n_init=1, n_jobs=-1, verbose=1)`

that I get an error that says:

`__init__() got an unexpected keyword argument 'n_jobs'`

I noticed that this should be fixed in the next release - when will that be? | closed | 2019-02-18T19:05:26Z | 2019-02-18T19:21:02Z | https://github.com/nicodv/kmodes/issues/104 | [] | klepikhina | 0 |

gradio-app/gradio | data-visualization | 9,969 | Add a setting that allows users to customize the tab placement (`Left`, `Center`, or `Right`). | - [x] I have searched to see if a similar issue already exists.

I think many would agree that it would be convenient to place some tabs in different locations. For example, the "Settings" tab could be located on the right, and the "INFO" tab could be on the left. The main tabs could be placed in the center or in another convenient location for users.

It would also be nice to have the ability to arrange tabs in a column instead of in a single line.

These changes could significantly improve some interfaces and make them more user-friendly.

| open | 2024-11-16T09:11:40Z | 2025-01-11T12:41:36Z | https://github.com/gradio-app/gradio/issues/9969 | [

"enhancement"

] | Bebra777228 | 1 |

Gerapy/Gerapy | django | 115 | Domain name registration | closed | 2019-10-06T15:28:51Z | 2019-10-06T15:32:31Z | https://github.com/Gerapy/Gerapy/issues/115 | [] | Germey | 0 | |

horovod/horovod | deep-learning | 3,589 | Can not run `RayExecutor` | Code:

```python

import ray
from horovod.ray import RayExecutor
import horovod.torch as hvd

# Start the Ray cluster or attach to an existing Ray cluster
ray.init()

num_workers = 1

# Start num_workers actors on the cluster
settings = RayExecutor.create_settings(timeout_s=30)
executor = RayExecutor(
    settings, num_workers=num_workers, cpus_per_worker=1, use_gpu=True)

# This will launch `num_workers` actors on the Ray Cluster.
executor.start()

# Using the stateless `run` method, a function can take in any args or kwargs
def simple_fn():
    hvd.init()
    print("hvd rank", hvd.rank())
    return hvd.rank()

# Execute the function on all workers at once
result = executor.run(simple_fn)
print(result)

executor.shutdown()

```

Result:

```python

2022-06-28 22:05:33,656 INFO services.py:1477 -- View the Ray dashboard at http://127.0.0.1:8266

(BaseHorovodWorker pid=14896) *** SIGSEGV received at time=1656453935 on cpu 0 ***

(BaseHorovodWorker pid=14896) PC: @ 0x7f80eff99fcc (unknown) horovod::common::(anonymous namespace)::BackgroundThreadLoop()

(BaseHorovodWorker pid=14896) @ 0x7f833f117980 4560 (unknown)

(BaseHorovodWorker pid=14896) @ 0x7f833cbffaa3 24 execute_native_thread_routine

(BaseHorovodWorker pid=14896) @ ... and at least 4 more frames

(BaseHorovodWorker pid=14896) [2022-06-28 22:05:35,709 E 14896 14934] logging.cc:325: *** SIGSEGV received at time=1656453935 on cpu 0 ***

(BaseHorovodWorker pid=14896) [2022-06-28 22:05:35,709 E 14896 14934] logging.cc:325: PC: @ 0x7f80eff99fcc (unknown) horovod::common::(anonymous namespace)::BackgroundThreadLoop()

(BaseHorovodWorker pid=14896) [2022-06-28 22:05:35,710 E 14896 14934] logging.cc:325: @ 0x7f833f117980 4560 (unknown)

(BaseHorovodWorker pid=14896) [2022-06-28 22:05:35,710 E 14896 14934] logging.cc:325: @ 0x7f833cbffaa3 24 execute_native_thread_routine

(BaseHorovodWorker pid=14896) [2022-06-28 22:05:35,710 E 14896 14934] logging.cc:325: @ ... and at least 4 more frames

(BaseHorovodWorker pid=14896) Fatal Python error: Segmentation fault

(BaseHorovodWorker pid=14896)

2022-06-28 22:05:35,839 WARNING worker.py:1728 -- A worker died or was killed while executing a task by an unexpected system error. To troubleshoot the problem, check the logs for the dead worker. RayTask ID: ffffffffffffffff192c4de9ae2ac15aafc2ab1801000000 Worker ID: 64a4d8791c417ee4cc7d4ea3473dcb4fa6303705700c01ddc8bfd3fa Node ID: 78571af15b4fb6c918265637b8618542b59fd3c7f493a81a0b6b7569 Worker IP address: 10.0.2.180 Worker port: 33549 Worker PID: 14896 Worker exit type: SYSTEM_ERROR Worker exit detail: Worker unexpectedly exits with a connection error code 2. End of file. There are some potential root causes. (1) The process is killed by SIGKILL by OOM killer due to high memory usage. (2) ray stop --force is called. (3) The worker is crashed unexpectedly due to SIGSEGV or other unexpected errors.

Traceback (most recent call last):

File "test_0628.py", line 25, in <module>

result = executor.run(simple_fn)

File "/home/ubuntu/anaconda3/envs/hd/lib/python3.8/site-packages/horovod/ray/runner.py", line 376, in run

return self._maybe_call_ray(self.adapter.run, **kwargs_)

File "/home/ubuntu/anaconda3/envs/hd/lib/python3.8/site-packages/horovod/ray/runner.py", line 421, in _maybe_call_ray

return driver_func(**kwargs)

File "/home/ubuntu/anaconda3/envs/hd/lib/python3.8/site-packages/horovod/ray/runner.py", line 599, in run

return ray.get(self._run_remote(fn=f))

File "/home/ubuntu/anaconda3/envs/hd/lib/python3.8/site-packages/ray/_private/client_mode_hook.py", line 105, in wrapper

return func(*args, **kwargs)

File "/home/ubuntu/anaconda3/envs/hd/lib/python3.8/site-packages/ray/_private/worker.py", line 2176, in get

raise value

ray.exceptions.RayActorError: The actor died unexpectedly before finishing this task.

class_name: BaseHorovodWorker

actor_id: 192c4de9ae2ac15aafc2ab1801000000

pid: 14896

namespace: acb48abc-7432-420d-923f-2d19a510800c

ip: 10.0.2.180

The actor is dead because its worker process has died. Worker exit type: SYSTEM_ERROR Worker exit detail: Worker unexpectedly exits with a connection error code 2. End of file. There are some potential root causes. (1) The process is killed by SIGKILL by OOM killer due to high memory usage. (2) ray stop --force is called. (3) The worker is crashed unexpectedly due to SIGSEGV or other unexpected errors.

None

Exception ignored in: <function ActorHandle.__del__ at 0x7f93cce2ef70>

Traceback (most recent call last):

File "/home/ubuntu/anaconda3/envs/hd/lib/python3.8/site-packages/ray/actor.py", line 1029, in __del__

AttributeError: 'NoneType' object has no attribute 'worker'

``` | closed | 2022-06-28T22:41:00Z | 2025-03-20T19:51:17Z | https://github.com/horovod/horovod/issues/3589 | [

"bug"

] | JiahaoYao | 1 |

SciTools/cartopy | matplotlib | 1,803 | Lat and lon labels in LambertConformal at edges not shown | ### Description

I want to plot data in the LambertConformal projection, 'cut out' the figure into the shape of the projection, and add axis labels. However, the labels that belong in the corners (minimum and maximum latitude and longitude) are not shown. See image below: I would like to also have the labels for 40N, 80N, 80W and 20E. These are included in the array that I give as input to the `xlocator` and `ylocator` of the `gridliner` object. How can I ensure that these labels in the corners are also shown?

#### Code to reproduce

```

import numpy as np

import matplotlib.pyplot as plt

import cartopy.crs as ccrs

import matplotlib.path as mpath

import matplotlib.ticker as mticker

bounds_lon = [-80,20]

bounds_lat = [40,80]

projection = ccrs.LambertConformal(central_longitude=np.mean(bounds_lon),central_latitude=np.mean(bounds_lat))

fig, ax = plt.subplots(1,1,figsize=(24,8),subplot_kw={'projection': projection})

ax.coastlines()

# Create nicely shaped boundaries (based on https://stackoverflow.com/questions/65687535/how-do-i-limit-the-longitude-extent-in-cartopys-lambertconformal-and-keep-the-c)

rect = mpath.Path([[bounds_lon[0], bounds_lat[0]],

[bounds_lon[1], bounds_lat[0]],

[bounds_lon[1], bounds_lat[1]],

[bounds_lon[0], bounds_lat[1]],

[bounds_lon[0], bounds_lat[0]],

]).interpolated(20)

proj_to_data = ccrs.PlateCarree()._as_mpl_transform(ax) - ax.transData

rect_in_target = proj_to_data.transform_path(rect)

ax.set_boundary(rect_in_target)

ax.set_extent([bounds_lon[0], bounds_lon[1], bounds_lat[0] - 15, bounds_lat[1]])

# Set axes labels

gl = ax.gridlines(crs=ccrs.PlateCarree(), x_inline=False, y_inline=False,

linewidth=2, color='gray', alpha=0.5, linestyle='--')

gl.xlocator = mticker.FixedLocator(np.arange(bounds_lon[0],bounds_lon[1]+0.1,10))

gl.ylocator = mticker.FixedLocator(np.arange(bounds_lat[0],bounds_lat[1]+0.1,10))

gl.left_labels = True

gl.bottom_labels = True

plt.show()

```

| closed | 2021-06-10T12:45:11Z | 2021-06-10T14:45:20Z | https://github.com/SciTools/cartopy/issues/1803 | [] | MiriamSterl | 1 |

plotly/dash-core-components | dash | 147 | Markdown (and probably SyntaxHighlighter) default `children` value needs update | With dcc 0.15.4 and:

> The children property of dash_core_components.Markdown and dash_core_components.SyntaxHighlighter now accepts an array of strings (previously it had to be a string). Now, if an array is provided, it is collapsed into a string with line breaks (see #134).

Now, creating a `dcc.Markdown()` component without any `children` value (an empty component that will later be filled by a callback) crashes in the React constructor:

```

// must be a string or an array of strings

if(typeof props.children !== 'string') {

props.children = props.children.join('\n');

}

```

Here `children` is not a string but the default value, `null`, so the `.join()` call crashes (at least that is what the browser console tells me): `TypeError: Cannot read property 'join' of null`.

I think that the default value for `dcc.Markdown.children` should be `""` or empty list.
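For illustration, the normalization could guard against a missing value before joining. This is only a sketch of the logic in Python, not the component's actual code; `normalize_children` is a hypothetical helper name, and treating a missing value as an empty document is an assumption.

```python
def normalize_children(children):
    """Collapse a Markdown `children` value into a single string.

    Hypothetical helper: follows the string-or-list-of-strings rule,
    but treats a missing value (None) as an empty document instead of
    failing on the join.
    """
    if children is None:
        return ""
    if isinstance(children, str):
        return children
    # A list of strings is collapsed into one string with line breaks.
    return "\n".join(children)
```

With a guard like this, an empty component would render as an empty string until a callback fills it.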

Or, if providing a `children` value is required, then Python should catch components without it and raise an exception. As it is, the error was really hard to find.

| closed | 2018-01-18T16:22:00Z | 2018-01-18T22:47:02Z | https://github.com/plotly/dash-core-components/issues/147 | [

"dash-type-bug"

] | radekwlsk | 2 |

Anjok07/ultimatevocalremovergui | pytorch | 683 | M1 Mac Heating Up while processing | Hi - I have an M1 Mac running Ventura 13.4 and I am facing a similar issue. The processing time is much longer than it is on a Windows PC (5-10 mins for an average 10MB song) and the Mac heats up significantly while UVR is processing.

| open | 2023-07-21T08:16:05Z | 2023-08-23T20:57:00Z | https://github.com/Anjok07/ultimatevocalremovergui/issues/683 | [] | rajeevbat | 8 |

microsoft/nni | machine-learning | 5,501 | Error occurs when pip install nni[SMAC] | **Describe the issue**:

When I run `pip install nni[SMAC]`, an error occurs. The error is as follows. I don't know how to solve it. Thanks for your answer!

```

Collecting ConfigSpaceNNI>=0.4.7.3

Downloading http://mirrors.aliyun.com/pypi/packages/35/c7/e3b8b1d662498a92fa2913d9c7c2134b4831820c8a13de962b987c0acb18/ConfigSpaceNNI-0.4.7.3.tar.gz (108 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 108.5/108.5 kB 2.6 MB/s eta 0:00:00

Preparing metadata (setup.py) ... error

error: subprocess-exited-with-error

× python setup.py egg_info did not run successfully.

│ exit code: 1

╰─> [40 lines of output]

/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/setuptools/_distutils/extension.py:134: UserWarning: Unknown Extension options: 'compiler_directives'

warnings.warn(msg)

/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/setuptools/dist.py:770: UserWarning: Usage of dash-separated 'description-file' will not be supported in future versions. Please use the underscore name 'description_file' instead

warnings.warn(

/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/setuptools/installer.py:27: SetuptoolsDeprecationWarning: setuptools.installer is deprecated. Requirements should be satisfied by a PEP 517 installer.

warnings.warn(

WARNING: The repository located at mirrors.aliyun.com is not a trusted or secure host and is being ignored. If this repository is available via HTTPS we recommend you use HTTPS instead, otherwise you may silence this warning and allow it anyway with '--trusted-host mirrors.aliyun.com'.

ERROR: Could not find a version that satisfies the requirement Cython (from versions: none)

ERROR: No matching distribution found for Cython

Traceback (most recent call last):

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/setuptools/installer.py", line 82, in fetch_build_egg

subprocess.check_call(cmd)

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/subprocess.py", line 373, in check_call

raise CalledProcessError(retcode, cmd)

subprocess.CalledProcessError: Command '['/home/lvqinyi/miniconda3/envs/sunze/bin/python', '-m', 'pip', '--disable-pip-version-check', 'wheel', '--no-deps', '-w', '/tmp/tmp8spnbdat', '--quiet', 'Cython']' returned non-zero exit status 1.

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "<string>", line 2, in <module>

File "<pip-setuptools-caller>", line 34, in <module>

File "/tmp/pip-install-tzzzixvz/configspacenni_126c6bee502a4fe7b51e4ef98928bc8c/setup.py", line 56, in <module>

setup(

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/setuptools/__init__.py", line 86, in setup

_install_setup_requires(attrs)

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/setuptools/__init__.py", line 80, in _install_setup_requires

dist.fetch_build_eggs(dist.setup_requires)

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/setuptools/dist.py", line 874, in fetch_build_eggs

resolved_dists = pkg_resources.working_set.resolve(

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/pkg_resources/__init__.py", line 789, in resolve

dist = best[req.key] = env.best_match(

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/pkg_resources/__init__.py", line 1075, in best_match

return self.obtain(req, installer)

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/pkg_resources/__init__.py", line 1087, in obtain

return installer(requirement)

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/setuptools/dist.py", line 944, in fetch_build_egg

return fetch_build_egg(self, req)

File "/home/lvqinyi/miniconda3/envs/sunze/lib/python3.9/site-packages/setuptools/installer.py", line 84, in fetch_build_egg

raise DistutilsError(str(e)) from e

distutils.errors.DistutilsError: Command '['/home/lvqinyi/miniconda3/envs/sunze/bin/python', '-m', 'pip', '--disable-pip-version-check', 'wheel', '--no-deps', '-w', '/tmp/tmp8spnbdat', '--quiet', 'Cython']' returned non-zero exit status 1.

[end of output]

note: This error originates from a subprocess, and is likely not a problem with pip.

error: metadata-generation-failed

× Encountered error while generating package metadata.

╰─> See above for output.

note: This is an issue with the package mentioned above, not pip.

hint: See above for details.

```

**Environment**:

- NNI version: 2.10

- Training service (local|remote|pai|aml|etc): local

- Client OS: Ubuntu 20.04

- Python version: 3.9.13

- PyTorch version: 1.12.0

- Is conda/virtualenv/venv used?: conda used

- Is running in Docker?: No

| closed | 2023-04-03T14:36:06Z | 2023-04-04T07:14:56Z | https://github.com/microsoft/nni/issues/5501 | [] | sunze992 | 0 |

tox-dev/tox | automation | 3,468 | Allow disabling plugins via CLI | ## Issue

```

# inside tox own codebase:

tox -e 3.13 -- -k test_provision_requires_ok

```

Note that version of python is irrelevant, as it does reproduce with all supported versions.

I also tried to modify the failing test to compensate for the missing pip, but it still failed with another error, now complaining about missing `hatchling`... and adding hatchling did not address this one. So we might have more than one bug here.

```

proj = tox_project({"tox.ini": "[tox]\nrequires=demo-pkg-inline\n[testenv]\npackage=skip\ndeps=pip"})

```

## Output of running tox

<details open>

<summary>Output of <code>tox -rvv</code></summary>

```console

❯ tox -e 3.10 -- -k test_provision_requires_ok

3.10: venv> /Users/ssbarnea/.config/mise/installs/python/3.13.0/bin/uv venv -p 3.10 --allow-existing /Users/ssbarnea/code/os/tox/.tox/3.10

3.10: install_dependency-groups> /Users/ssbarnea/.config/mise/installs/python/3.13.0/bin/uv pip install 'build[virtualenv]>=1.2.2.post1' 'covdefaults>=2.3' 'detect-test-pollution>=1.2' 'devpi-process>=1.0.2' 'diff-cover>=9.2' 'distlib>=0.3.9' 'flaky>=3.8.1' 'hatch-vcs>=0.4' 'hatchling>=1.26.3' 'psutil>=6.1' 'pytest-cov>=5' 'pytest-mock>=3.14' 'pytest-xdist>=3.6.1' 'pytest>=8.3.3' 're-assert>=1.1' 'setuptools>=75.1; python_version <= "3.8"' 'setuptools>=75.6; python_version > "3.8"' 'time-machine>=2.15; implementation_name != "pypy"' 'wheel>=0.45'

.pkg: _optional_hooks> python /Users/ssbarnea/.config/mise/installs/python/3.13.0/lib/python3.13/site-packages/pyproject_api/_backend.py True hatchling.build

.pkg: get_requires_for_build_wheel> python /Users/ssbarnea/.config/mise/installs/python/3.13.0/lib/python3.13/site-packages/pyproject_api/_backend.py True hatchling.build

.pkg: get_requires_for_build_editable> python /Users/ssbarnea/.config/mise/installs/python/3.13.0/lib/python3.13/site-packages/pyproject_api/_backend.py True hatchling.build

.pkg: build_wheel> python /Users/ssbarnea/.config/mise/installs/python/3.13.0/lib/python3.13/site-packages/pyproject_api/_backend.py True hatchling.build

3.10: install_package_deps> /Users/ssbarnea/.config/mise/installs/python/3.13.0/bin/uv pip install 'cachetools>=5.5' 'chardet>=5.2' 'colorama>=0.4.6' 'filelock>=3.16.1' 'packaging>=24.2' 'platformdirs>=4.3.6' 'pluggy>=1.5' 'pyproject-api>=1.8' 'tomli>=2.1; python_version < "3.11"' 'typing-extensions>=4.12.2; python_version < "3.11"' 'virtualenv>=20.27.1'

3.10: install_package> /Users/ssbarnea/.config/mise/installs/python/3.13.0/bin/uv pip install --reinstall --no-deps tox@/Users/ssbarnea/code/os/tox/.tox/.tmp/package/78/tox-4.23.3.dev17+g9152d396.d20241210-py3-none-any.whl

3.10: commands[0]> pytest -k test_provision_requires_ok

================================================================= test session starts =================================================================

platform darwin -- Python 3.10.16, pytest-8.3.4, pluggy-1.5.0

cachedir: .tox/3.10/.pytest_cache

rootdir: /Users/ssbarnea/code/os/tox

configfile: pyproject.toml

testpaths: tests

plugins: cov-6.0.0, flaky-3.8.1, time-machine-2.16.0, devpi-server-6.14.0, anyio-4.7.0, mock-3.14.0, xdist-3.6.1

collected 1824 items / 1823 deselected / 1 selected

tests/test_provision.py E [100%]

======================================================================= ERRORS ========================================================================

____________________________________________________ ERROR at setup of test_provision_requires_ok _____________________________________________________

tox_wheel = PosixPath('/private/var/folders/32/1xrphgzd4xv777syxjtkpdw80000gn/T/pytest-of-ssbarnea/pytest-105/dist0/tox-4.23.4-py3-none-any.whl')

tmp_path_factory = TempPathFactory(_given_basetemp=None, _trace=<pluggy._tracing.TagTracerSub object at 0x101e226b0>, _basetemp=PosixPath...rs/32/1xrphgzd4xv777syxjtkpdw80000gn/T/pytest-of-ssbarnea/pytest-105'), _retention_count=3, _retention_policy='failed')

@pytest.fixture(scope="session")

def tox_wheels(tox_wheel: Path, tmp_path_factory: TempPathFactory) -> list[Path]:

with elapsed("acquire dependencies for current tox"): # takes around 1.5s if already cached

result: list[Path] = [tox_wheel]

info = tmp_path_factory.mktemp("info")

with ZipFile(str(tox_wheel), "r") as zip_file:

zip_file.extractall(path=info)

dist_info = next((i for i in info.iterdir() if i.suffix == ".dist-info"), None)

if dist_info is None: # pragma: no cover

msg = f"no tox.dist-info inside {tox_wheel}"

raise RuntimeError(msg)

distribution = Distribution.at(dist_info)

wheel_cache = ROOT / ".wheel_cache" / f"{sys.version_info.major}.{sys.version_info.minor}"

wheel_cache.mkdir(parents=True, exist_ok=True)

cmd = [sys.executable, "-I", "-m", "pip", "download", "-d", str(wheel_cache)]

assert distribution.requires is not None

for req in distribution.requires:

requirement = Requirement(req)

if not requirement.extras: # pragma: no branch # we don't need to install any extras (tests/docs/etc)

cmd.append(req)

> check_call(cmd)

cmd = ['/Users/ssbarnea/code/os/tox/.tox/3.10/bin/python3', '-I', '-m', 'pip', 'download', '-d', ...]

dist_info = PosixPath('/private/var/folders/32/1xrphgzd4xv777syxjtkpdw80000gn/T/pytest-of-ssbarnea/pytest-105/info0/tox-4.23.4.dist-info')

distribution = <importlib.metadata.PathDistribution object at 0x1046ae8c0>

info = PosixPath('/private/var/folders/32/1xrphgzd4xv777syxjtkpdw80000gn/T/pytest-of-ssbarnea/pytest-105/info0')

req = "pytest>=8.3.3; extra == 'test'"

requirement = <Requirement('pytest>=8.3.3; extra == "test"')>

result = [PosixPath('/private/var/folders/32/1xrphgzd4xv777syxjtkpdw80000gn/T/pytest-of-ssbarnea/pytest-105/dist0/tox-4.23.4-py3-none-any.whl')]

tmp_path_factory = TempPathFactory(_given_basetemp=None, _trace=<pluggy._tracing.TagTracerSub object at 0x101e226b0>, _basetemp=PosixPath...rs/32/1xrphgzd4xv777syxjtkpdw80000gn/T/pytest-of-ssbarnea/pytest-105'), _retention_count=3, _retention_policy='failed')

tox_wheel = PosixPath('/private/var/folders/32/1xrphgzd4xv777syxjtkpdw80000gn/T/pytest-of-ssbarnea/pytest-105/dist0/tox-4.23.4-py3-none-any.whl')

wheel_cache = PosixPath('/Users/ssbarnea/code/os/tox/.wheel_cache/3.10')

zip_file = <zipfile.ZipFile [closed]>

tests/test_provision.py:96:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

popenargs = (['/Users/ssbarnea/code/os/tox/.tox/3.10/bin/python3', '-I', '-m', 'pip', 'download', '-d', ...],), kwargs = {}, retcode = 1

cmd = ['/Users/ssbarnea/code/os/tox/.tox/3.10/bin/python3', '-I', '-m', 'pip', 'download', '-d', ...]

def check_call(*popenargs, **kwargs):

"""Run command with arguments. Wait for command to complete. If

the exit code was zero then return, otherwise raise

CalledProcessError. The CalledProcessError object will have the

return code in the returncode attribute.

The arguments are the same as for the call function. Example:

check_call(["ls", "-l"])

"""

retcode = call(*popenargs, **kwargs)

if retcode:

cmd = kwargs.get("args")

if cmd is None:

cmd = popenargs[0]

> raise CalledProcessError(retcode, cmd)

E subprocess.CalledProcessError: Command '['/Users/ssbarnea/code/os/tox/.tox/3.10/bin/python3', '-I', '-m', 'pip', 'download', '-d', '/Users/ssbarnea/code/os/tox/.wheel_cache/3.10', 'cachetools>=5.5', 'chardet>=5.2', 'colorama>=0.4.6', 'filelock>=3.16.1', 'packaging>=24.2', 'platformdirs>=4.3.6', 'pluggy>=1.5', 'pyproject-api>=1.8', "tomli>=2.1; python_version < '3.11'", "typing-extensions>=4.12.2; python_version < '3.11'", 'virtualenv>=20.27.1', "devpi-process>=1.0.2; extra == 'test'", "pytest-mock>=3.14; extra == 'test'", "pytest>=8.3.3; extra == 'test'"]' returned non-zero exit status 1.

cmd = ['/Users/ssbarnea/code/os/tox/.tox/3.10/bin/python3', '-I', '-m', 'pip', 'download', '-d', ...]

kwargs = {}

popenargs = (['/Users/ssbarnea/code/os/tox/.tox/3.10/bin/python3', '-I', '-m', 'pip', 'download', '-d', ...],)

retcode = 1

/opt/homebrew/Cellar/python@3.10/3.10.16/Frameworks/Python.framework/Versions/3.10/lib/python3.10/subprocess.py:369: CalledProcessError

---------------------------------------------------------------- Captured stdout setup ----------------------------------------------------------------

done in 0.43799304217100143s acquire current tox wheel

done in 0.057830207981169224s acquire dependencies for current tox

---------------------------------------------------------------- Captured stderr setup ----------------------------------------------------------------

/Users/ssbarnea/code/os/tox/.tox/3.10/bin/python3: No module named pip

```

</details>

| open | 2024-12-10T17:31:01Z | 2025-01-21T19:22:31Z | https://github.com/tox-dev/tox/issues/3468 | [

"feature:new",

"help:wanted"

] | ssbarnea | 4 |

huggingface/diffusers | deep-learning | 10,467 | FLUX.1-dev FP8 Example Code: tmpxft_00000788_00000000-10_fp8_marlin.cudafe1.cpp | ### Describe the bug

Unable to run inference using Flux FP8

Logs

[FP8_logs.txt](https://github.com/user-attachments/files/18314458/FP8_logs.txt)

### Reproduction

https://huggingface.co/docs/diffusers/main/en/api/pipelines/flux#single-file-loading-for-the-fluxtransformer2dmodel

```

import torch

from diffusers import FluxTransformer2DModel, FluxPipeline

from transformers import T5EncoderModel, CLIPTextModel

from optimum.quanto import freeze, qfloat8, quantize

bfl_repo = "black-forest-labs/FLUX.1-dev"

dtype = torch.bfloat16

transformer = FluxTransformer2DModel.from_single_file("https://huggingface.co/Kijai/flux-fp8/blob/main/flux1-dev-fp8.safetensors", torch_dtype=dtype)

quantize(transformer, weights=qfloat8)

freeze(transformer)

text_encoder_2 = T5EncoderModel.from_pretrained(bfl_repo, subfolder="text_encoder_2", torch_dtype=dtype)

quantize(text_encoder_2, weights=qfloat8)

freeze(text_encoder_2)

pipe = FluxPipeline.from_pretrained(bfl_repo, transformer=None, text_encoder_2=None, torch_dtype=dtype)

pipe.transformer = transformer

pipe.text_encoder_2 = text_encoder_2

pipe.enable_model_cpu_offload()

prompt = "A cat holding a sign that says hello world"

image = pipe(

prompt,

guidance_scale=3.5,

output_type="pil",

num_inference_steps=20,

generator=torch.Generator("cpu").manual_seed(0)

).images[0]

image.save("flux-fp8-dev.png")

```

### Logs

```shell

Attached logs

```

### System Info

Windows 11

```

(venv) C:\ai1\diffuser_t2i>python --version

Python 3.10.11

(venv) C:\ai1\diffuser_t2i>echo %CUDA_PATH%

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v12.6

```

```

(venv) C:\ai1\diffuser_t2i>pip list

Package Version

------------------ ------------

accelerate 1.2.1

aiofiles 23.2.1

annotated-types 0.7.0

anyio 4.7.0

certifi 2024.12.14

charset-normalizer 3.4.1

click 8.1.8

colorama 0.4.6

diffusers 0.33.0.dev0

einops 0.8.0

exceptiongroup 1.2.2

fastapi 0.115.6

ffmpy 0.5.0

filelock 3.16.1

fsspec 2024.12.0

gguf 0.13.0

gradio 5.9.1

gradio_client 1.5.2

h11 0.14.0

httpcore 1.0.7

httpx 0.28.1

huggingface-hub 0.25.2

idna 3.10

imageio 2.36.1

imageio-ffmpeg 0.5.1

importlib_metadata 8.5.0

Jinja2 3.1.5

markdown-it-py 3.0.0

MarkupSafe 2.1.5

mdurl 0.1.2

mpmath 1.3.0

networkx 3.4.2

ninja 1.11.1.3

numpy 2.2.1

opencv-python 4.10.0.84

optimum-quanto 0.2.6

orjson 3.10.13

packaging 24.2

pandas 2.2.3

pillow 11.1.0

pip 23.0.1

protobuf 5.29.2

psutil 6.1.1

pydantic 2.10.4

pydantic_core 2.27.2

pydub 0.25.1

Pygments 2.18.0

python-dateutil 2.9.0.post0

python-multipart 0.0.20

pytz 2024.2

PyYAML 6.0.2

regex 2024.11.6

requests 2.32.3

rich 13.9.4

ruff 0.8.6

safehttpx 0.1.6

safetensors 0.5.0

semantic-version 2.10.0

sentencepiece 0.2.0

setuptools 65.5.0

shellingham 1.5.4

six 1.17.0

sniffio 1.3.1

starlette 0.41.3

sympy 1.13.1

tokenizers 0.21.0

tomlkit 0.13.2

torch 2.5.1+cu124

torchvision 0.20.1+cu124

tqdm 4.67.1

transformers 4.47.1

typer 0.15.1

typing_extensions 4.12.2

tzdata 2024.2

urllib3 2.3.0

uvicorn 0.34.0

websockets 14.1

wheel 0.45.1

zipp 3.21.0

```

### Who can help?

You tell me who will help me resolve this issue :) | closed | 2025-01-06T06:42:14Z | 2025-01-06T16:58:21Z | https://github.com/huggingface/diffusers/issues/10467 | [

"bug"

] | nitinmukesh | 4 |

geex-arts/django-jet | django | 349 | __init__() missing 1 required positional argument: 'sortable_by' | I found a bug.

In jet/utils.py line 223

==============

this is true.

| open | 2018-08-28T06:37:28Z | 2019-08-13T14:51:47Z | https://github.com/geex-arts/django-jet/issues/349 | [] | charloai | 16 |

stanfordnlp/stanza | nlp | 1,229 | Find POS of a sentence that is enclosed between a delimiter | I need to find the POS of certain words in a sentence.

For example: sentence = "For all models ~ITE+CKAD~ obtains the highest fluency of 1.68 and ~ITE+DD~ has the highest Knowledge Relevance of 0.56 and highest Context Coherence of 0.90"

In the sentence above, I need the POS of the words immediately before and after each span enclosed by the ~ delimiter, i.e. the POS of the bolded words in the same sentence:

sentence = "For all **models** ~ITE+CKAD~ **obtains** the highest fluency of 1.68 **and** ~ITE+DD~ **has** the highest Knowledge Relevance of 0.56 and highest Context Coherence of 0.90"

The difficulty in finding the POS comes from the special characters inside these words.

When I pass the sentence into the model, ~ITE+CKAD~ is split into ITE, +, and CKAD, which makes it hard to track the POS of the adjacent words.

What I want is to make the model treat whitespace-separated strings as tokens, meaning the model should consider ~ITE+CKAD~ a single token.

The end goal is to find the POS of the words before and after the text that is enclosed by the ~ delimiter.

Any better approach is also appreciated.

Note: the special character is not always +; it can also be, for example, - or _.

Thank you for your help in advance.
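
One possible approach, sketched under assumptions: Stanza's `tokenize_pretokenized=True` pipeline option is meant to make the pipeline accept whitespace-split tokens as given, so `~ITE+CKAD~` should stay in one piece. The helper names `neighbour_pos` and `tag_with_stanza` are hypothetical, and the sketch assumes the English models have already been downloaded.

```python
def neighbour_pos(tagged, delim="~"):
    """Return (marked_token, pos_before, pos_after) for each delimited token.

    `tagged` is a list of (text, pos) pairs, one per whitespace-separated token.
    Neighbours that do not exist (start or end of sentence) are reported as None.
    """
    results = []
    for i, (text, _) in enumerate(tagged):
        # A marked token starts and ends with the delimiter, e.g. "~ITE+CKAD~".
        if len(text) >= 2 and text.startswith(delim) and text.endswith(delim):
            before = tagged[i - 1][1] if i > 0 else None
            after = tagged[i + 1][1] if i + 1 < len(tagged) else None
            results.append((text, before, after))
    return results


def tag_with_stanza(sentence):
    """Tag a sentence with Stanza without re-splitting whitespace tokens.

    Assumption: models were fetched beforehand with stanza.download("en").
    """
    import stanza  # imported lazily so neighbour_pos works without Stanza

    nlp = stanza.Pipeline(lang="en", processors="tokenize,pos",
                          tokenize_pretokenized=True)
    doc = nlp([sentence.split()])  # a single pre-tokenized sentence
    return [(word.text, word.upos) for sent in doc.sentences for word in sent.words]
```

Calling `neighbour_pos(tag_with_stanza(sentence))` would then give the POS on either side of every `~...~` token, whatever special characters it contains.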

| closed | 2023-03-30T06:35:51Z | 2023-04-28T00:19:30Z | https://github.com/stanfordnlp/stanza/issues/1229 | [

"question"

] | kushal-h | 2 |

ageitgey/face_recognition | python | 1,411 | How to not draw boxes around unknown faces | * face_recognition version: 1.3.0

* Python version: 3.9.7

* Operating System: Big Sur MacOs

### Description

I am using the KNN classifier contained inside the file named "face_recognition_knn.py"

Inside the "train" folder, I've included and trained only one person.

Inside the "test" folder, I have one image containing the trained subject among multiple people.

The computer draws a box around everyone's face, including unknown people.

How do I tell the computer not to draw a box around unknown people?

Sorry. Noob question here.

### What I Did

This is literally the exact same code in the original file "face_recognition_knn.py"

```

import math
from sklearn import neighbors
import os
import os.path
import pickle
from PIL import Image, ImageDraw
import face_recognition
from face_recognition.face_recognition_cli import image_files_in_folder

ALLOWED_EXTENSIONS = {'png', 'jpg', 'jpeg'}


def train(train_dir, model_save_path=None, n_neighbors=None, knn_algo='ball_tree', verbose=False):
    """
    Trains a k-nearest neighbors classifier for face recognition.

    :param train_dir: directory that contains a sub-directory for each known person, with its name.

     (View in source code to see train_dir example tree structure)

     Structure:
        <train_dir>/
        ├── <person1>/
        │   ├── <somename1>.jpeg
        │   ├── <somename2>.jpeg
        │   ├── ...
        ├── <person2>/
        │   ├── <somename1>.jpeg
        │   └── <somename2>.jpeg
        └── ...

    :param model_save_path: (optional) path to save model on disk
    :param n_neighbors: (optional) number of neighbors to weigh in classification. Chosen automatically if not specified
    :param knn_algo: (optional) underlying data structure to support knn.default is ball_tree
    :param verbose: verbosity of training
    :return: returns knn classifier that was trained on the given data.
    """
    X = []
    y = []

    # Loop through each person in the training set
    for class_dir in os.listdir(train_dir):
        if not os.path.isdir(os.path.join(train_dir, class_dir)):
            continue

        # Loop through each training image for the current person
        for img_path in image_files_in_folder(os.path.join(train_dir, class_dir)):
            image = face_recognition.load_image_file(img_path)
            face_bounding_boxes = face_recognition.face_locations(image)

            if len(face_bounding_boxes) != 1:
                # If there are no people (or too many people) in a training image, skip the image.
                if verbose:
                    print("Image {} not suitable for training: {}".format(img_path, "Didn't find a face" if len(face_bounding_boxes) < 1 else "Found more than one face"))
            else:
                # Add face encoding for current image to the training set
                X.append(face_recognition.face_encodings(image, known_face_locations=face_bounding_boxes)[0])
                y.append(class_dir)

    # Determine how many neighbors to use for weighting in the KNN classifier
    if n_neighbors is None:
        n_neighbors = int(round(math.sqrt(len(X))))
        if verbose:
            print("Chose n_neighbors automatically:", n_neighbors)

    # Create and train the KNN classifier
    knn_clf = neighbors.KNeighborsClassifier(n_neighbors=n_neighbors, algorithm=knn_algo, weights='distance')
    knn_clf.fit(X, y)

    # Save the trained KNN classifier
    if model_save_path is not None:
        with open(model_save_path, 'wb') as f:
            pickle.dump(knn_clf, f)

    return knn_clf


def predict(X_img_path, knn_clf=None, model_path=None, distance_threshold=0.6):
    """
    Recognizes faces in given image using a trained KNN classifier

    :param X_img_path: path to image to be recognized
    :param knn_clf: (optional) a knn classifier object. if not specified, model_save_path must be specified.
    :param model_path: (optional) path to a pickled knn classifier. if not specified, model_save_path must be knn_clf.
    :param distance_threshold: (optional) distance threshold for face classification. the larger it is, the more chance
           of mis-classifying an unknown person as a known one.
    :return: a list of names and face locations for the recognized faces in the image: [(name, bounding box), ...].
        For faces of unrecognized persons, the name 'unknown' will be returned.
    """
    if not os.path.isfile(X_img_path) or os.path.splitext(X_img_path)[1][1:] not in ALLOWED_EXTENSIONS:
        raise Exception("Invalid image path: {}".format(X_img_path))

    if knn_clf is None and model_path is None:
        raise Exception("Must supply knn classifier either thourgh knn_clf or model_path")

    # Load a trained KNN model (if one was passed in)
    if knn_clf is None:
        with open(model_path, 'rb') as f:
            knn_clf = pickle.load(f)

    # Load image file and find face locations
    X_img = face_recognition.load_image_file(X_img_path)
    X_face_locations = face_recognition.face_locations(X_img)

    # If no faces are found in the image, return an empty result.
    if len(X_face_locations) == 0:
        return []

    # Find encodings for faces in the test iamge
    faces_encodings = face_recognition.face_encodings(X_img, known_face_locations=X_face_locations)

    # Use the KNN model to find the best matches for the test face
    closest_distances = knn_clf.kneighbors(faces_encodings, n_neighbors=1)
    are_matches = [closest_distances[0][i][0] <= distance_threshold for i in range(len(X_face_locations))]

    # Predict classes and remove classifications that aren't within the threshold
    return [(pred, loc) if rec else ("unknown", loc) for pred, loc, rec in zip(knn_clf.predict(faces_encodings), X_face_locations, are_matches)]


def show_prediction_labels_on_image(img_path, predictions):
    """
    Shows the face recognition results visually.

    :param img_path: path to image to be recognized
    :param predictions: results of the predict function
    :return:
    """
    pil_image = Image.open(img_path).convert("RGB")
    draw = ImageDraw.Draw(pil_image)

    for name, (top, right, bottom, left) in predictions:
        # Draw a box around the face using the Pillow module
        draw.rectangle(((left, top), (right, bottom)), outline=(0, 0, 255))

        # There's a bug in Pillow where it blows up with non-UTF-8 text
        # when using the default bitmap font
        name = name.encode("UTF-8")

        # Draw a label with a name below the face
        text_width, text_height = draw.textsize(name)
        draw.rectangle(((left, bottom - text_height - 10), (right, bottom)), fill=(0, 0, 255), outline=(0, 0, 255))
        draw.text((left + 6, bottom - text_height - 5), name, fill=(255, 255, 255, 255))

    # Remove the drawing library from memory as per the Pillow docs
    del draw

    # Display the resulting image
    pil_image.show()


if __name__ == "__main__":
    # STEP 1: Train the KNN classifier and save it to disk
    # Once the model is trained and saved, you can skip this step next time.
    print("Training KNN classifier...")
    classifier = train("knn_examples/train", model_save_path="trained_knn_model.clf", n_neighbors=2)
    print("Training complete!")

    # STEP 2: Using the trained classifier, make predictions for unknown images
    for image_file in os.listdir("knn_examples/test"):
        full_file_path = os.path.join("knn_examples/test", image_file)

        print("Looking for faces in {}".format(image_file))

        # Find all people in the image using a trained classifier model
        # Note: You can pass in either a classifier file name or a classifier model instance
        predictions = predict(full_file_path, model_path="trained_knn_model.clf")

        # Print results on the console
        for name, (top, right, bottom, left) in predictions:
            print("- Found {} at ({}, {})".format(name, left, top))

        # Display results overlaid on an image
        show_prediction_labels_on_image(os.path.join("knn_examples/test", image_file), predictions)

```
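
A minimal sketch of one way to do this, assuming the `predict` function above is left unchanged: because it labels unmatched faces with the name `"unknown"`, the predictions can be filtered before they reach `show_prediction_labels_on_image`. The helper name `drop_unknown` is hypothetical, and the bounding boxes in the usage lines are made up.

```python
def drop_unknown(predictions, unknown_label="unknown"):
    """Keep only predictions whose name is not the 'unknown' placeholder.

    `predictions` has the shape returned by predict():
    [(name, (top, right, bottom, left)), ...]
    """
    return [(name, box) for name, box in predictions if name != unknown_label]


# Usage with made-up bounding boxes (top, right, bottom, left):
predictions = [("alice", (10, 60, 50, 20)), ("unknown", (15, 160, 55, 120))]
known_only = drop_unknown(predictions)
```

In the main loop, passing `drop_unknown(predictions)` instead of `predictions` to `show_prediction_labels_on_image` should leave unknown faces without a box or label.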

| closed | 2022-02-16T19:09:19Z | 2022-02-17T18:50:53Z | https://github.com/ageitgey/face_recognition/issues/1411 | [] | peoplecure | 1 |

betodealmeida/shillelagh | sqlalchemy | 190 | Unable to load adapter gsheetsapi | Thank you for this very useful library. I have a simple streamlit python app in which shillelagh fails because gsheetsapi adapter is not getting loaded. There is probably some similarity to a past issue https://github.com/betodealmeida/shillelagh/issues/112 so I set the version to 1.0.1, but without success.

requirements.txt

```

streamlit

shillelagh[gsheetaspi]==1.0.1

pandas

```

Error message:

```

File "/app/gsheetdb_tutorial/math_db_ui.py", line 52, in establish_connection

"gsheetsapi": {"service_account_file": service_account_file}})

File "/home/appuser/venv/lib/python3.7/site-packages/shillelagh/backends/apsw/db.py", line 517, in connect

adapter_kwargs = {mapping[k]: v for k, v in adapter_kwargs.items()}

File "/home/appuser/venv/lib/python3.7/site-packages/shillelagh/backends/apsw/db.py", line 517, in <dictcomp>

adapter_kwargs = {mapping[k]: v for k, v in adapter_kwargs.items()}

KeyError: 'gsheetsapi'

```
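
One thing that may be worth checking: the extra in `requirements.txt` above is spelled `gsheetaspi`, while the key in the `KeyError` is `gsheetsapi`. The sketch below is only a quick way to spot such a mismatch. It assumes the intended extra is `gsheetsapi`; the helper `requirement_extras` is hypothetical and only handles the simple `name[extra,...]==version` form.

```python
import re


def requirement_extras(line):
    """Return the set of extras named in a 'pkg[extra1,extra2]==x.y' line."""
    match = re.match(r"^\s*[A-Za-z0-9_.-]+\[([^\]]*)\]", line)
    if not match:
        return set()  # no extras section on this requirement
    return {extra.strip() for extra in match.group(1).split(",") if extra.strip()}


# The requirement line exactly as it appears in the report above.
extras = requirement_extras("shillelagh[gsheetaspi]==1.0.1")
has_adapter_extra = "gsheetsapi" in extras
```

If `has_adapter_extra` is false, the adapter's optional dependencies may never have been installed, which could explain why the adapter is not registered.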

Can you please help? | closed | 2022-03-06T10:58:03Z | 2022-03-08T06:05:43Z | https://github.com/betodealmeida/shillelagh/issues/190 | [] | code-anjali | 4 |

pytest-dev/pytest-qt | pytest | 487 | Access violation in `waitSignal` with temporary object | This test causes an access violation.

```python

from PySide6.QtCore import QObject, Signal

class Signaller(QObject):

signal = Signal()

def test_should_pass_but_gives_access_violation(qtbot):

qtbot.waitSignal(Signaller().signal, timeout=1)

```

Windows 10 21H2

pytest==7.3.1

pytest-qt==4.2.0

PySide6-Essentials==6.5.0 | closed | 2023-05-14T17:17:37Z | 2023-05-16T20:15:50Z | https://github.com/pytest-dev/pytest-qt/issues/487 | [] | bersbersbers | 15 |

streamlit/streamlit | deep-learning | 10,756 | Custom components unmount and reload on switch_page | ### Checklist

- [x] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [x] I added a very descriptive title to this issue.

- [x] I have provided sufficient information below to help reproduce this issue.

### Summary

Hi! I have found that when I change pages with `st.switch_page` while a custom component is loaded, the component is unmounted and remounted, which looks glitchy. As a result, the state of my component is very inconsistent.

I have trouble keeping the component state aligned with the session. I would like to know whether a better implementation is planned, with more native support for custom components in Streamlit, so that the React component is mounted only once per user session.

I have an example with my custom navigation component in my repo. If you run the demo with the "Use native way" checkbox enabled, you can see the glitchy unmount:

https://github.com/quiradev/streamlit-plugins/tree/main/examples/components/navbar/native_streamlit_multipage

Thanks in advance.

### Reproducible Code Example

```Python

import sys

import streamlit as st

st.set_page_config(layout="wide")

if "logged_in" not in st.session_state:
    st.session_state.logged_in = True

USER = "admin"
PASSWORD = "admin"

positions = ["top", "under", "side"]


def my_sidebar():
    with st.sidebar:
        st.write("Logged in:", st.session_state.logged_in)
        position_mode = st.radio(
            "Navbar position mode",
            positions,
            index=positions.index(st.session_state.get("position_mode", "side")),
            key="position_mode_input",
        )
        sticky_nav = st.checkbox(
            "Sticky navbar",
            value=st.session_state.get("sticky_nav", True),
            key="sticky_nav_input"
        )
        native_way = st.checkbox(
            "Use native way",
            value=st.session_state.get("native_way", True),
            key="native_way_input"
        )
        st.session_state["position_mode"] = position_mode
        st.session_state["sticky_nav"] = sticky_nav
        st.session_state["native_way"] = native_way


def my_heading():
    st.title("Streamlit Multi-Page App")
    st.subheader("This is a multi-page app with a native Streamlit navbar.")
    st.markdown("> But only vizualize well with navbar on `top` position")


def login():
    _, col, _ = st.columns([2, 6, 2])
    with col:
        with st.form(key="login_form"):
            user = st.text_input("Username")
            password = st.text_input("Password", type="password")
            submitted = st.form_submit_button("Submit")

        with st.expander("Psst! Here's the login info"):
            st.write(f"Username and Password is:")
            st.markdown(f"""
                ```bash
                {USER}
                ```
                """)

        if submitted:
            if user == USER and password == PASSWORD:
                st.session_state.logged_in = True
                st_switch_home()
            else:
                st.toast("Invalid username or password", icon="❌")


def account():
    st.write("Account page")
    st.caption("This is a protected page. Only logged in users can view this.")


def settings():
    st.button("Theme")


def logout():
    st.session_state.logged_in = False
    st.session_state.app_id = None
    st.session_state.active_app_id = None
    st.rerun()


st.logo(
    image="https://streamlit.io/images/brand/streamlit-logo-primary-colormark-darktext.svg",
    icon_image="https://streamlit.io/images/brand/streamlit-mark-color.png"
)

dashboard = st.Page("dashboard.py", title="Dashboard", icon=":material/dashboard:", default=True, url_path="dashboard")
login_page = st.Page(login, title="Log in", icon=":material/login:", url_path="login")
account_page = st.Page(account, title="Account", icon=":material/account_circle:", url_path="account")
settings_page = st.Page(settings, title="Settings", icon=":material/settings:", url_path="settings")
bugs = st.Page("reports/bugs.py", title="Bug reports", icon=":material/bug_report:", url_path="bugs")
bugs2 = st.Page("reports/bugs.py", title="Bug reports2", icon=":material/bug_report:", url_path="bugs2")
bugs3 = st.Page("reports/bugs.py", title="Bug reports3", icon=":material/bug_report:", url_path="bugs3")
alerts = st.Page("reports/alerts.py", title="System alerts", icon=":material/notification_important:", url_path="alerts")
search = st.Page("tools/search.py", title="Search", icon=":material/search:", url_path="search")
history = st.Page("tools/history.py", title="History", icon=":material/history:", url_path="history")
logout_page = st.Page(logout, title="Log out", icon=":material/logout:", url_path="logout")

# HERE IS THE CHANGE
from streamlit_plugins.components.navbar import st_navbar, build_menu_from_st_pages, NavbarPositionType, st_navigation, st_switch_home

my_sidebar()

position_mode: NavbarPositionType = st.session_state.get("position_mode", "top")
sticky_nav = st.session_state.get("sticky_nav", True)
native_way = st.session_state.get("native_way", False)

if st.session_state.logged_in:
    if position_mode == "top":
        my_heading()

page = st_navigation(
    {
        "": [dashboard],
        "Reports": [alerts],
        "Tools": [search, history, bugs, bugs2, bugs3]
    },
    section_info={
        "Reports": {"icon": ":material/assessment:"},
        "Tools": {"icon": ":material/extension:"}
    },
    position_mode=position_mode if st.session_state.logged_in else "hidden", sticky_nav=sticky_nav,
    login_page=login_page, logout_page=logout_page,
    account_page=account_page,
    settings_page=settings_page,
    native_way=native_way
)

if st.session_state.logged_in:
    # SOME TEXT ABOVE THE NAVBAR
    page.run()
else:
    login_page._can_be_called = True
    login_page.run()
```

### Steps To Reproduce

Use a custom component together with `st.navigation` and change pages. The custom component is removed and re-created, whereas other Streamlit components that are common to multiple pages are preserved because their HTML does not change.

### Expected Behavior

Custom components should stay mounted instead of being removed or unmounted.

### Current Behavior

_No response_

### Is this a regression?

- [ ] Yes, this used to work in a previous version.

### Debug info

- Streamlit version: 1.42.0

- Python version: 3.11

- Operating System: Windows

- Browser: Chrome

### Additional Information

| open | 2025-03-12T20:31:14Z | 2025-03-18T20:30:58Z | https://github.com/streamlit/streamlit/issues/10756 | [

"type:enhancement",

"status:awaiting-user-response"

] | vquilon | 4 |

FactoryBoy/factory_boy | django | 462 | The docs mention a ``_next_sequence`` attribute which doesn't exist | There is no such property as `_next_sequence` (http://factoryboy.readthedocs.io/en/latest/reference.html#factory.Factory.reset_sequence)

```

type object 'UserFactory' has no attribute '_next_sequence'

```

Am I missing something here?

EDIT: Ignore my original issue, however, the second part of it (this property missing) still stands | closed | 2018-03-21T07:27:08Z | 2018-10-15T05:42:18Z | https://github.com/FactoryBoy/factory_boy/issues/462 | [

"Doc"

] | fgblomqvist | 2 |

QingdaoU/OnlineJudge | django | 38 | Is there a feature for deleting a group? | closed | 2016-05-10T07:01:23Z | 2016-05-10T11:37:56Z | https://github.com/QingdaoU/OnlineJudge/issues/38 | [] | Ir1d | 1 | |

polakowo/vectorbt | data-visualization | 189 | ReferenceError: underlying object has vanished | Hi,

I am having a weird problem where I get this error the first time I run vbt, but it works on the second attempt:

`pf = vbt.Portfolio.from_signals(df.close, df.entries, df.exits)`

Error:

```

Traceback (most recent call last):

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\caching.py", line 479, in save

data_name = overloads[key]

KeyError: ((UniTuple(int64 x 2), array(float64, 2d, C), array(int32, 1d, C), readonly array(float64, 1d, C), array(int32, 2d, C), array(float64, 1d, C), array(float64, 1d, C), array(float64, 0d, C), array(float64, 0d, C), array(int32, 0d, C), array(int32, 0d, C), array(float64, 0d, C), array(int32, 0d, C), array(float64, 0d, C), array(float64, 0d, C), array(float64, 0d, C), array(float64, 0d, C), array(bool, 0d, C), array(bool, 0d, C), array(bool, 0d, C), array(bool, 0d, C), array(bool, 0d, C), array(int32, 0d, C), array(bool, 0d, C), array(float64, 0d, C), array(float64, 0d, C), array(float64, 0d, C), array(float64, 0d, C), array(float64, 0d, C), array(bool, 0d, C), array(float64, 0d, C), array(int32, 0d, C), array(int32, 0d, C), array(int32, 0d, C), array(int32, 0d, C), array(int32, 0d, C), type(CPUDispatcher(<function no_adjust_sl_func_nb at 0x000001D06ECAEE58>)), Tuple(), type(CPUDispatcher(<function no_adjust_tp_func_nb at 0x000001D06ECC7708>)), Tuple(), bool, bool, bool, bool, int64, int64, bool), ('x86_64-pc-windows-msvc', 'skylake', '+64bit,+adx,+aes,+avx,+avx2,-avx512bf16,-avx512bitalg,-avx512bw,-avx512cd,-avx512dq,-avx512er,-avx512f,-avx512ifma,-avx512pf,-avx512vbmi,-avx512vbmi2,-avx512vl,-avx512vnni,-avx512vpopcntdq,+bmi,+bmi2,-cldemote,+clflushopt,-clwb,-clzero,+cmov,+cx16,+cx8,-enqcmd,+f16c,+fma,-fma4,+fsgsbase,+fxsr,-gfni,+invpcid,-lwp,+lzcnt,+mmx,+movbe,-movdir64b,-movdiri,-mwaitx,+pclmul,-pconfig,-pku,+popcnt,-prefetchwt1,+prfchw,-ptwrite,-rdpid,+rdrnd,+rdseed,+rtm,+sahf,-sgx,-sha,-shstk,+sse,+sse2,+sse3,+sse4.1,+sse4.2,-sse4a,+ssse3,-tbm,-vaes,-vpclmulqdq,-waitpkg,-wbnoinvd,-xop,+xsave,+xsavec,+xsaveopt,+xsaves'), ('0f0f2548aca57509e5e0bbe5e6f34487985e9f37bae572ebbe794f9d6f5c3bbe', 'e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855'))

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "D:\Anaconda3\envs\edge\lib\site-packages\IPython\core\interactiveshell.py", line 3441, in run_code

exec(code_obj, self.user_global_ns, self.user_ns)

File "<ipython-input-3-a3d49dcdedf1>", line 1, in <module>

pf = vbt.Portfolio.from_signals(df.close, df.entries, df.exits) # Backtest in sample entries and exits

File "D:\Anaconda3\envs\edge\lib\site-packages\vectorbt\portfolio\base.py", line 1295, in from_signals

close.ndim == 2

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\dispatcher.py", line 439, in _compile_for_args

raise e

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\dispatcher.py", line 372, in _compile_for_args

return_val = self.compile(tuple(argtypes))

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\dispatcher.py", line 915, in compile

self._cache.save_overload(sig, cres)

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\caching.py", line 661, in save_overload

self._save_overload(sig, data)

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\caching.py", line 671, in _save_overload

self._cache_file.save(key, data)

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\caching.py", line 488, in save

self._save_index(overloads)

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\caching.py", line 532, in _save_index

data = self._dump(data)

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\caching.py", line 560, in _dump

return pickle.dumps(obj, protocol=-1)

File "C:\Users\john\AppData\Roaming\Python\Python37\site-packages\numba\core\types\functions.py", line 473, in __getnewargs__

raise ReferenceError("underlying object has vanished")

ReferenceError: underlying object has vanished

```

Running it again, it works?

| closed | 2021-07-09T15:45:27Z | 2021-07-09T16:11:34Z | https://github.com/polakowo/vectorbt/issues/189 | [] | jmrichardson | 2 |

iterative/dvc | data-science | 9,915 | `exp run --allow-missing`: runs stage unexpectedly | # Bug Report

## Description

`dvc exp run --allow-missing` runs stages even though they are unchanged other than missing files.

### Reproduce

```console

$ git clone git@github.com:iterative/example-get-started.git

$ cd example-get-started

$ dvc exp run --allow-missing -S train.min_split=0.005

Reproducing experiment 'plump-leak'

'data/data.xml.dvc' didn't change, skipping

Stage 'prepare' didn't change, skipping

WARNING: 'data/prepared' is empty.

WARNING: 'data/prepared' is empty.

Running stage 'featurize':

> python src/featurization.py data/prepared data/features

Traceback (most recent call last):

File "/private/tmp/example-get-started/src/featurization.py", line 136, in <module>

main()

File "/private/tmp/example-get-started/src/featurization.py", line 120, in main

generate_and_save_train_features(

File "/private/tmp/example-get-started/src/featurization.py", line 58, in generate_and_save_train_features

df_train = get_df(train_input)

^^^^^^^^^^^^^^^^^^^

File "/private/tmp/example-get-started/src/featurization.py", line 14, in get_df

df = pd.read_csv(

^^^^^^^^^^^^

File "/Users/dave/micromamba/envs/example-get-started/lib/python3.11/site-packages/pandas/io/parsers/readers.py", line 912, in read_csv

return _read(filepath_or_buffer, kwds)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/dave/micromamba/envs/example-get-started/lib/python3.11/site-packages/pandas/io/parsers/readers.py", line 577, in _read

parser = TextFileReader(filepath_or_buffer, **kwds)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/dave/micromamba/envs/example-get-started/lib/python3.11/site-packages/pandas/io/parsers/readers.py", line 1407, in __init__

self._engine = self._make_engine(f, self.engine)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/dave/micromamba/envs/example-get-started/lib/python3.11/site-packages/pandas/io/parsers/readers.py", line 1661, in _make_engine

self.handles = get_handle(

^^^^^^^^^^^

File "/Users/dave/micromamba/envs/example-get-started/lib/python3.11/site-packages/pandas/io/common.py", line 868, in get_handle

handle = open(handle, ioargs.mode)

^^^^^^^^^^^^^^^^^^^^^^^^^

FileNotFoundError: [Errno 2] No such file or directory: 'data/prepared/train.tsv'

ERROR: failed to reproduce 'featurize': failed to run: python src/featurization.py data/prepared data/features, exited with 1

```

### Expected

DVC should be skipping `featurize` since nothing changed in this stage.

DVC generates empty `data/prepared` and `data/features` dirs, which might be part of the problem?

### Environment information

**Output of `dvc doctor`:**

```console

$ dvc doctor

DVC version: 3.17.1.dev6+ge2aedb7e9

-----------------------------------

Platform: Python 3.11.4 on macOS-13.5-arm64-arm-64bit

Subprojects:

dvc_data = 2.8.1

dvc_objects = 0.24.1

dvc_render = 0.5.3

dvc_task = 0.3.0

scmrepo = 1.0.4

Supports:

azure (adlfs = 2023.4.0, knack = 0.11.0, azure-identity = 1.13.0),

gdrive (pydrive2 = 1.16.1),

gs (gcsfs = 2023.6.0),

hdfs (fsspec = 2023.6.0, pyarrow = 12.0.1),

http (aiohttp = 3.8.5, aiohttp-retry = 2.8.3),

https (aiohttp = 3.8.5, aiohttp-retry = 2.8.3),

oss (ossfs = 2021.8.0),

s3 (s3fs = 2023.6.0, boto3 = 1.26.161),

ssh (sshfs = 2023.7.0),

webdav (webdav4 = 0.9.8),

webdavs (webdav4 = 0.9.8),

webhdfs (fsspec = 2023.6.0)

Config:

Global: /Users/dave/Library/Application Support/dvc

System: /Library/Application Support/dvc

Cache types: <https://error.dvc.org/no-dvc-cache>

Caches: local

Remotes: https

Workspace directory: apfs on /dev/disk3s1s1

Repo: dvc, git

Repo.site_cache_dir: /Library/Caches/dvc/repo/d009e3e973ba4fa60c5080b78a58592a

``` | closed | 2023-09-05T17:53:44Z | 2023-09-07T14:02:00Z | https://github.com/iterative/dvc/issues/9915 | [

"p1-important",

"A: experiments"

] | dberenbaum | 2 |

FlareSolverr/FlareSolverr | api | 384 | [yggtorrent] (testing) Exception (yggtorrent): [...] Only Chrome at revision rlatest is guaranteed to work. | ### Environment

* **FlareSolverr version**: 2.2.4

* **Last working FlareSolverr version**: 2.2.4

* **Operating system**: CentOS 7

* **Are you using Docker**: yes

* **FlareSolverr User-Agent (see log traces or / endpoint)**: Mozilla/5.0 (X11; Linux x86_64; rv:94.0) Gecko/20100101 Firefox/94.0

* **Are you using a proxy or VPN?** no

* **Are you using Captcha Solver:** no

* **If using captcha solver, which one:**

* **URL to test this issue:** https://www5.yggtorrent.la/

### Description

Installed FlareSolverr from Docker

Installed Jackett from Docker

Configured Jackett to use FlareSolverr

Configured Jackett to use ygg with user/password auth

It worked a week ago

Now, when I click the test button, I get the error message

### Logged Error Messages

> 2022-04-25T14:14:52+02:00 INFO REQ-203 Cloudflare detected

> 2022-04-25T14:15:23+02:00 INFO REQ-203 Challenge solved

> 2022-04-25T14:16:02+02:00 INFO REQ-204 Incoming request => POST /v1 body: {"maxTimeout":55000,"cmd":"request.get","url":"https://www5.yggtorrent.la/engine/search?category=2140&name=&description=&file=&uploader=&sub_category=&do=search&order=desc&sort=publish_date"}

> 2022-04-25T14:16:08+02:00 WARN REQ-204 Page not reloaded (do not report!): Cause: TimeoutError: Navigation timeout of 18333.333333333332 ms exceeded

> 2022-04-25T14:16:33+02:00 ERROR REQ-204 Unexpected error: ProtocolError: Protocol error (Runtime.callFunctionOn): Target closed.

> 2022-04-25T14:16:44+02:00 ERROR REQ-204 TimeoutError: Timed out after 40000 ms while trying to connect to the browser! Only Chrome at revision rlatest is guaranteed to work.

> 2022-04-25T14:16:44+02:00 INFO REQ-204 Response in 42.246 s

> 2022-04-25T14:16:45+02:00 ERROR REQ-204 Error: Unable to process browser request. Error: Maximum timeout reached. maxTimeout=55000 (ms)

> 2022-04-25T14:16:45+02:00 INFO REQ-204 Response in 152.614 s

2022-04-25T16:01:38+02:00 WARN REQ-213 Page not reloaded (do not report!): Cause: Error: Navigation failed because browser has disconnected!

2022-04-25T16:01:38+02:00 ERROR REQ-213 Unexpected error: Error: Protocol error (Network.getCookies): Session closed. Most likely the page has been closed.

### Screenshots

| closed | 2022-04-25T12:24:02Z | 2022-07-30T22:10:28Z | https://github.com/FlareSolverr/FlareSolverr/issues/384 | [] | vipera7 | 3 |

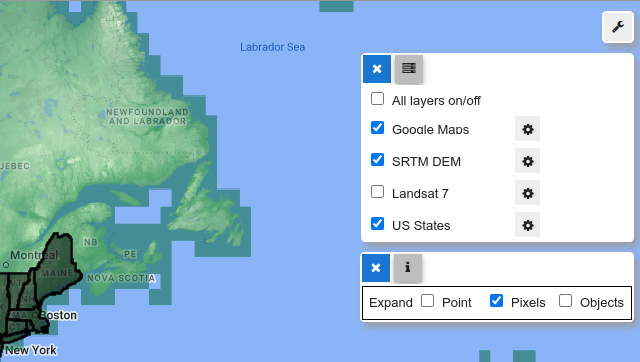

scikit-optimize/scikit-optimize | scikit-learn | 626 | Algorithm may not converge? | I'm using the package to do hyper parameter optimisation of a 3-layer neural network. When calling

> res_gp = gp_minimize(train_bayes, space, n_calls=200, n_random_starts=20, acq_func='EIps',

n_jobs=-1, verbose=True, random_state=random_seed)

and plotting the res_gp object, one plot suggests that the optimisation is indeed working, but a second plot raises some eyebrows and calls this into question.

If I am not mistaken (see also issue #624 ), lighter colours correspond to minima, yet the algorithm seems to try to stay away from minimal regions? Is this a bug? | open | 2018-02-01T11:01:59Z | 2019-08-27T02:42:53Z | https://github.com/scikit-optimize/scikit-optimize/issues/626 | [] | bstemper | 16 |

nteract/papermill | jupyter | 482 | Papermill adding unusual syntax to HTML stored in JSON | Hi Friends.

I've been using papermill for a while to execute jupyter notebooks in a CI framework. Thank you for your work on it as it's been a fantastic tool.

I'm running into an odd (I think?) bug. When I run a notebook with an interactive element that requires an iframe (case in point: creating a map using Leaflet via folium for Python), papermill seems to add some code that makes the output render incorrectly.

I just ran one of my notebooks. Prior to running papermill, the iframe embed looked like this:

The important piece here is that the `src=data:text/html` part is correctly populated in the json.

```

"<div style=\"width:100%;\"><div style=\"position:relative;width:100%;height:0;padding-bottom:60%;\"><iframe src=\"data:text/html;charset=utf-8;base64,

```

When I run papermill and create a new notebook, for some reason I get the following instead. Notice that the src now begins with about:blank.

```

"<div style=\"width:100%;\"><div style=\"position:relative;width:100%;height:0;padding-bottom:60%;\"><iframe src=\"about:blank\" style=\"position:absolute;width:100%;height:100%;left:0;top:0;border:none !important;\" data-html=PCFET0NUWVBFIGh0bWw+C

```

Can anyone shed some light on why this is happening? It happens with all of the notebooks that I run through papermill that use folium and have an iframe embed. I just updated from version 1.2 to 2.0 as a sanity check, and it still does the same thing.

Here is an example of a rendered page where you can see that the folium map iframes don't render properly. I have traced this error back to running papermill, as the JSON looks fine before running it. Any suggestions for fixing this are much appreciated!

https://www.earthdatascience.org/courses/scientists-guide-to-plotting-data-in-python/plot-spatial-data/customize-raster-plots/interactive-maps/

I am running papermill on a Mac with Python as follows (I am adding this in case I'm missing a parameter here):

`pm.execute_notebook(notebook, out_notebook)`

Our CI build runs on Linux, and the behavior is the same there.

many thanks | closed | 2020-03-23T23:44:20Z | 2020-04-20T16:06:51Z | https://github.com/nteract/papermill/issues/482 | [] | lwasser | 4 |

tox-dev/tox | automation | 2,524 | Dependency with "vulnerable" version of py | Hi all,

I couldn't find this reported yet (apologies if it's duplicate), but tox has a dependency with `py`, which is currently flagged as a vulnerability: https://nvd.nist.gov/vuln/detail/CVE-2022-42969 and therefore reported by tools like `safety` and `pip-audit`.

There is a lot of chatter in [here](https://github.com/pytest-dev/py/issues/287) about whether this should be considered a vulnerability in the first place and whether the vulnerability should be taken down. It doesn't sound like the `py` maintainers are going to _fix_ the affected code, instead they removed the dependency from `pytest` altogether by [vendoring](https://github.com/pytest-dev/pytest/pull/10396) the code they still needed.

Is this something that could be done in `tox` as well?
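To see which installed packages still declare `py` as a requirement, something like the following might help. It is a rough sketch using only the standard library; `requirement_name` and `dependents_of` are hypothetical helper names, and the name parsing is a simplification rather than a full requirement-specifier parser.

```python
import re
from importlib import metadata


def requirement_name(requirement: str) -> str:
    """Extract a normalized project name from a requirement string."""
    match = re.match(r"\s*([A-Za-z0-9][A-Za-z0-9._-]*)", requirement)
    if match is None:
        return ""
    # Normalize runs of "-", "_" and "." to a single "-" and lowercase the name.
    return re.sub(r"[-_.]+", "-", match.group(1)).lower()


def dependents_of(target: str) -> list[str]:
    """List installed distributions that declare `target` as a requirement."""
    found = []
    for dist in metadata.distributions():
        for requirement in dist.requires or []:
            if requirement_name(requirement) == target:
                name = dist.metadata.get("Name")
                if name:
                    found.append(name)
                break
    return sorted(set(found))
```

Calling `dependents_of("py")` in the environment where tox is installed would list the distributions that pull it in.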

Thanks in advance!

| closed | 2022-11-01T18:10:39Z | 2022-11-01T18:28:09Z | https://github.com/tox-dev/tox/issues/2524 | [

"bug:normal"

] | juanitosvq | 3 |

stanfordnlp/stanza | nlp | 690 | evaluation of trained models | Hello! Thanks so much for your amazing library. I have trained all the processors on my Persian data, following the Model Training and Evaluation documentation. I would like to run the trained models on some test data and evaluate them. I also need to save the prediction files so that I can go through them manually to check some specific cases. The documentation says that we can use ```bash scripts/run_ete.sh ${corpus} ${split}``` to evaluate the full parsing pipeline. However, there seems to be no file named ```run_ete.sh``` in this repository. I was wondering whether there is any update to this part of the documentation. I very much appreciate your help. | closed | 2021-05-05T20:59:21Z | 2021-06-17T17:19:51Z | https://github.com/stanfordnlp/stanza/issues/690 | [

"question"

] | royakabiri | 4 |

sqlalchemy/sqlalchemy | sqlalchemy | 10,850 | [MySQL] Nullability of generated columns is not inspected correctly | ### Describe the bug

The inspector does not pick up the nullability of generated columns for MySQL.

### Optional link from https://docs.sqlalchemy.org which documents the behavior that is expected

_No response_

### SQLAlchemy Version in Use

2.0.22

### DBAPI (i.e. the database driver)

MySQL

### Database Vendor and Major Version

MySQL 8

### Python Version

Python 3.11

### Operating system

Linux

### To Reproduce

```python
import os

from sqlalchemy import sql, create_engine, inspect

# Create a table with a generated column in MySQL
engine = create_engine(os.environ["DB_URL"])
conn = engine.connect()
ddl = """CREATE TABLE IF NOT EXISTS mytable (
my_generated_column int GENERATED ALWAYS AS (1234) VIRTUAL NOT NULL
);"""
conn.execute(sql.text(ddl))

# Try inspecting the generated column
inspector = inspect(conn)
cols = inspector.get_columns("mytable")
cols = [col for col in cols if col["name"] == "my_generated_column"]
assert cols[0]["nullable"] == False
```

### Error

```
Traceback (most recent call last):
  File "reproduction.py", line 19, in <module>
    assert cols[0]["nullable"] == False
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^
AssertionError
```

### Additional context

_No response_ | closed | 2024-01-09T13:27:00Z | 2024-01-23T18:17:51Z | https://github.com/sqlalchemy/sqlalchemy/issues/10850 | [

"bug",

"mysql",

"reflection",

"PRs (with tests!) welcome"

] | GeorchW | 5 |

KaiyangZhou/deep-person-reid | computer-vision | 118 | What is the expected output in the results folder when visualizing the ranks? | When visualizing the ranks, I get a probe folder and 20 gallery folders, and there is more than one person identity in both the gallery and probe folders.

My question is: how can I make sure the results are correct? Can you explain the expected results?

Thank you. | closed | 2019-03-01T17:17:43Z | 2019-03-18T16:12:57Z | https://github.com/KaiyangZhou/deep-person-reid/issues/118 | [] | muna-cs | 1 |

MagicStack/asyncpg | asyncio | 565 | Error when importing after compiling with GCC 10 | * **asyncpg version**: asyncpg-0.21.0.dev0+7f5c2a2 (same for 0.20.1)

* **PostgreSQL version**: does not matter

* **Do you use a PostgreSQL SaaS? If so, which? Can you reproduce the issue with a local PostgreSQL install?**: does not matter

* **Python version**: 3.8.2 and 3.9.0a5+

* **Platform**: Fedora 32 x64/aarch64 and Alpine Linux 3.12 alpha x64/aarch64

* **Do you use pgbouncer?**: does not matter

* **Did you install asyncpg with pip?**: no

* **If you built asyncpg locally, which version of Cython did you use?**: 0.29.16 and 3.0a2

* **Can the issue be reproduced under both asyncio and [uvloop](https://github.com/magicstack/uvloop)?**: does not matter

I have tested this on the following scenarios:

Fedora 32 x86_64, Python 3.8.2, GCC 10.0.1 20200328 (Red Hat 10.0.1-0.11) from official repos

Fedora 33 (rawhide) aarch64, Python 3.8.2, GCC 10.0.1 20200420 (Red Hat 10.0.1-0.12) from official repos

Alpine Linux 3.12 alpha x86_64, Python 3.9a5, GCC 10.0.1 20200427 from source (builds at https://ftp.travitia.xyz/alpine/x86_64/)

Alpine Linux 3.12 alpha aarch64, Python 3.9a5, GCC 10.0.1 20200426 from source (builds at https://ftp.travitia.xyz/alpine/aarch64/)

All have been tested twice with Cython 0.29.16 and Cython 3.0a2.

Whenever I compile asyncpg with GCC 9.3 on any of above scenarios, it compiles fine and runs fine.

Whenever I use GCC 10 in any of above scenarios, it *does build fine*, but importing it gives me:

```py
>>> import asyncpg
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/home/jens/.local/lib/python3.8/site-packages/asyncpg/__init__.py", line 8, in <module>
    from .connection import connect, Connection # NOQA
  File "/home/jens/.local/lib/python3.8/site-packages/asyncpg/connection.py", line 19, in <module>
    from . import connect_utils
  File "/home/jens/.local/lib/python3.8/site-packages/asyncpg/connect_utils.py", line 28, in <module>
    from . import protocol
  File "/home/jens/.local/lib/python3.8/site-packages/asyncpg/protocol/__init__.py", line 8, in <module>
    from .protocol import Protocol, Record, NO_TIMEOUT # NOQA
  File "asyncpg/protocol/protocol.pyx", line 1, in init asyncpg.protocol.protocol
ImportError: /home/jens/.local/lib/python3.8/site-packages/asyncpg/pgproto/pgproto.cpython-38-x86_64-linux-gnu.so: undefined symbol: uuid_to_hex
```

This is weird, as readelf shows:

```sh
$ readelf -a /home/jens/.local/lib/python3.8/site-packages/asyncpg/pgproto/pgproto.cpython-38-x86_64-linux-gnu.so | grep uuid_to_hex
000000055d90 004f00000007 R_X86_64_JUMP_SLO 0000000000000000 uuid_to_hex + 0
79: 0000000000000000 0 NOTYPE GLOBAL DEFAULT UND uuid_to_hex
1176: 0000000000000000 0 NOTYPE GLOBAL DEFAULT UND uuid_to_hex
```

I don't know C much, but I have seen that uuid_to_hex is defined in the code for pgproto, so I have no clue how this happens.
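For what it's worth, the `UND` in the symbol rows above is the section-index column, which marks `uuid_to_hex` as undefined in this shared object (the loader expects some other object to provide it). The sketch below lists every such symbol from saved `readelf` output. `undefined_symbols` is a hypothetical helper, and the regular expression assumes the usual symbol-table column order (Num, Value, Size, Type, Bind, Vis, Ndx, Name).

```python
import re

# Matches symbol-table rows such as:
#   79: 0000000000000000 0 NOTYPE GLOBAL DEFAULT UND uuid_to_hex
_SYMBOL_ROW = re.compile(
    r"^\s*\d+:\s+[0-9a-fA-F]+\s+\S+\s+\w+\s+\w+\s+\w+\s+(\S+)\s+(\S+)"
)


def undefined_symbols(readelf_output: str) -> list[str]:
    """Return the names of symbols whose section index is UND, in first-seen order."""
    names = []
    for line in readelf_output.splitlines():
        match = _SYMBOL_ROW.match(line)
        if match is None:
            continue  # relocation rows, headers, and other sections are skipped
        ndx, name = match.group(1), match.group(2)
        name = name.split("@")[0]  # drop a version suffix such as @GLIBC_2.2.5
        if ndx == "UND" and name not in names:
            names.append(name)
    return names
```

Comparing this list between the GCC 9.3 build and the GCC 10 build might show whether `uuid_to_hex` is the only symbol affected.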

FYI: On all scenarios, I am able to compile and use uvloop and cpython 3.9 without any errors.

EDIT: Same issue with Alpine Linux 3.12 alpha, Python 3.9a6, Cython 3.0a3 and GCC 10 20200430 | closed | 2020-04-28T15:33:44Z | 2020-06-26T18:20:55Z | https://github.com/MagicStack/asyncpg/issues/565 | [] | Gelbpunkt | 3 |

SciTools/cartopy | matplotlib | 1,630 | Crimea is wrongly assigned to Russia | I wanted to use your library to make some heatmaps at the country level.

I found that the shapes for Ukraine and Russia are wrong: in your shapes, Crimea belongs to Russia.

This obviously contradicts the international understanding. With this mistake, you implicitly push all library users to break international law in their graphs.

I hope you will fix this quickly.

| closed | 2020-08-06T17:59:50Z | 2020-08-06T18:20:24Z | https://github.com/SciTools/cartopy/issues/1630 | [] | johngull | 2 |

wger-project/wger | django | 1,424 | Website terms of service are empty | https://wger.de/en/software/terms-of-service

The page is empty, in both German and English.

I told a guy on Reddit about this project and how he might contribute and get exercises. He liked it, but he mentioned that the imprint and the ToS are empty, which did not sit well with him. | open | 2023-09-11T06:19:16Z | 2023-09-11T12:01:14Z | https://github.com/wger-project/wger/issues/1424 | [] | natrius | 1 |

open-mmlab/mmdetection | pytorch | 11,144 | How to generate COCO format annotations on a custom dataset | I recently encountered a problem: I fail to generate a COCO format JSON file, despite having set `format_only=True` in the test_evaluator. I have checked the previous issues and found several similar questions, but the related functions have since been removed. Therefore, I wonder whether it is possible to provide a tutorial on this issue. Thank you so much in advance. | open | 2023-11-08T14:32:00Z | 2023-11-08T14:32:00Z | https://github.com/open-mmlab/mmdetection/issues/11144 | [] | CDchenlin | 0 |
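As a point of reference for the question above, a COCO-style annotation file can also be assembled by hand. This is a minimal sketch that does not use any mmdetection API: `to_coco` and the input record layout (`file_name`, `width`, `height`, `boxes`) are assumptions made for illustration, and only the common detection fields of the COCO format are filled in.

```python
import json


def to_coco(records: list[dict], category_names: list[str]) -> dict:
    """Build a COCO-style detection dict from simple per-image records.

    Each record is assumed to look like:
      {"file_name": str, "width": int, "height": int,
       "boxes": [(label, (x, y, w, h)), ...]}
    """
    categories = [{"id": i + 1, "name": name} for i, name in enumerate(category_names)]
    category_ids = {c["name"]: c["id"] for c in categories}
    images, annotations = [], []
    for image_id, record in enumerate(records, start=1):
        images.append({
            "id": image_id,
            "file_name": record["file_name"],
            "width": record["width"],
            "height": record["height"],
        })
        for label, (x, y, w, h) in record["boxes"]:
            annotations.append({
                "id": len(annotations) + 1,  # annotation ids are unique across images
                "image_id": image_id,
                "category_id": category_ids[label],
                "bbox": [x, y, w, h],  # COCO boxes are [x, y, width, height]
                "area": w * h,
                "iscrowd": 0,
            })
    return {"images": images, "annotations": annotations, "categories": categories}
```

The result can be written out with `json.dump(to_coco(records, names), open("annotations.json", "w"))`, where the output path is a placeholder.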

sczhou/CodeFormer | pytorch | 373 | Question on Training Steps and Cross entropy Loss for Stage 2 | Your work is truly impressive! The clarity and depth you bring to the subject are remarkable!

Could you please let me know how many **training steps** are required in stage 2 and how small the **Cross Entropy Loss** should be to achieve normal images?

I use the pretrained VQGAN provided by the authors to start the stage-2 training, but it seems that it could not generate a normal image. I have trained for 70k steps with a batch size of 8 and a learning rate of 4e-5. My loss figure and results follow:

Please, kind-hearted person, help me. I don't know what the problem is. | open | 2024-05-23T09:13:04Z | 2025-02-17T13:15:30Z | https://github.com/sczhou/CodeFormer/issues/373 | [] | lxxie298 | 3 |

browser-use/browser-use | python | 214 | Outdated workflow actions | The GitHub Actions workflow still uses checkout@v3 and Python@v3. These have been outdated for a while now, and new versions have been published. | closed | 2025-01-11T19:01:07Z | 2025-01-19T23:53:30Z | https://github.com/browser-use/browser-use/issues/214 | [] | sushil959465 | 0 |

nvbn/thefuck | python | 1,425 | Support for Windows CMD | When I run "fuck" on windows cmd I get

"Seems like fuck alias isn't configured!"

But the help does not show how to configure thefuck for windows cmd, only for powershell.

The latest commit shows that there is support for windows cmd

https://github.com/nvbn/thefuck/commit/3cd187a3bb47351890ac7308464e1a2780507220

But I guess that, since the latest release is from January and the commit is from July, a version that supports Windows CMD hasn't been released yet.

---

The output of `thefuck --version` (something like `The Fuck 3.1 using Python 3.5.0 and Bash 4.4.12(1)-release`):

thefuck 3.32 for windows

Your system (Debian 7, ArchLinux, Windows, etc.):

Windows 11 CMD